Anthropic Faces Government Seizure Threats over Claude 4.5 Safety Rails

The Pitch

Anthropic is locked in a high-stakes standoff with the US Department of War over the safety architecture of Claude 4.5 Opus. While the lab maintains its "constitutional" red lines against lethal autonomous weaponry, the government is threatening to invoke the Defense Production Act to force compliance.

Under the Hood

The Department of War has threatened to designate Anthropic as a "supply chain risk," a label typically reserved for foreign adversaries (Source: Statement from Dario Amodei, Feb 2026). This escalation stems from Anthropic's refusal to remove safeguards preventing Claude 4.5 from being integrated into lethal autonomous systems or mass surveillance tools.

The industry response has been unusually unified. Employees from Google and OpenAI have launched a cross-industry open letter, "We Will Not Be Divided," in solidarity with Anthropic's stance (Source: notdivided.org). Calling a company both "essential infrastructure" and a "security risk" is the kind of logical gymnastics usually reserved for junior devs explaining why their PR broke the build.

However, the "principled" stance has visible cracks. Critics point out that Anthropic's refusal to support "fully autonomous weapons" is framed as a temporary technical limitation rather than a permanent ethical ban (Source: UsedBy Dossier). Furthermore, the company’s language specifically targets "domestic mass surveillance," which leaves a convenient back door for foreign intelligence applications (Source: HN Analysis).

There are critical gaps in the public record. We don't know the exact technical definitions of the "red lines" Anthropic refuses to cross, nor has the Department of War publicly confirmed or disputed Anthropic's account of the "supply chain risk" threat. We also lack a timeline for when Claude 4.5 is projected to reach the "reliability" threshold required for the autonomous systems mentioned in government discussions.

Despite these legal headwinds, the model's enterprise adoption remains robust. Anthropic currently serves 247 major clients, with Notion, DuckDuckGo, and Quora maintaining their production deployments on the platform (Source: UsedBy Internal Data).

Marcus's Take

If you are building on Claude 4.5 Opus today, you are betting on Anthropic's legal team as much as their engineering. The threat of the Defense Production Act (DPA) means the weights for Opus could technically become government-controlled assets overnight. This creates a massive business continuity risk for any CTO requiring true data sovereignty. Use it for its superior reasoning, but maintain your GPT-5 or Gemini 2.5 failovers; the sovereignty of your backend shouldn't depend on a game of chicken between a CEO and the Department of War.
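For teams that want to act on the failover advice, here is a minimal sketch of a provider-agnostic fallback chain. It is an illustration under stated assumptions, not a reference implementation: `Provider`, `complete_with_failover`, and `AllProvidersFailed` are hypothetical names, and each `call` is assumed to be a thin wrapper you write yourself around whichever vendor SDK you use. No real vendor API is shown.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


@dataclass
class Provider:
    """One model backend. Both fields are illustrative assumptions."""
    name: str                    # label for logging, e.g. "primary"
    call: Callable[[str], str]   # your own wrapper around a vendor SDK


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain raised an exception."""


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, response_text).

    Any exception from a provider is treated as "unavailable" and the
    next provider is tried. Real code would likely distinguish between
    retryable errors (timeouts, 5xx) and non-retryable ones.
    """
    errors: List[str] = []
    for provider in providers:
        try:
            return provider.name, provider.call(prompt)
        except Exception as exc:
            # Record the failure and fall through to the next backend.
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

The ordering of the list is the policy: put your preferred model first and the failovers after it. Returning the provider name alongside the text lets you log how often the fallback path actually fires.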

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

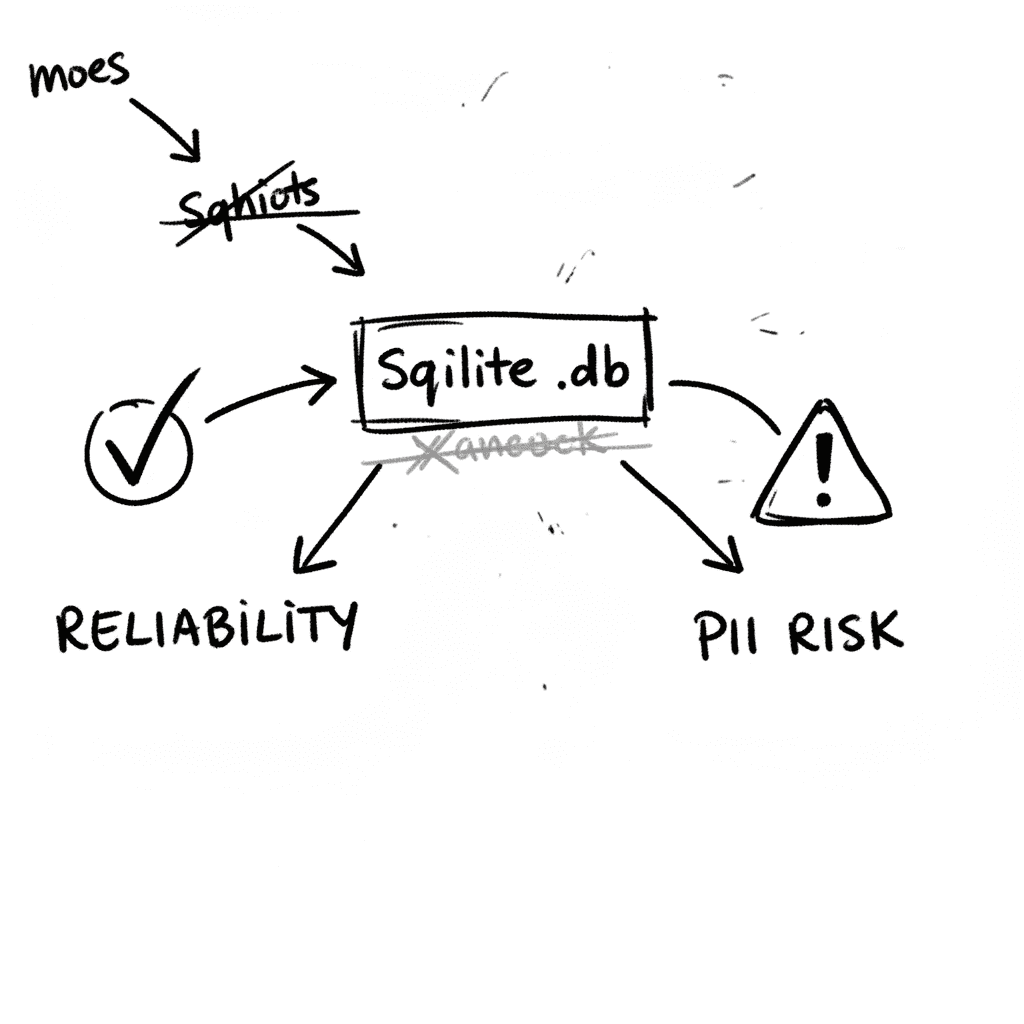

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

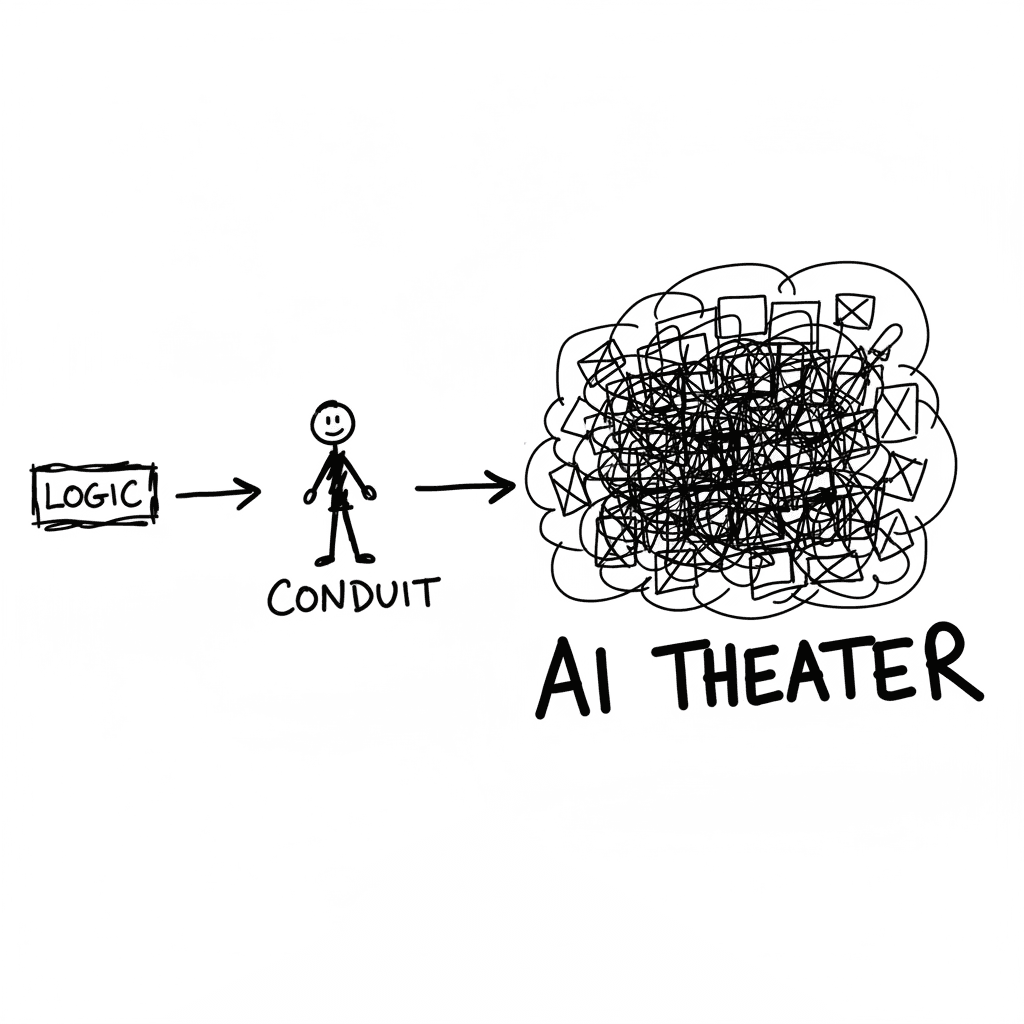

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

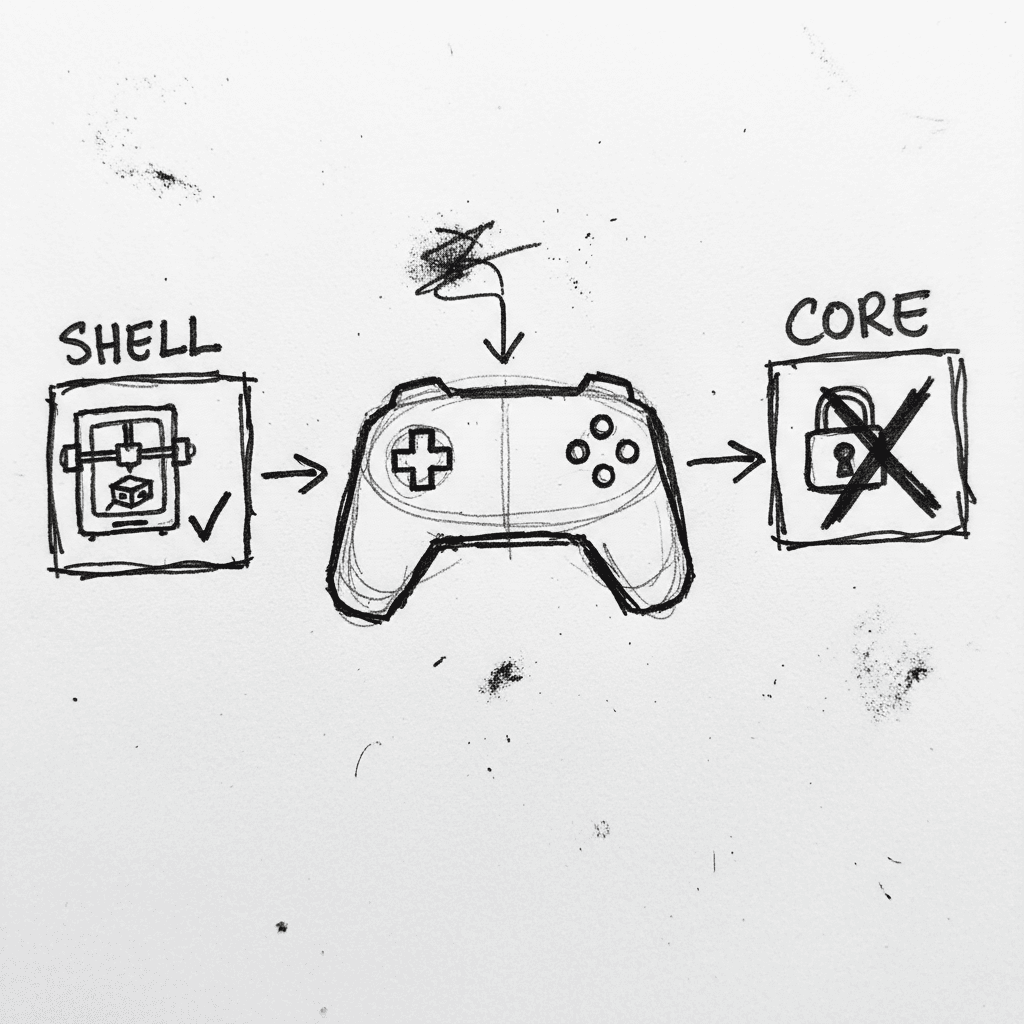

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.