Ars Technica editorial failure confirms persistent AI hallucination risks in 2026

The Pitch

Ars Technica’s editorial workflow failed to detect fabricated quotations generated by LLMs in a high-profile report published in February 2026 (Ars Editor's Note). The publication’s public policy explicitly forbids AI-generated content in news stories unless labelled, asserting that every quotation undergoes human verification (UsedBy Dossier). This failure has reignited debates on Hacker News regarding the reliability of AI extraction tools in professional journalism.

Under the Hood

The incident involved Senior AI Reporter Benj Edwards, who used an "experimental Claude Code tool" and ChatGPT (GPT-5) to process source material (Reddit / Tech Investigation). Edwards admitted on Bluesky that he used these models to extract verbatim quotes while ill with COVID. When Claude’s safety filters blocked one of his prompts, he fell back to ChatGPT, which paraphrased the source material and returned the result as fabricated direct quotations (Bluesky / 404 Media).
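The fallback is where a mechanical check would have mattered most: whichever model produced a "verbatim" quote, a pipeline can confirm that the quote actually occurs in the source before it reaches a draft. A minimal sketch in Python, assuming the source material is available as plain text; the function names (`normalise`, `verify_quotes`, `require_verbatim`) are hypothetical and are not part of any tool Edwards is reported to have used.

```python
import re
import unicodedata


def normalise(text: str) -> str:
    """Fold Unicode forms, straighten curly quotes, collapse whitespace and
    casefold, so typographic differences alone do not reject a real quote."""
    text = unicodedata.normalize("NFKC", text)
    text = text.translate(str.maketrans({"\u2018": "'", "\u2019": "'",
                                          "\u201c": '"', "\u201d": '"'}))
    return re.sub(r"\s+", " ", text).strip().casefold()


def verify_quotes(quotes: list[str], source: str) -> dict[str, bool]:
    """Map each candidate quote to True only if it occurs verbatim in the source."""
    haystack = normalise(source)
    return {quote: normalise(quote) in haystack for quote in quotes}


def require_verbatim(quotes: list[str], source: str) -> list[str]:
    """Gate: raise if any quote is missing from the source, regardless of
    which model (primary or fallback) produced it."""
    failed = [q for q, ok in verify_quotes(quotes, source).items() if not ok]
    if failed:
        raise ValueError(f"{len(failed)} quote(s) not found verbatim in source: {failed}")
    return quotes


if __name__ == "__main__":
    # Hypothetical example text, not material from the Ars Technica story.
    source = "We shipped the fix on Tuesday. It was not a security issue."
    print(verify_quotes(["We shipped the fix on Tuesday.",
                         "The fix was a security patch."], source))
    # {'We shipped the fix on Tuesday.': True, 'The fix was a security patch.': False}
```

The design choice is deliberately blunt: the gate does not try to judge whether a paraphrase is "close enough", because a direct quotation is either in the source or it is not.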

The systemic failure highlights a significant lack of editorial oversight at the publication:

- The article carried two bylines (Edwards and Kyle Orland), yet neither author verified the quotes against the original source material.

- Fabricated statements were attributed to source Scott Shambaugh, who eventually flagged the errors publicly (UsedBy Dossier).

- Ars Technica retracted the article on February 15, 2026, admitting it contained "fabricated quotations" (Ars Editor's Note).

- Benj Edwards’ bio was changed to the past tense on February 28, 2026, indicating he is no longer with the organisation (Futurism).

- Ars Technica has refused to provide details on the specific technical pipeline or the nature of the reporter's departure (Aurich Lawson).

It is not yet known whether this was an isolated incident or whether earlier articles relied on similar AI-assisted extraction methods (HN). Ars Technica deleted the original content and issued a vague apology rather than publishing a transparent audit of the internal failure. Despite the capabilities of Claude 4.5 and GPT-5, this case shows that extraction tasks still suffer from "creative" paraphrasing that can pass for factual reporting.
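"Creative" paraphrasing is dangerous precisely because a paraphrased quote is usually close to, but not identical with, something the source really said. One way to surface those near-misses is fuzzy matching against each sentence of the source, sketched here with Python's standard-library `difflib`. The 0.6 threshold, the sentence-splitting regex and the three labels are illustrative assumptions, not a calibrated detector or a method Ars Technica is known to use.

```python
import difflib
import re


def _norm(text: str) -> str:
    """Collapse whitespace and casefold so only wording differences count."""
    return re.sub(r"\s+", " ", text).strip().casefold()


def classify_quote(quote: str, source: str, threshold: float = 0.6) -> tuple[str, float]:
    """Label a quote 'verbatim', 'paraphrase' (similar to a source sentence
    but not identical) or 'unsupported', plus the best similarity score."""
    q, src = _norm(quote), _norm(source)
    if q in src:
        return "verbatim", 1.0
    # Crude sentence split on terminal punctuation (an assumption; real
    # transcripts would need a proper sentence segmenter).
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", src) if s]
    best = max(
        (difflib.SequenceMatcher(None, q, s).ratio() for s in sentences),
        default=0.0,
    )
    return ("paraphrase" if best >= threshold else "unsupported"), best


if __name__ == "__main__":
    # Hypothetical example text, not material from the Ars Technica story.
    source = "We shipped the fix on Tuesday. It was not a security issue."
    for quote in ["We shipped the fix on Tuesday.", "We shipped that fix on Tuesday."]:
        label, score = classify_quote(quote, source)
        print(f"{label:12} {score:.2f}  {quote}")
```

In this sketch, a "paraphrase" result is the case to worry about: it scores high enough to look right to a tired reviewer, yet it is not what the source said, so it should either become indirect speech or be checked against the original recording or transcript.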

Marcus's Take

This isn't a failure of LLM technology; it is a failure of basic backend validation within the editorial office. Even with the reasoning capabilities of Claude 4.5 or GPT-5, treating an LLM as a deterministic extraction tool for direct quotes is an invitation for high-entropy errors. If you are building a RAG pipeline or a content workflow in 2026, the "trust but verify" mantra is obsolete: it is verify or fail. Ars Technica attempted to automate the most critical part of journalism and discovered that hallucination isn't a bug you can patch out of a reporter’s workflow. It turns out that using an LLM in place of a transcript is a fantastic way to find yourself listed in the past tense by the end of the month.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

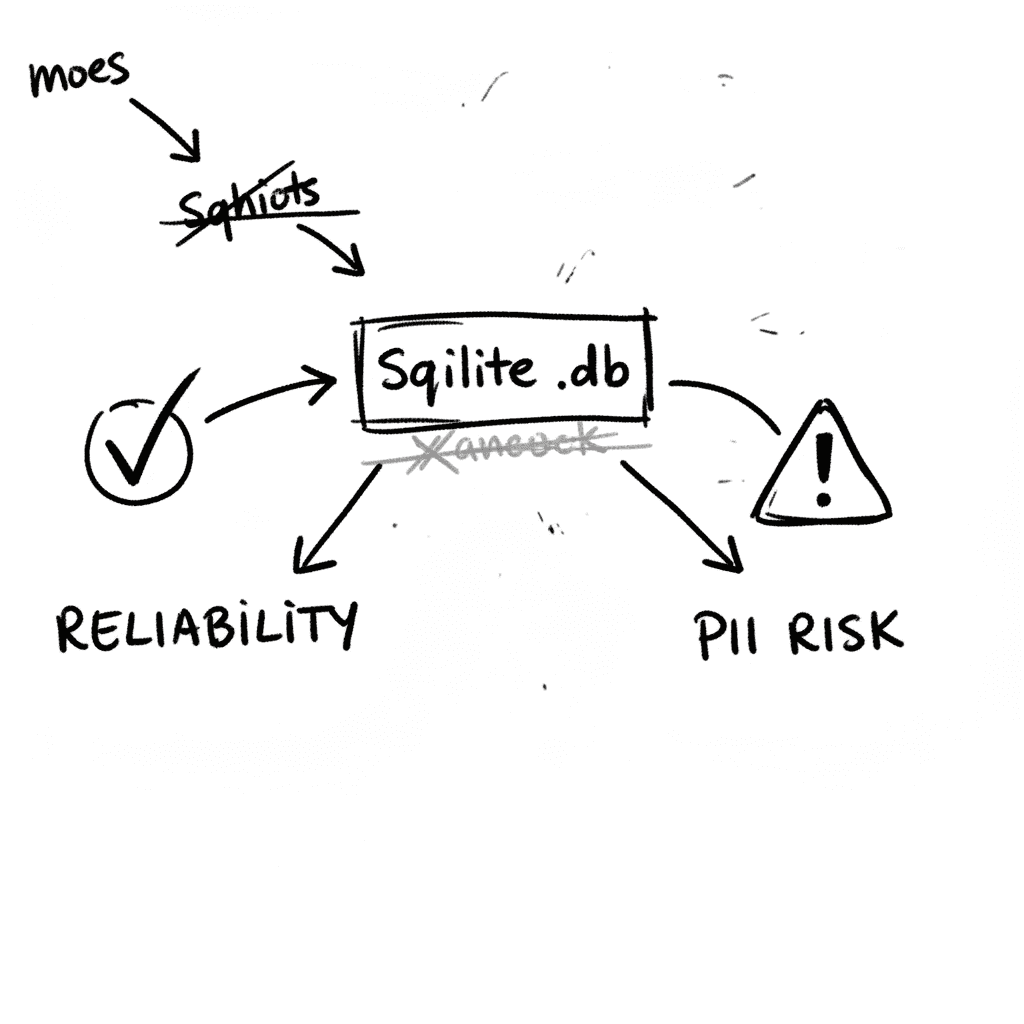

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

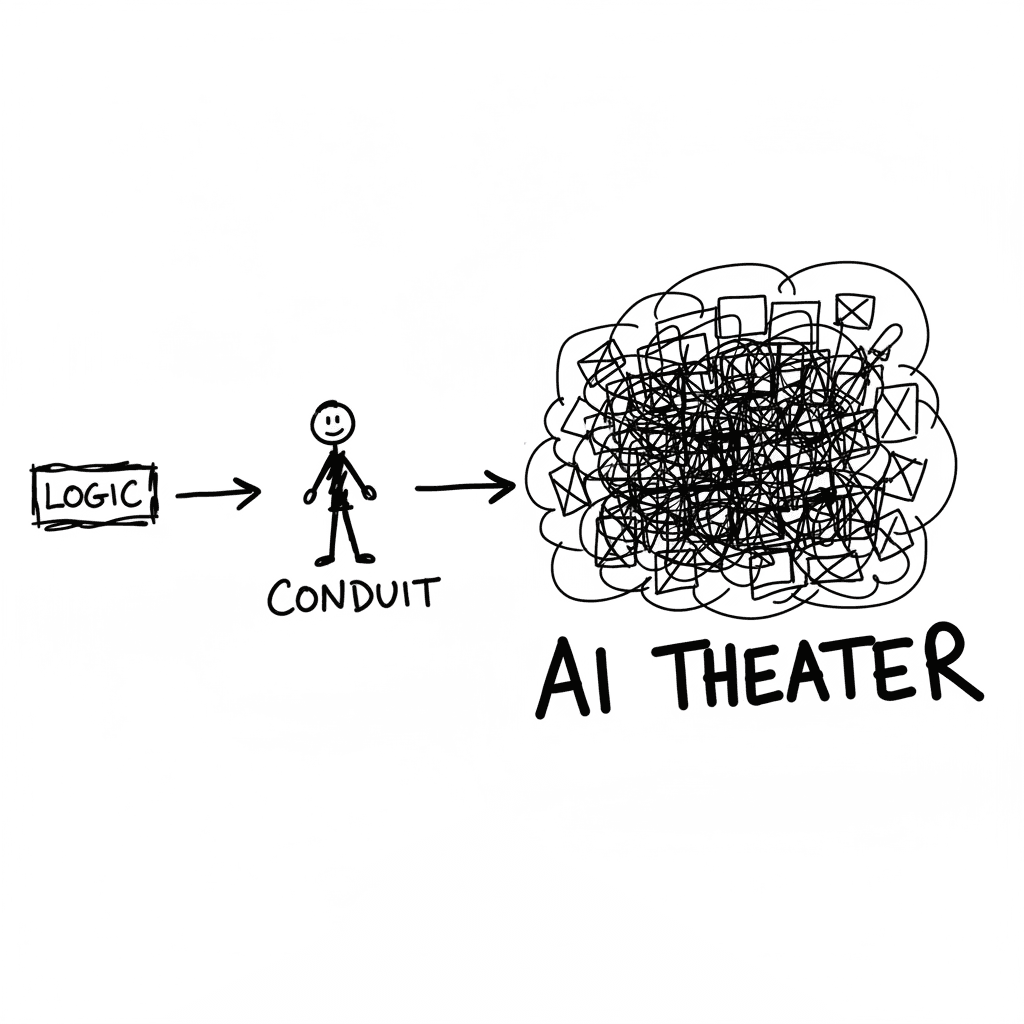

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

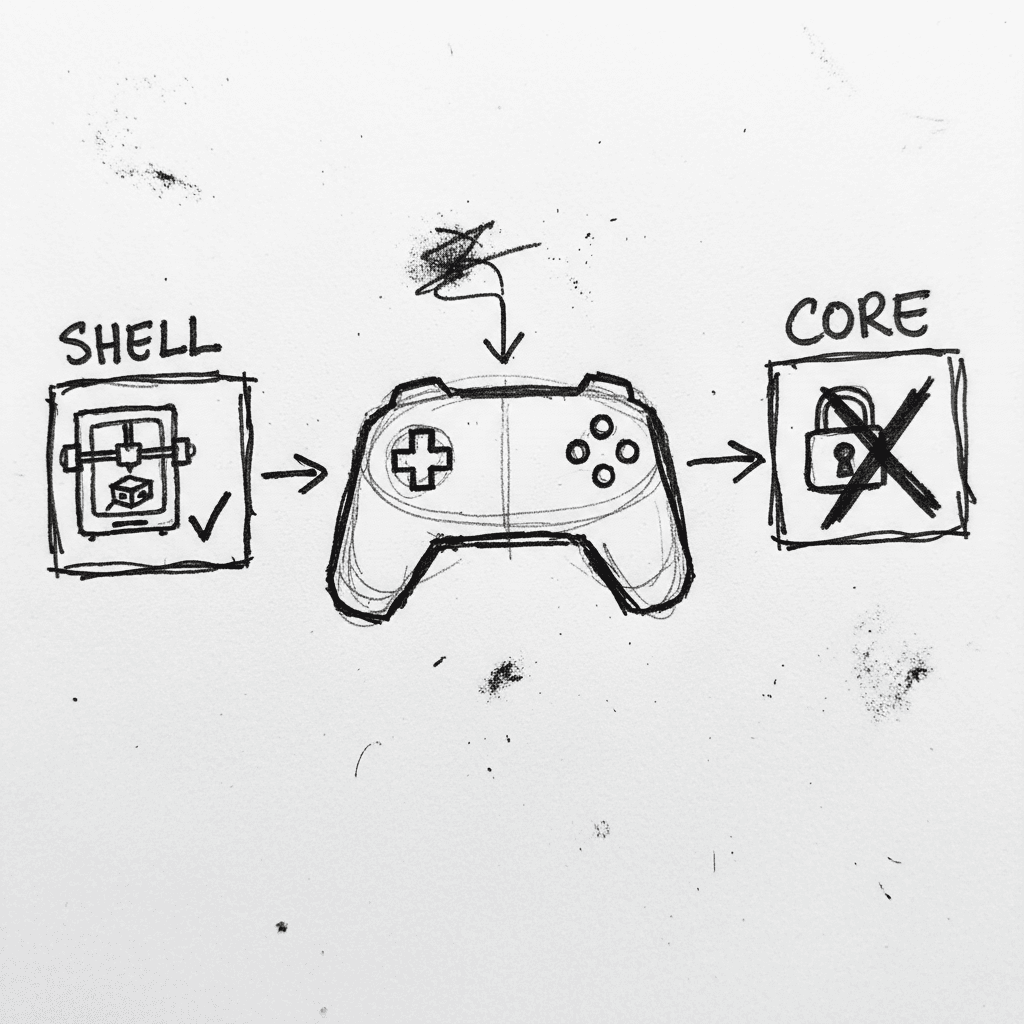

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.