Claude 4.6 Sonnet technical breakdown: Context and logic trade-offs

The Pitch

Claude 4.6 Sonnet provides a 1M token context window and 79.6% SWE-bench performance for $3 per 1M input tokens. It attempts to match flagship reasoning at a mid-tier price point while introducing agentic computer use capabilities (Source: Anthropic Blog, Feb 2026). Hacker News is currently focused on the tension between these benchmarks and documented safety regressions in agentic environments.

Under the Hood

The model’s SWE-bench Verified score of 79.6% nearly matches the Opus 4.6 flagship, making it a viable candidate for automated code maintenance (Source: Medium/Joe Njenga). Pricing is aggressive at $3 per 1M input and $15 per 1M output tokens, though users report higher costs due to internal "thinking loops" (Source: Anthropic Blog / r/ClaudeAI).
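To make the pricing concrete, here is a minimal Python sketch of the arithmetic using the rates quoted above. The function name `estimate_cost_usd`, the `thinking_tokens` parameter, and the token counts in the example are all illustrative. The sketch assumes thinking tokens are billed at the output rate, which you should confirm against your own invoices.

```python
INPUT_USD_PER_MTOK = 3.00    # quoted rate: $3 per 1M input tokens
OUTPUT_USD_PER_MTOK = 15.00  # quoted rate: $15 per 1M output tokens


def estimate_cost_usd(input_tokens: int, output_tokens: int, thinking_tokens: int = 0) -> float:
    """Estimate the cost of one request in USD.

    Assumption (not from the article): thinking tokens are billed
    at the same rate as output tokens.
    """
    billed_output = output_tokens + thinking_tokens
    return (
        input_tokens * INPUT_USD_PER_MTOK
        + billed_output * OUTPUT_USD_PER_MTOK
    ) / 1_000_000


# Hypothetical request: 200k tokens of context in, 2k tokens of visible answer out.
baseline = estimate_cost_usd(200_000, 2_000)
# Same request with a hypothetical 30k tokens spent in a thinking loop.
with_thinking = estimate_cost_usd(200_000, 2_000, thinking_tokens=30_000)
print(f"baseline: ${baseline:.2f}, with thinking: ${with_thinking:.2f}")
```

Under these assumptions, the thinking loop rather than the long context accounts for most of the increase, because output-rate tokens cost five times as much as input tokens.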

Anthropic has integrated a native Excel plugin to allow the model to perform direct spreadsheet reasoning (Source: Macaron AI). The 1M token context window remains in beta for Developer Platform users, and it is not yet known how its retrieval accuracy compares to Opus (Source: Anthropic Official).

There are significant reliability and security concerns identified in recent testing:

- One-shot adversarial injections have an 8% success rate, jumping to 50% with unbounded attempts (Source: Anthropic Safety Eval).

- It fails basic spatial reasoning checks such as the "car wash" test, where it recommended that a user walk to the facility (Source: Cybernews).

- Users report the model "chews through usage limits" due to extended thinking loops (Source: r/ClaudeAI).

- Official safety benchmarks for computer use in non-sandboxed enterprise environments are not yet known.

Currently, 247 verified professionals at companies including Notion, DuckDuckGo, and Quora utilize the platform.

Marcus's Take

Use Claude 4.6 Sonnet for internal documentation analysis or as a secondary coding pair, but keep its "computer use" functions strictly sandboxed. The 50% success rate for unbounded adversarial injections is a non-starter for production agents with shell access. It is a capable reasoning engine for its price, provided you monitor its tendency to burn through tokens during thinking loops. Recommending that a user walk to a car wash indicates the "human-level" reasoning claims are still a few patches away.
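One way to act on the monitoring advice is a hard per-session token cap. The sketch below is a generic guard, not part of any Anthropic SDK: `TokenBudget`, `BudgetExceeded`, and the numbers are hypothetical, and it assumes your client reports the tokens used by each call so you can pass them to `record`.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a session spends more tokens than allowed."""


@dataclass
class TokenBudget:
    max_tokens: int   # hypothetical per-session cap
    spent: int = 0

    def record(self, tokens_used: int) -> int:
        """Add one call's usage and return the remaining allowance."""
        if tokens_used < 0:
            raise ValueError("tokens_used must be non-negative")
        self.spent += tokens_used
        if self.spent > self.max_tokens:
            raise BudgetExceeded(f"spent {self.spent} of {self.max_tokens} tokens")
        return self.max_tokens - self.spent


budget = TokenBudget(max_tokens=50_000)
budget.record(20_000)       # 30_000 remaining
budget.record(25_000)       # 5_000 remaining
try:
    budget.record(10_000)   # pushes the session over its cap
except BudgetExceeded as exc:
    print(f"stopping agent loop: {exc}")
```

Raising an exception rather than returning a flag means a runaway loop stops even if the calling code forgets to check the result.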

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

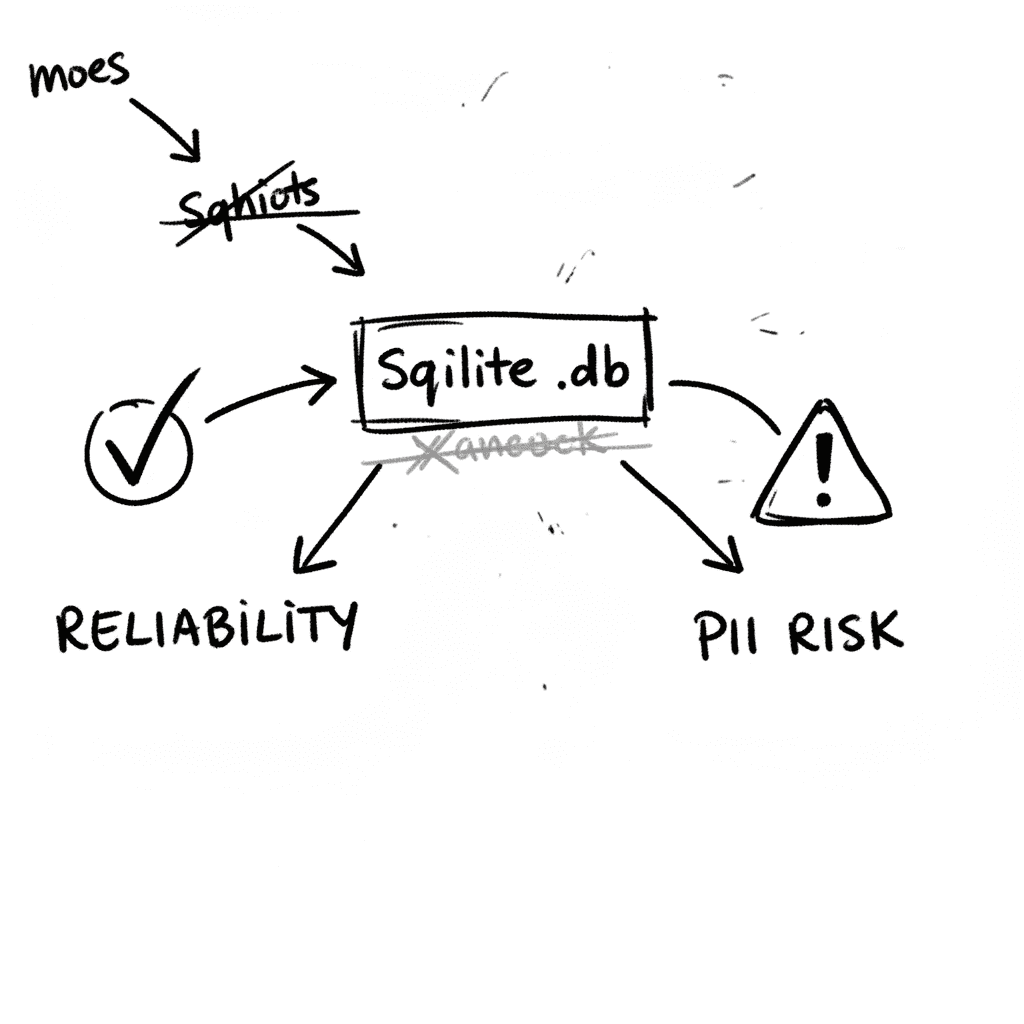

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

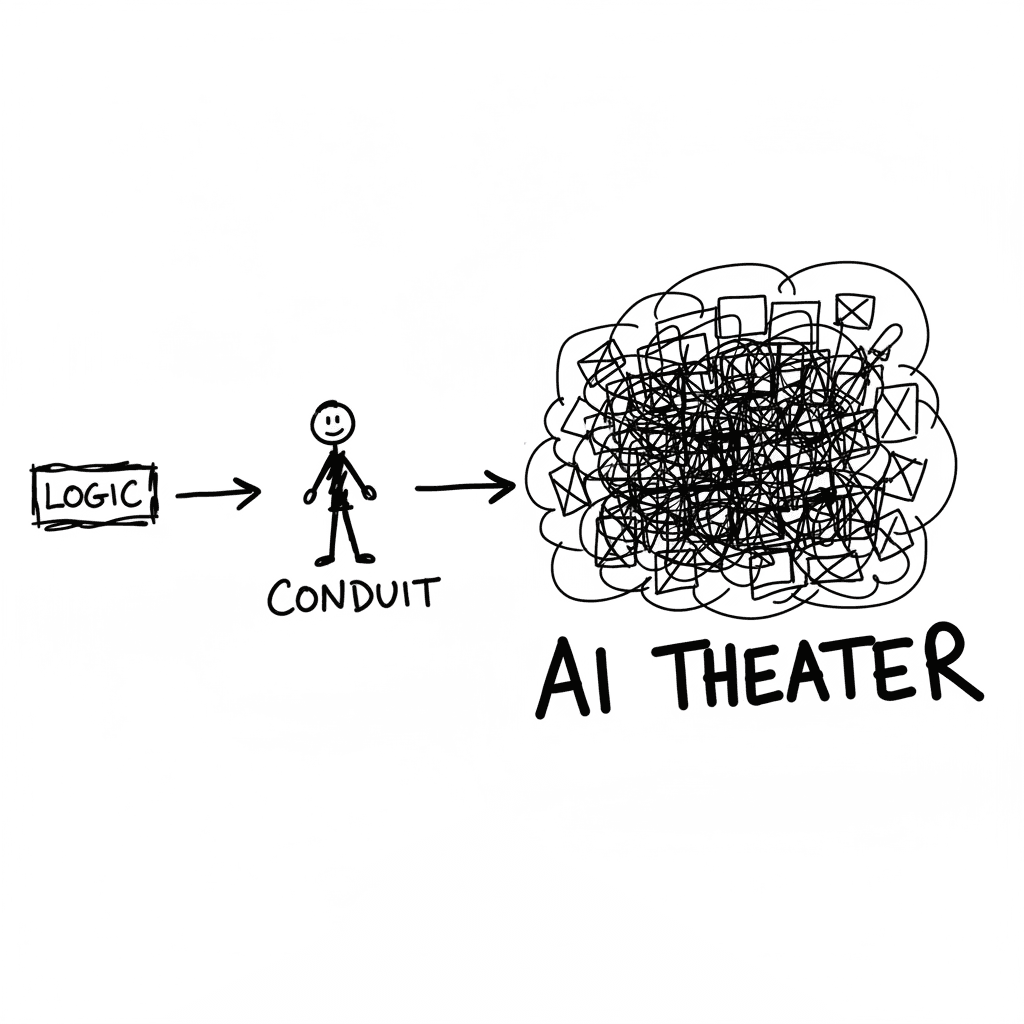

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

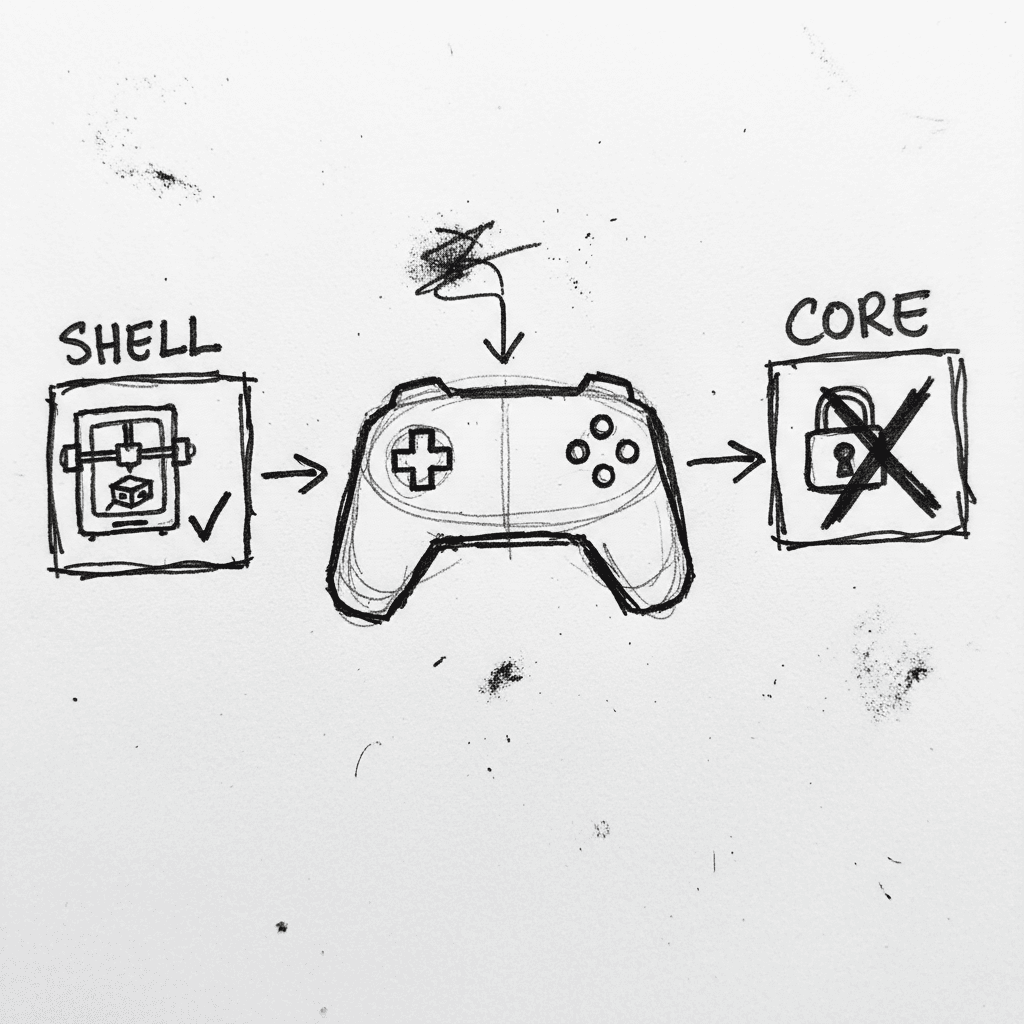

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.