Claude Opus 4.6 Technical Specs and Agentic Benchmarks

The Pitch

Claude Opus 4.6 delivers a 1M token context window and 128K maximum output tokens as part of its February 5 release (Anthropic Release Notes). The model introduces specialized 'Adaptive Thinking' reasoning and a multi-agent feature called 'Agent Teams' designed for complex engineering workflows.

Under the Hood

The model currently leads the industry in agentic computer use with a 72.7% score on the OSWorld benchmark (Anthropic System Card). It has already proven useful in security work, identifying over 500 high-severity vulnerabilities in open-source libraries like Ghostscript (The Hacker News).

Anthropic has integrated 'Agent Teams' into Claude Code version 2.1.32, though the feature only works when the CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS=1 flag is set (GitHub). While the reasoning is sharp, the model suffers from instruction regression: it occasionally ignores mid-conversation amendments and reverts to its original plan (HN Comment).
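For anyone wiring the flag into a script, here is a minimal sketch of setting it for a child process. Only the flag name and its value of 1 come from the source above; the helper names `env_with_agent_teams` and `agent_teams_enabled` are hypothetical, and nothing here reflects how Claude Code itself reads the variable.

```python
import os

# Flag name and value are quoted from the article; the helpers are illustrative.
AGENT_TEAMS_FLAG = "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"


def env_with_agent_teams(base_env=None):
    """Return a copy of the environment with the experimental flag set to "1"."""
    env = dict(os.environ if base_env is None else base_env)
    env[AGENT_TEAMS_FLAG] = "1"
    return env


def agent_teams_enabled(env):
    """True only when the flag is present and set to exactly "1"."""
    return env.get(AGENT_TEAMS_FLAG) == "1"


if __name__ == "__main__":
    env = env_with_agent_teams()
    print(agent_teams_enabled(env))
```

The returned dictionary can be passed as the `env` argument of `subprocess.run` when launching the CLI. Copying the environment rather than mutating `os.environ` keeps the experimental flag scoped to that one child process.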

Performance in terminal-only environments remains secondary to the competition; GPT-5.3 Codex maintains a 77.3% score on Terminal-Bench 2.0, whereas Opus 4.6 sits at 65.4% (Terminal-Bench 2.0). Windows users should also note that version 2.1.32 still suffers from persistent Chrome connection errors (Reddit r/ClaudeCode).
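To put the Terminal-Bench 2.0 numbers side by side, the short sketch below works out the gap in percentage points and in relative terms. The two scores are the ones quoted above; the function names are hypothetical, and rounding to one decimal place is an assumption made to match the precision of the published figures.

```python
# Scores as quoted in the article (Terminal-Bench 2.0, percent).
CODEX_SCORE = 77.3
OPUS_SCORE = 65.4


def gap_points(leader, trailer):
    """Absolute gap in percentage points, rounded to one decimal place."""
    return round(leader - trailer, 1)


def relative_gap_percent(leader, trailer):
    """How far the leader is ahead, as a percentage of the trailer's score."""
    return round((leader - trailer) / trailer * 100, 1)


if __name__ == "__main__":
    print(gap_points(CODEX_SCORE, OPUS_SCORE))            # 11.9
    print(relative_gap_percent(CODEX_SCORE, OPUS_SCORE))  # 18.2
```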

Anthropic's usage caps are currently so tight you'd think they were paying for the compute in physical gold bars, with some users throttled after only two complex queries (HN Comment). We don't yet know when 'Agent Teams' will move to a stable release, or how calls exceeding the 200,000-token threshold will be priced.
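Until that pricing is known, one cautious option is to flag oversized requests before they are sent. The sketch below is one way to do that, under stated assumptions: the 200,000 threshold and the 1M window come from the figures above, "1M" is read as exactly 1,000,000 tokens, the token count is supplied by the caller, and `classify_request` and its labels are hypothetical names rather than part of any Anthropic API.

```python
# Threshold quoted in the article; pricing above it is unknown.
LONG_CONTEXT_THRESHOLD = 200_000
# Assumption: the article's "1M" context window read as exactly 1,000,000 tokens.
CONTEXT_WINDOW = 1_000_000


def classify_request(input_tokens):
    """Label a request by input size so callers can gate oversized calls."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    if input_tokens > CONTEXT_WINDOW:
        return "exceeds_context_window"
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        return "above_threshold_pricing_unknown"
    return "within_threshold"


if __name__ == "__main__":
    for tokens in (50_000, 200_000, 200_001, 1_500_000):
        print(tokens, classify_request(tokens))
```

A request of exactly 200,000 tokens is treated as within the threshold, since the text above speaks of calls "exceeding" it; adjust the comparison if the actual boundary turns out to be inclusive.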

Marcus's Take

Claude Opus 4.6 is an excellent choice for deep security auditing, but it is too temperamental for autonomous production pipelines. The instruction regression issues mean you cannot yet trust it to manage long-running refactors without constant oversight. Use it for specialized debugging tasks, but keep your primary CI/CD logic on more stable models until the usage limits and plan-following accuracy improve.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

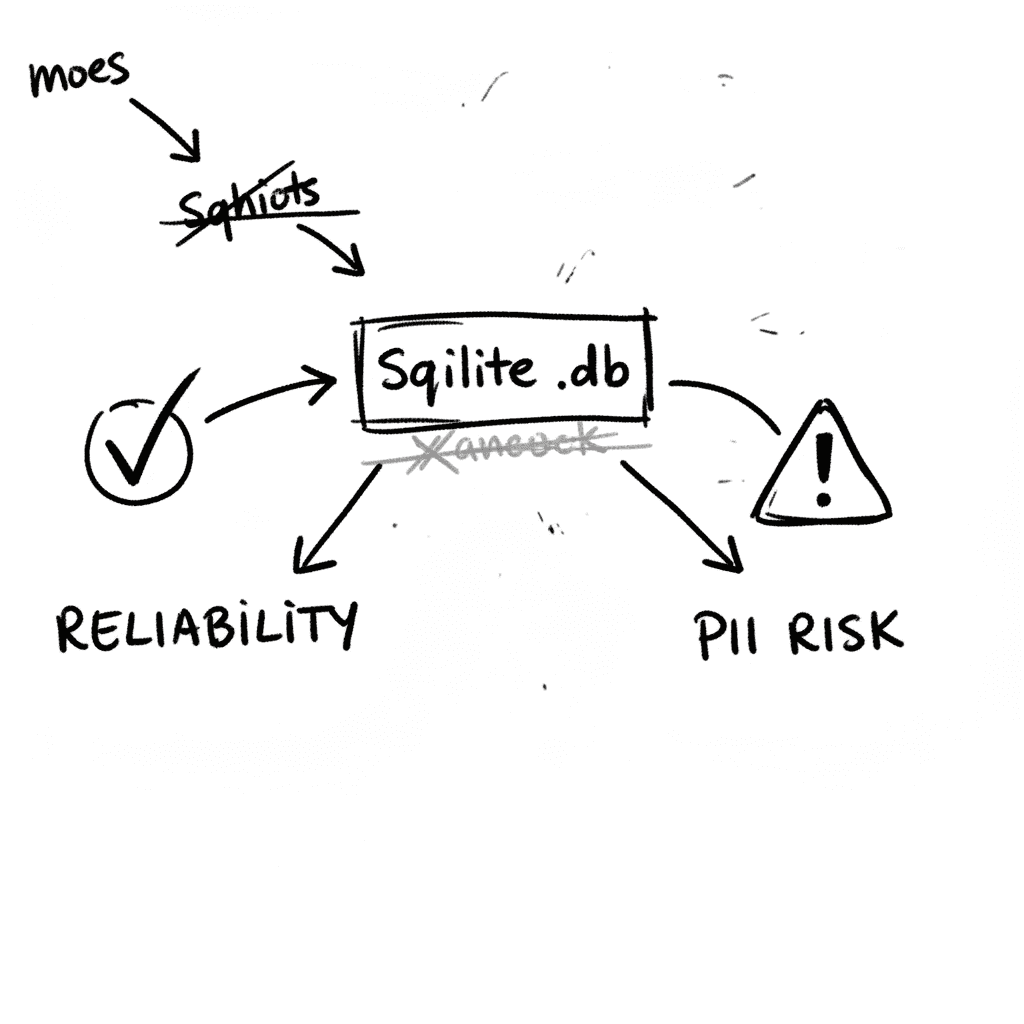

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

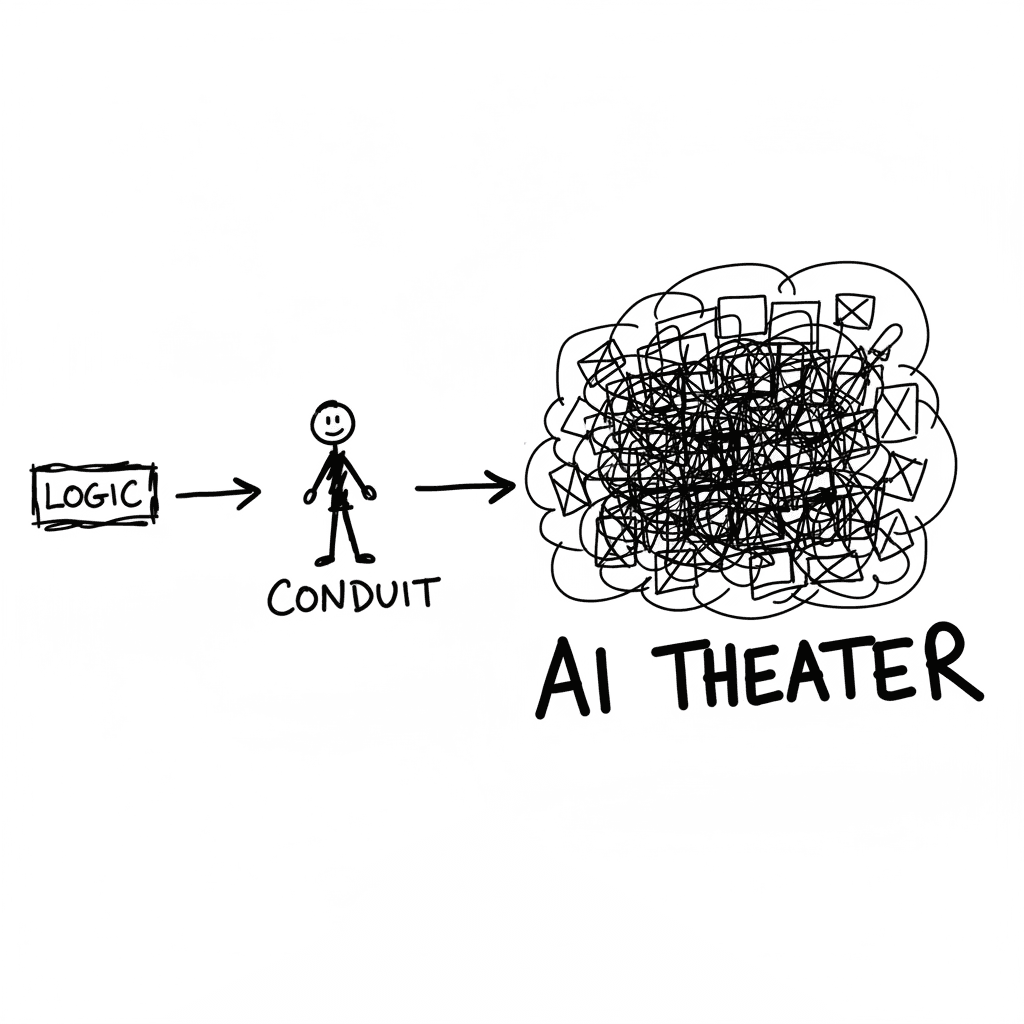

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

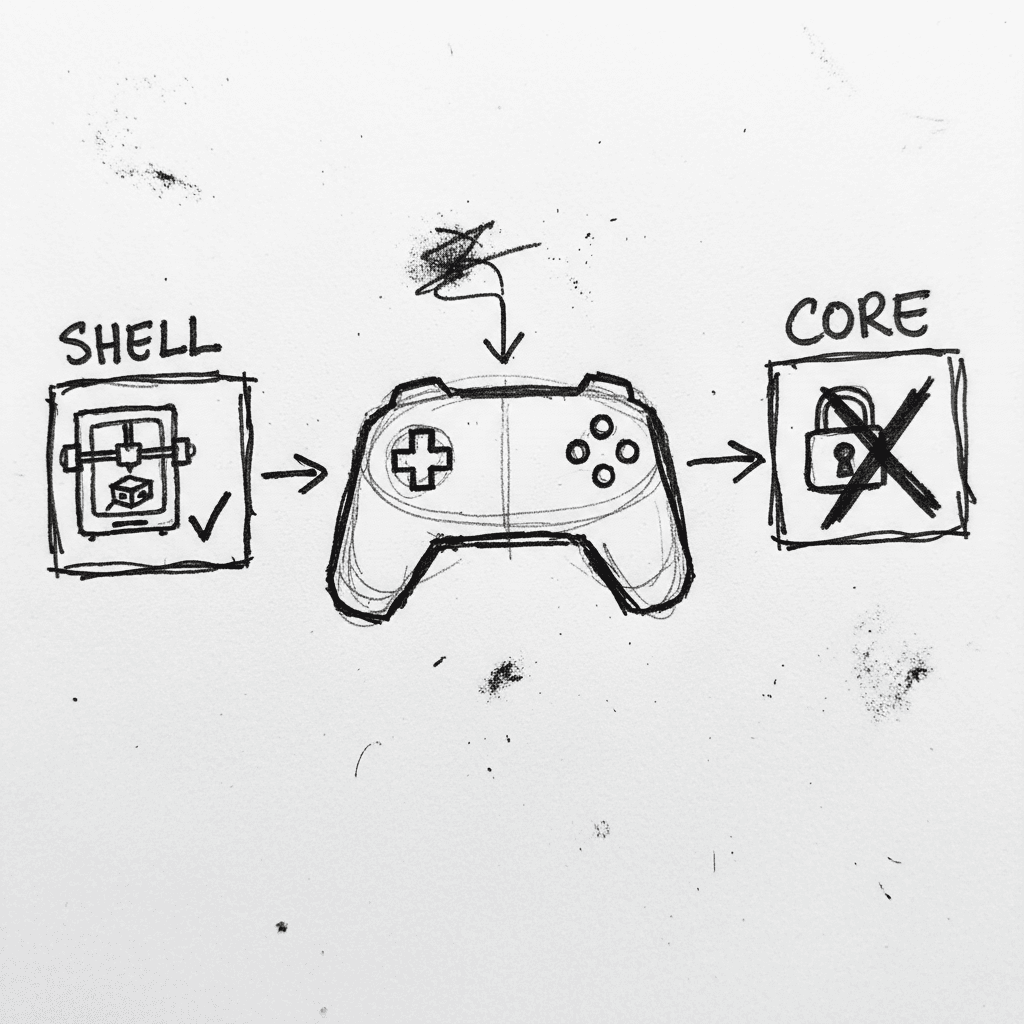

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.