Gemini 3.1 Pro: Reasoning Gains at the Expense of Developer Experience


The Pitch

Google has released Gemini 3.1 Pro, introducing a three-tier thinking architecture designed to balance reasoning depth against inference costs. Gemini currently has 156 verified users from organizations including Samsung and Discord. This update attempts to match the coding performance of Claude 4.5 Opus while maintaining a significantly lower price point.

Under the Hood

The most significant technical update is the jump in reasoning performance. Gemini 3.1 Pro achieved a 77.1% score on the ARC-AGI-2 benchmark, moving up from the 31.1% recorded by the 3.0 version (Source: DeepMind Technical Blog). In coding tasks, the model narrowed the gap with Claude 4.5 Opus, reaching 80.6% on SWE-Bench (Source: Artificial Analysis).

The pricing remains competitive for teams managing tight infrastructure budgets. At $2 per million input tokens and $12 per million output tokens for contexts under 200k tokens, it is approximately half the price of Claude 4.5 Opus (Source: AI News / Google Cloud Docs). Additionally, the maximum output token limit has been raised to 65,536, and the file upload limit in Vertex AI is now 100MB (Source: Google Model Card).
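To make the quoted rates concrete, here is a minimal sketch of a per-request cost estimate. It uses only the two prices cited above. The function name `estimate_cost` and the example token counts are illustrative assumptions, not part of any Google SDK, and the sketch deliberately refuses contexts of 200k tokens or more because the article does not quote a rate for them.

```python
# Rates quoted in the article for contexts under 200k tokens (USD per million tokens).
INPUT_PRICE_PER_M = 2.00
OUTPUT_PRICE_PER_M = 12.00
CONTEXT_THRESHOLD = 200_000  # the quoted rates apply only below this context size


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request under the quoted rates.

    Hypothetical helper for budgeting; not an official pricing calculator.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if input_tokens >= CONTEXT_THRESHOLD:
        # The article gives no price for larger contexts, so do not guess one.
        raise ValueError("quoted rates only cover contexts under 200k tokens")
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


if __name__ == "__main__":
    # Illustrative request: 50,000 input tokens and 4,000 output tokens.
    print(f"${estimate_cost(50_000, 4_000):.3f}")
```

At these rates each output token costs six times as much as an input token, so output volume is the figure to watch when budgeting.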

However, the "High" thinking mode, branded as Deep Think Mini, introduces a verbosity problem. The model generates a high volume of internal reasoning tokens that can lead to unexpected billing spikes (Source: Artificial Analysis). Gemini 3.1 Pro has the gift of the gab, but unfortunately, you're the one paying for the long-windedness.
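A rough sketch of why this matters for the bill, under one loudly stated assumption: that internal reasoning tokens are charged at the same $12 per million output rate as visible tokens. The article does not spell out the billing rule, and the token counts below are invented for illustration rather than measured.

```python
# Output rate quoted in the article (USD per million tokens, contexts under 200k).
OUTPUT_PRICE_PER_M = 12.00


def output_cost(visible_tokens: int, reasoning_tokens: int = 0) -> float:
    """USD cost of the output side of one request.

    ASSUMPTION: reasoning tokens are billed at the output rate.
    """
    return (visible_tokens + reasoning_tokens) * OUTPUT_PRICE_PER_M / 1_000_000


def reasoning_multiplier(visible_tokens: int, reasoning_tokens: int) -> float:
    """How many times larger the output bill is once reasoning tokens are counted."""
    if visible_tokens <= 0:
        raise ValueError("visible_tokens must be positive")
    return (visible_tokens + reasoning_tokens) / visible_tokens


if __name__ == "__main__":
    # Hypothetical request: a 1,000-token answer preceded by 9,000 reasoning tokens.
    visible, reasoning = 1_000, 9_000
    print(f"answer only:    ${output_cost(visible):.3f}")
    print(f"with reasoning: ${output_cost(visible, reasoning):.3f}")
    print(f"multiplier:     {reasoning_multiplier(visible, reasoning):.1f}x")
```

Tracking this multiplier per request, from whatever token counts your provider reports, is one way to spot a spike before the invoice does.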

Integration stability remains a primary concern for engineering leads. Developers report frequent "endless loop" bugs in VS Code, where the model fails to complete workflows that Claude 4.5 handles reliably (Source: GitHub Issue #295707). Furthermore, the knowledge cutoff is stuck at January 2025, making the model less effective for referencing 2026 technical documentation (Source: LLM-Stats).

We don't know yet when the non-preview API endpoints will reach General Availability. We also lack detailed data on how token consumption rates vary between the "Medium" and "High" thinking modes when processing long-context prompts.

Marcus's Take

Gemini 3.1 Pro is a calculated trade-off between intelligence and stability. While the reasoning gains are documented, the IDE integration is too brittle for mission-critical development, and the 2025 knowledge cutoff is a genuine hindrance in 2026. Use it as a secondary model for cost-sensitive batch processing or high-volume reasoning tasks. For your primary production code and active IDE workflows, stick with Claude 4.5 Opus until Google fixes the stability loops.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

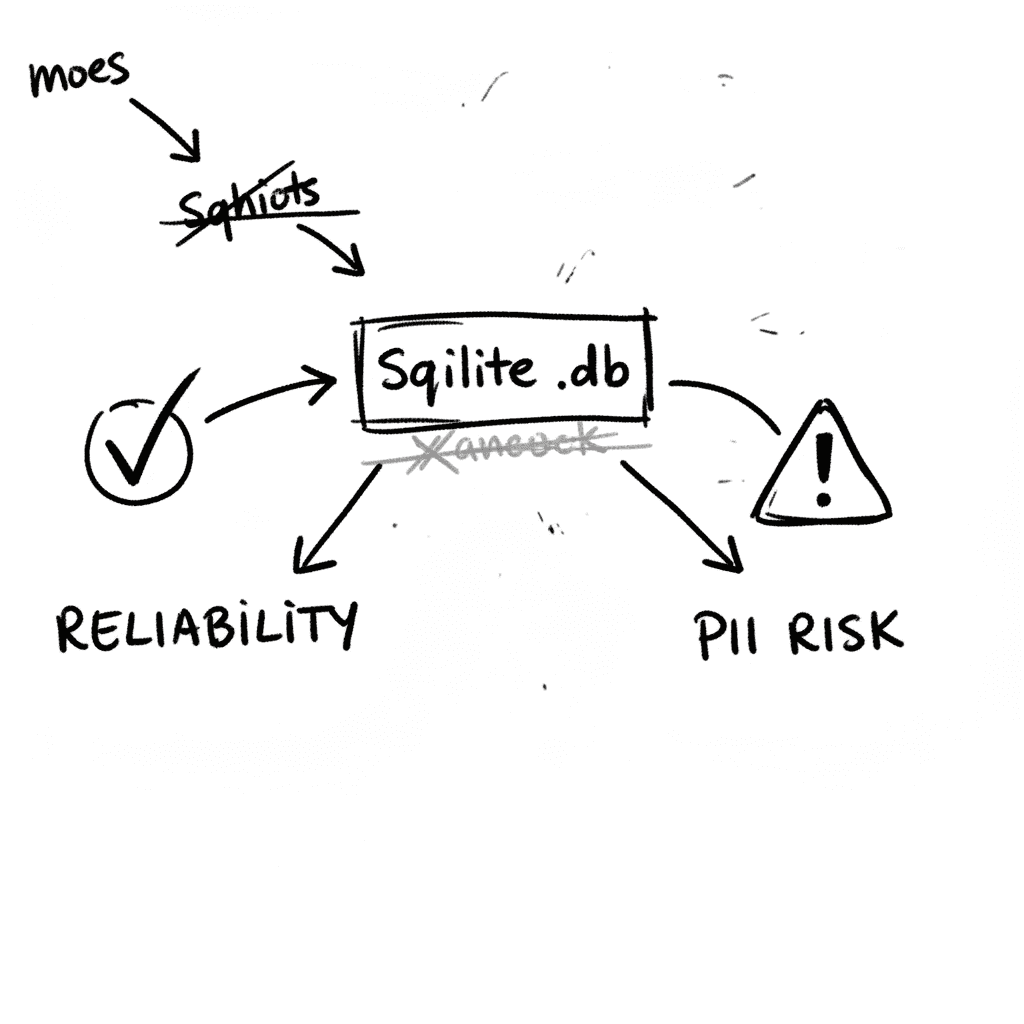

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

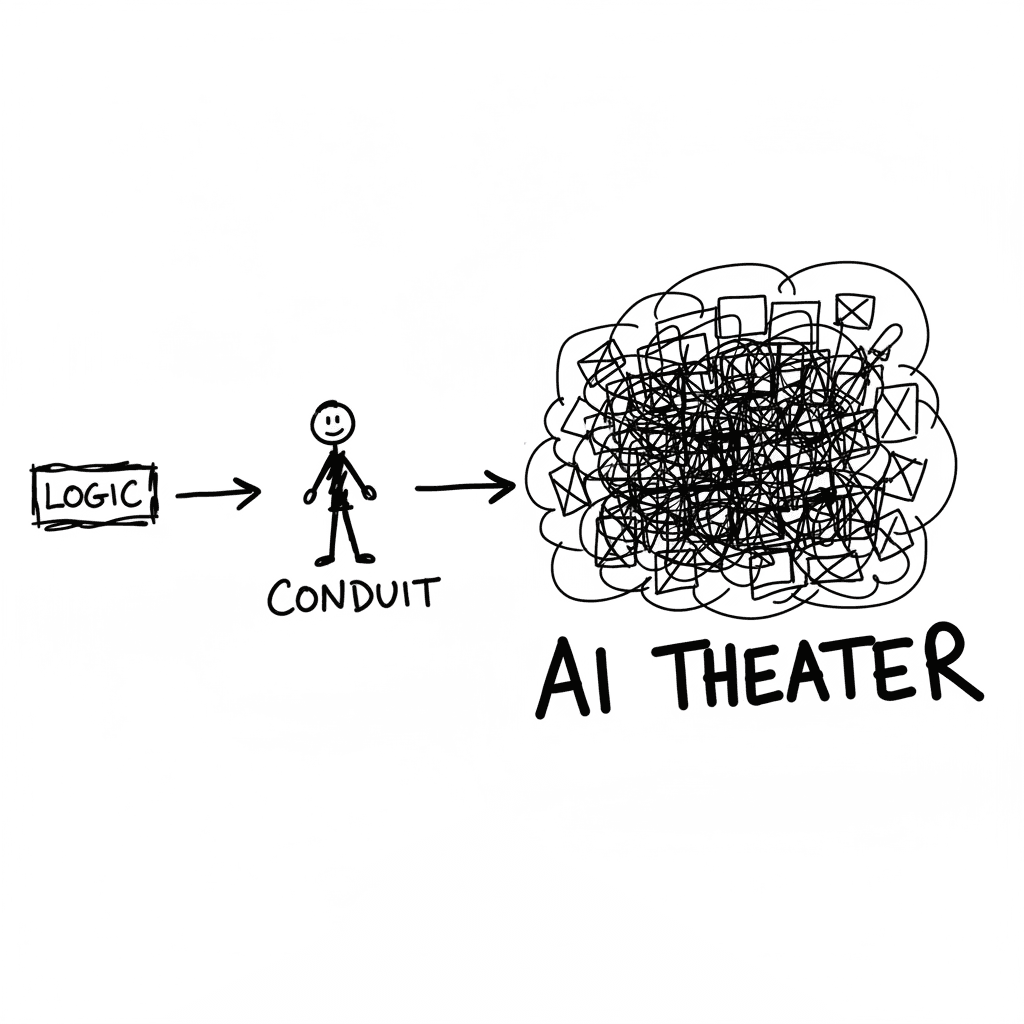

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

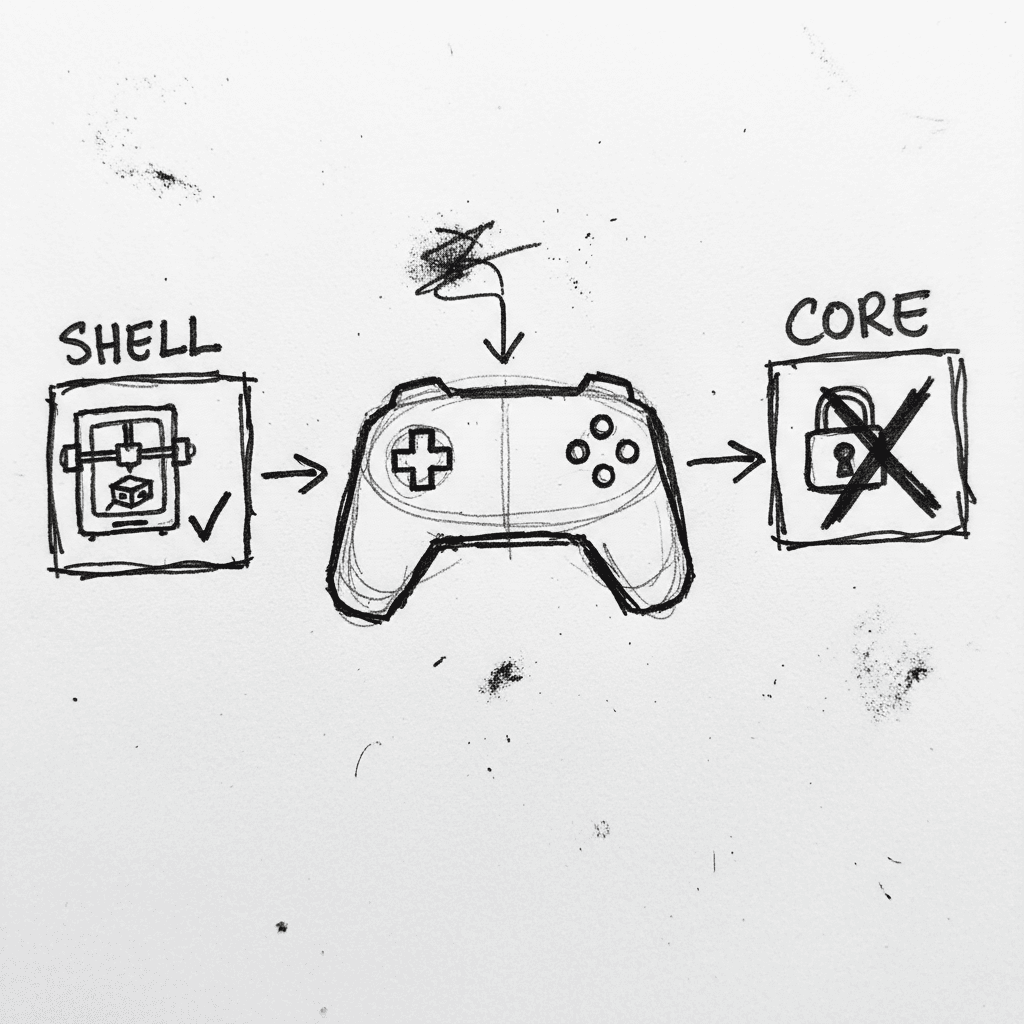

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.