GPT-5.3-Codex-Spark and the Cerebras WSE-3 Architecture

The Pitch

OpenAI released GPT-5.3-Codex-Spark on February 12, 2026, delivering over 1,000 tokens per second for real-time inference (OpenAI Release). The model is a specialized distillation of the GPT-5 line, optimized specifically for Codex CLI and IDE integration through a $10 billion partnership with Cerebras (ChosunBiz). It targets developers who prioritize low-latency feedback loops over deep architectural reasoning.
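To put the throughput figure in concrete terms, here is a back-of-the-envelope sketch. It assumes a steady decode rate and ignores network, queueing, and time-to-first-token. The completion sizes and the 100 tokens-per-second comparison rate are illustrative assumptions, not measurements from any cited source.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float = 1000.0) -> float:
    """Seconds needed to stream `output_tokens` at a steady decode rate.

    Ignores network latency, queueing, and time-to-first-token.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Hypothetical completion sizes, chosen only for illustration.
EXAMPLE_COMPLETIONS = [("one-line fix", 40), ("function body", 400), ("full file", 4000)]

for label, tokens in EXAMPLE_COMPLETIONS:
    fast = generation_seconds(tokens)          # the 1,000 tok/s figure quoted above
    slow = generation_seconds(tokens, 100.0)   # assumed slower comparison rate
    print(f"{label}: {fast:.2f} s at 1,000 tok/s vs {slow:.1f} s at 100 tok/s")
```

The practical gap only appears on larger completions: a short fix is near-instant at either rate, while a full-file rewrite is where the difference becomes noticeable.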

Under the Hood

The model runs on Cerebras Wafer Scale Engine 3 (WSE-3) chips, which house 4 trillion transistors on a single silicon wafer (The Register, OpenAI Blog). This hardware shift moves OpenAI away from total NVIDIA dependency for its specialized coding models. The model keeps a 128k context window, but it is tuned for rapid prototyping rather than complex backend engineering (Gadgets360).

Independent testing shows a notable drop in quality compared to the standard GPT-5.3 or Claude 4 Opus. Simon Willison's "Pelican Benchmark" identifies Spark as less capable for complex reasoning tasks. Users report a persistent "action bias," where the model ignores explicit constraints in favor of immediate code generation (Reddit r/codex).

A significant risk involves "Cyber Abuse Rerouting." When the system flags a query as potentially malicious, it silently redirects the request to a slower, less-capable model, causing massive latency spikes (GitHub Issue #11189). We do not know the exact parameter count of this distilled version, nor whether the rerouting system frequently flags legitimate enterprise security tools.
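Because the rerouting is described as silent, the only client-side signal is the latency itself. Below is a minimal sketch of one way to spot suspect requests from your own logs. It assumes you already record output token counts and wall-clock time per request. The `Sample` type, the `flag_possible_reroutes` helper, and the 25% threshold are hypothetical choices for illustration and are not part of any OpenAI or Codex tooling.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Sample:
    """One logged request (hypothetical shape; adapt to your own logging)."""
    request_id: str
    output_tokens: int
    elapsed_seconds: float

    def __post_init__(self) -> None:
        if self.elapsed_seconds <= 0:
            raise ValueError("elapsed_seconds must be positive")

    @property
    def tokens_per_second(self) -> float:
        return self.output_tokens / self.elapsed_seconds


def flag_possible_reroutes(samples: list[Sample], ratio: float = 0.25) -> list[Sample]:
    """Return samples whose throughput is below `ratio` times the median throughput.

    A flagged sample is only a candidate: slow networks or server load
    can produce the same pattern.
    """
    if not samples:
        return []
    baseline = median(s.tokens_per_second for s in samples)
    return [s for s in samples if s.tokens_per_second < ratio * baseline]


# Made-up log entries for illustration only.
samples = [
    Sample("a", 500, 0.5),
    Sample("b", 800, 0.8),
    Sample("c", 600, 0.55),
    Sample("d", 500, 6.0),  # far slower than the rest
]
flagged = flag_possible_reroutes(samples)
print([s.request_id for s in flagged])
```

Using the median as the baseline keeps a handful of slow outliers from dragging the reference rate down. This heuristic cannot confirm that a reroute happened; it only narrows down which prompts are worth inspecting for security-related wording.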

Marcus's Take

Spark is built for speed, not for depth. It is remarkably efficient at being wrong very quickly if the prompt requires more than a few lines of logic. Use it as a glorified autocomplete for boilerplate or CSS tweaks, but keep it away from your core business logic. For anything requiring structural integrity, stick to the full GPT-5.3 or Claude 4 Opus.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

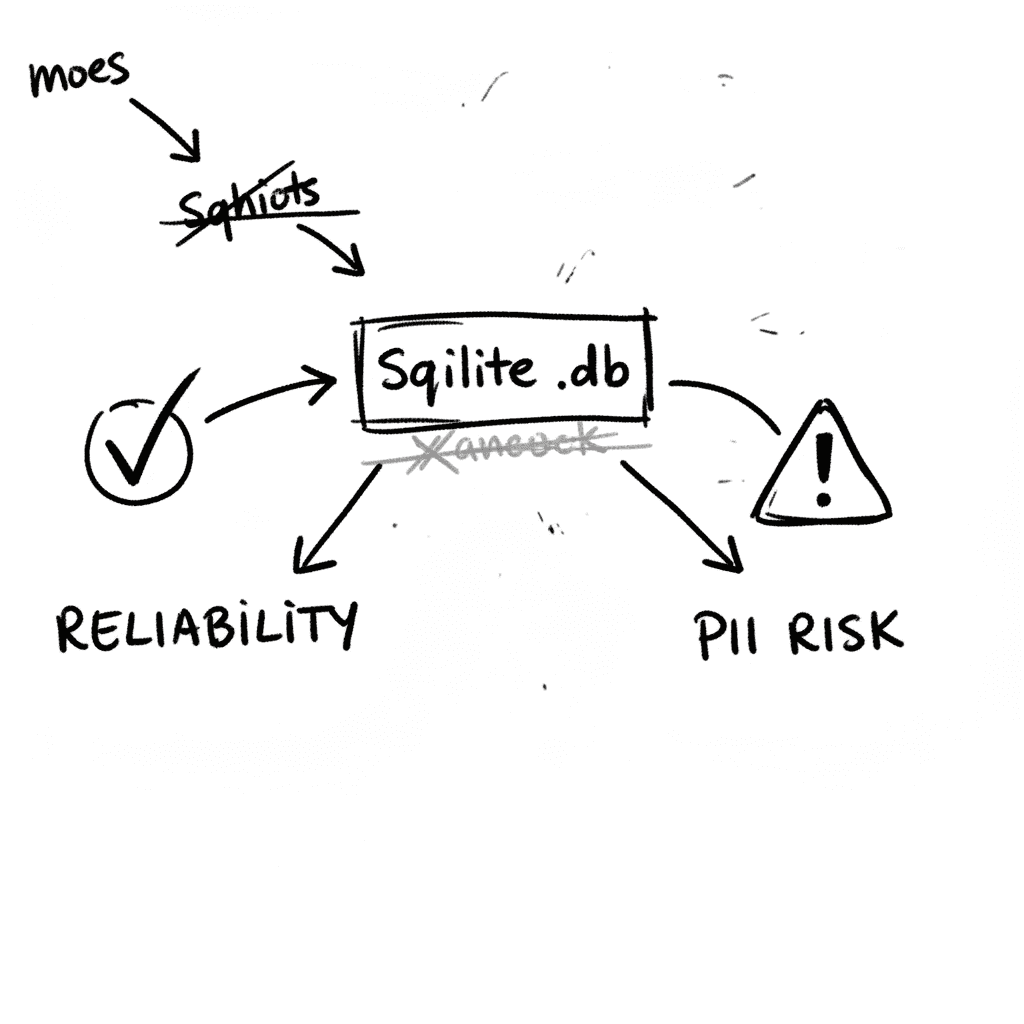

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

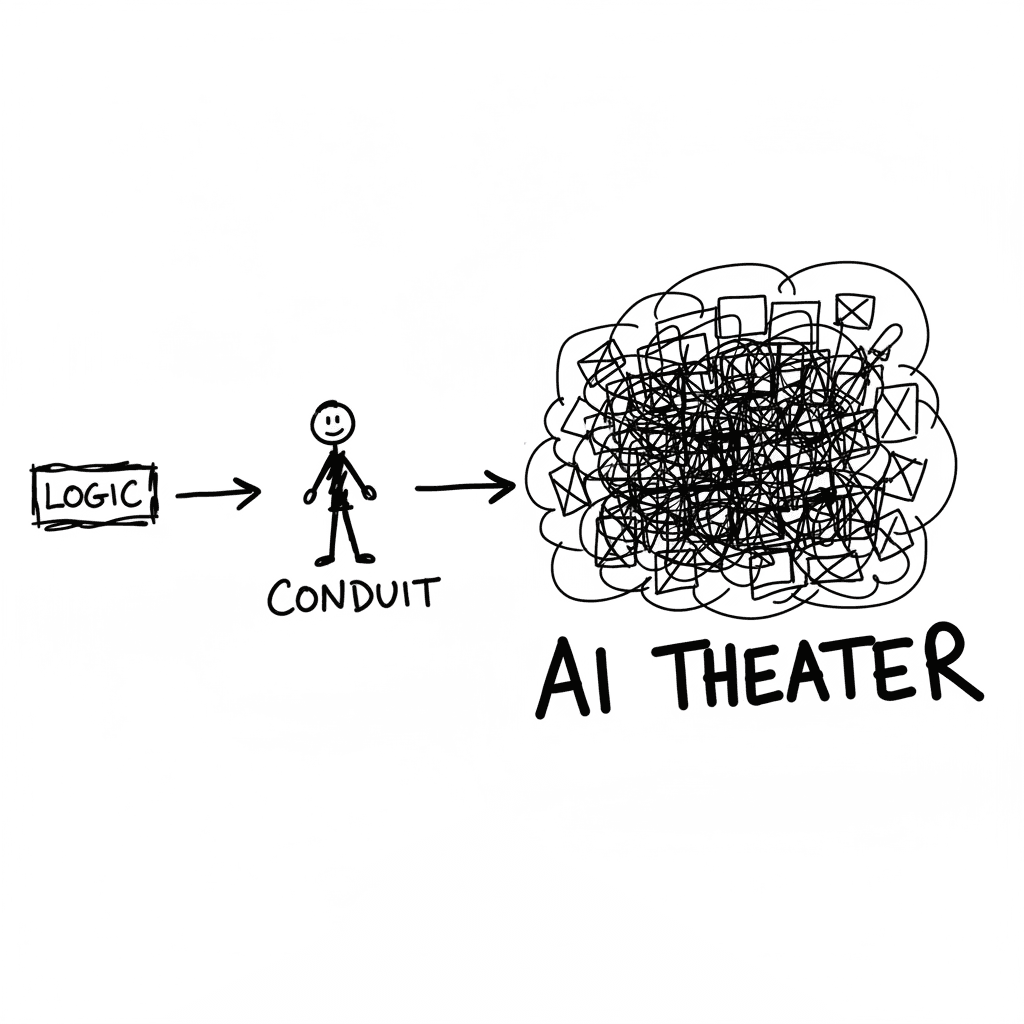

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

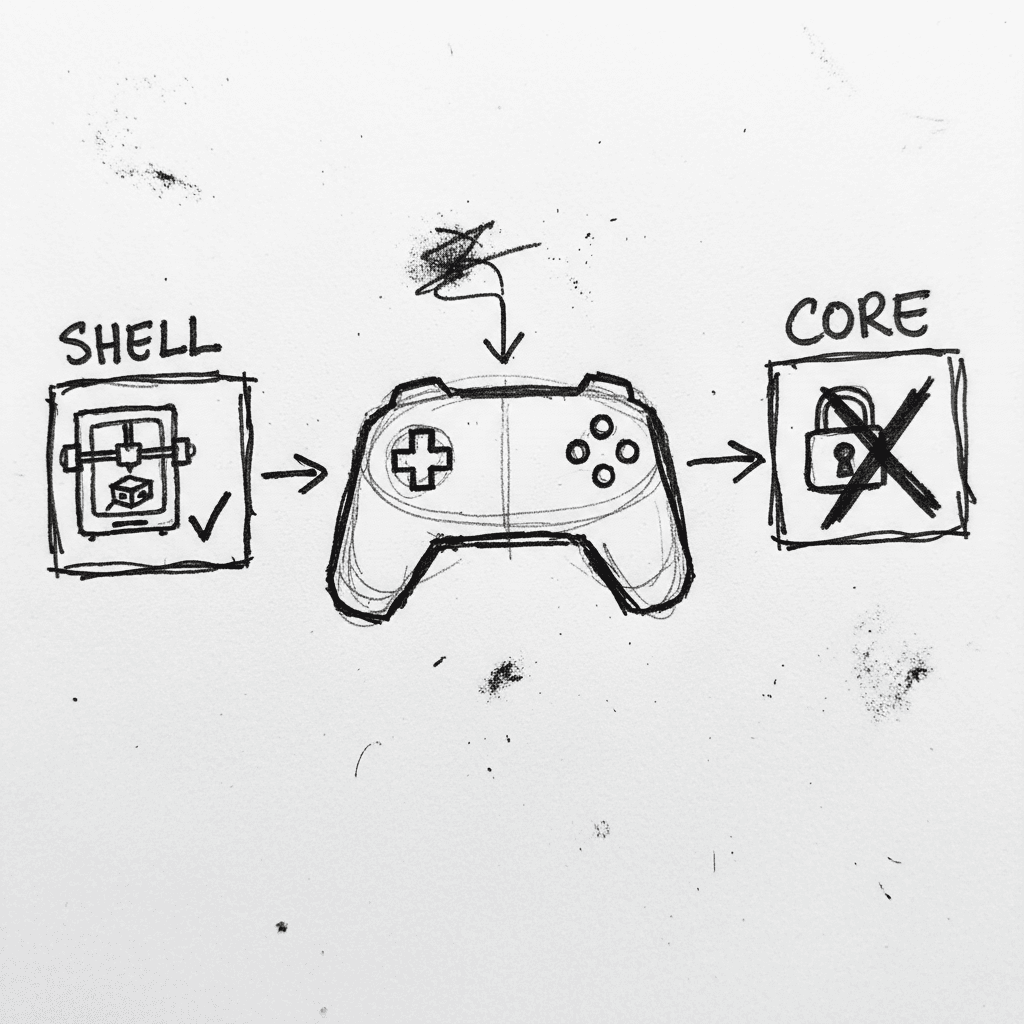

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.