Numerical Regression in Apple MLX on A18 Pro Silicon

The Pitch

Apple’s MLX framework is designed to optimise local inference by letting LLMs run directly on Apple silicon’s GPU and CPU with unified memory access. Although the iPhone 16 Pro Max is marketed as the definitive hardware for Apple Intelligence, developers are reporting severe output degradation on its A18 Pro chip. (Source: GitHub ml-explore)

Under the Hood

The A18 Pro chip produces "gibberish" output when running local LLM workloads via MLX, a flaw not seen on the older iPhone 15 Pro or the current iPhone 17. (Source: GitHub ml-explore)

Detailed debugging ties these numerical inconsistencies to half-precision floating-point (FP16) instability in how the silicon handles tensor operations. (Source: Aditya Karnam/GitConnected)
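To show why FP16 leaves so little headroom even on healthy hardware, here is a minimal sketch that emulates half-precision accumulation in plain Python. It does not reproduce the A18 Pro fault. The helper names `to_fp16` and `fp16_sum` are illustrative assumptions, not part of MLX.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def fp16_sum(values):
    """Accumulate in half precision, rounding after every addition."""
    acc = 0.0
    for v in values:
        acc = to_fp16(acc + to_fp16(v))
    return acc


# 10,000 small addends, as in a long reduction inside a tensor op.
values = [0.01] * 10_000

exact = sum(values)      # double-precision reference, very close to 100
half = fp16_sum(values)  # half-precision accumulator

print(f"float64 sum: {exact:.4f}")
print(f"float16 sum: {half:.4f}")
```

The half-precision total stalls at 32.0. From there the gap between neighbouring FP16 values is 0.03125, so adding 0.01 rounds straight back to the same number. Any extra rounding error in the hardware path stacks on top of this baseline.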

Quantised 4-bit (Q4_K_M) models produce slightly more consistent output, but they do not fully work around the underlying hardware issue. (Source: Aditya Karnam/GitConnected)
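One plausible reading of why quantisation only helps partially: the stored weights are small integers, which every device reads back exactly, but dequantisation and the matrix multiply still run in floating point. The sketch below uses a deliberately simplified symmetric 4-bit scheme to illustrate that split. It is an assumption-laden toy, not the actual Q4_K_M block layout, and the function names are hypothetical.

```python
def quantize_q4(block):
    """Toy symmetric 4-bit quantisation: integers in [-8, 7] plus one float scale."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in block]
    return q, scale


def dequantize_q4(q, scale):
    """Rebuild approximate weights; this step is floating-point arithmetic."""
    return [qi * scale for qi in q]


# Made-up weights for illustration only.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]

q, scale = quantize_q4(weights)
restored = dequantize_q4(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit codes:", q)
print("max round-trip error:", max_err)
```

The integer codes in `q` are identical on any chip. The multiply by `scale`, and everything downstream of it, goes through the same floating-point units as an unquantised model.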

Related regressions have appeared in CoreML tensor layouts and pointer arithmetic since the iOS 26.1 Beta. (Source: Apple Developer Forums #8007)

Taken together, these reports suggest a significant mismatch between the code the Metal compiler generates and the A18 Pro’s specific NPU/GPU architecture.

The issue reportedly persists across model architectures beyond LLMs, including Stable Diffusion UNet layers. (Source: Rafael Costa Blog)

We do not yet know whether this is a fixable kernel bug in iOS 26.x or a physical hardware defect that would require a recall.

Apple has not issued an official statement regarding the percentage of affected A18 Pro units.

While some users on Hacker News claim it affects only a minority of devices, the lack of a reliable fix is stalling local AI deployment.
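With no per-device fix available, one defensive pattern is a start-up canary: run a small, fixed computation on the device, compare the result against reference values recorded on known-good hardware, and fall back to server-side inference on a mismatch. The sketch below shows only the comparison logic. The function names, the 1% tolerance, and the sample values are all illustrative assumptions, not taken from the reports above.

```python
import math


def max_relative_error(reference, observed, eps=1e-6):
    """Worst-case relative deviation; infinity if any observed value is NaN or inf."""
    if len(reference) != len(observed):
        raise ValueError("reference and observed must have the same length")
    worst = 0.0
    for r, o in zip(reference, observed):
        if not math.isfinite(o):
            return math.inf
        worst = max(worst, abs(r - o) / max(abs(r), eps))
    return worst


def device_passes_canary(reference, observed, tolerance=1e-2):
    """True when every observed value is within `tolerance` of its reference."""
    return max_relative_error(reference, observed) <= tolerance


# Hypothetical values: reference logits from a known-good device,
# plus two made-up on-device results.
reference_logits = [2.31, -0.87, 0.05, 4.12]
healthy_device = [2.3105, -0.8702, 0.0500, 4.1190]
corrupted_device = [23.1, -0.87, float("nan"), 0.41]

print("healthy passes:", device_passes_canary(reference_logits, healthy_device))
print("corrupted passes:", device_passes_canary(reference_logits, corrupted_device))
```

A relative threshold is used rather than exact equality because legitimate FP16 results differ slightly between chips. The tolerance would need tuning per model.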

Marcus's Take

The A18 Pro is a liability for any backend engineer looking to move LLM inference to the edge. If your application handles medical or financial data where "order of magnitude" errors equate to catastrophic failure, avoid this device entirely.

The fact that the iPhone 17 and the M4 Max remain unaffected suggests the A18 Pro in the 16 Pro Max is a silicon outlier with a broken numerical pipeline.

Move your production targets to the iPhone 17 or M-series iPads until Apple admits whether this requires a firmware throttle or a replacement programme.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

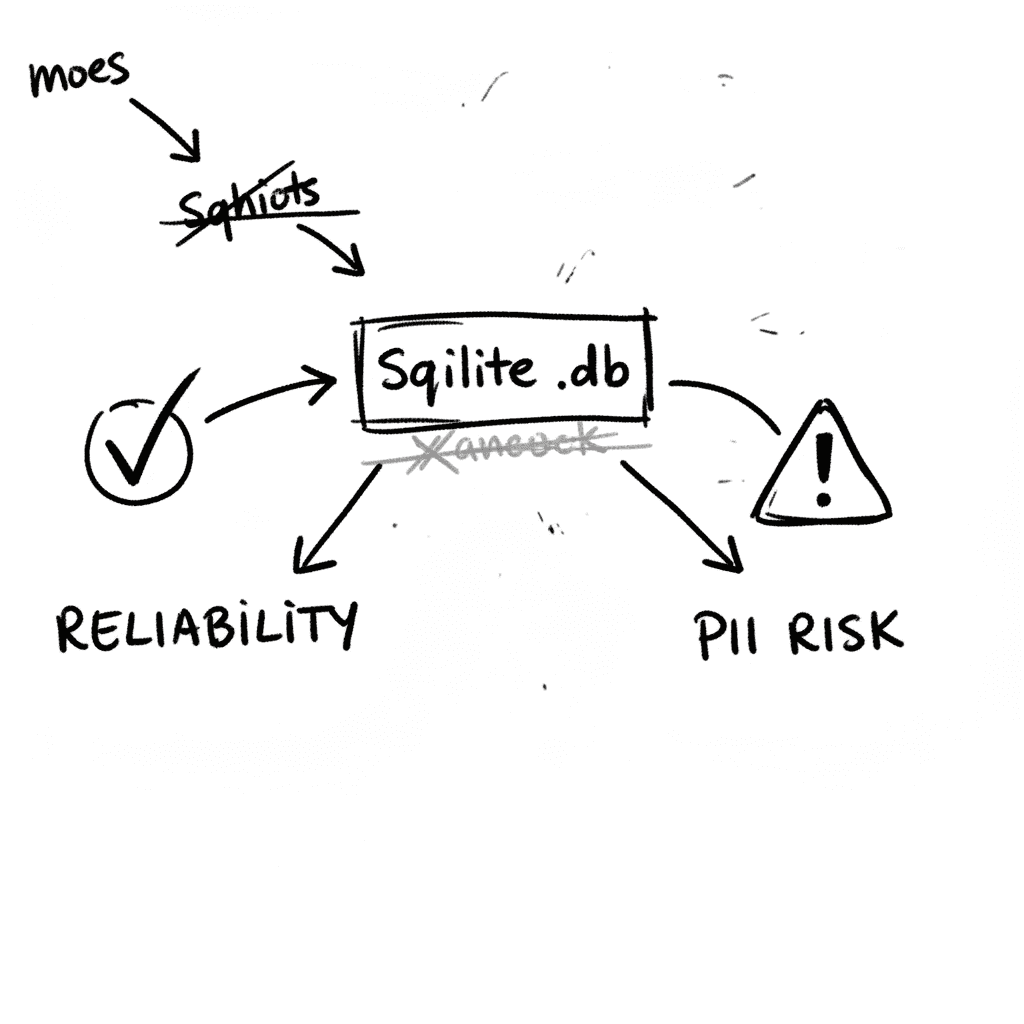

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

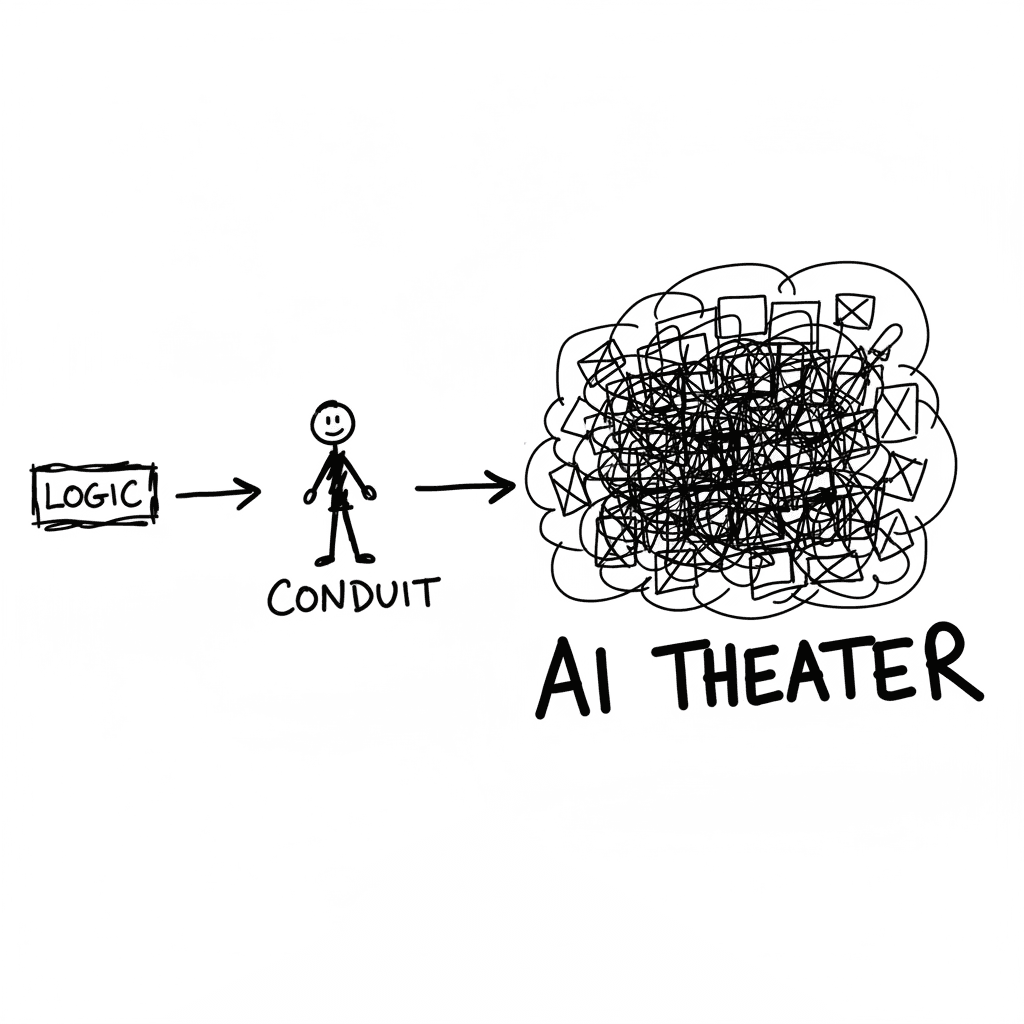

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

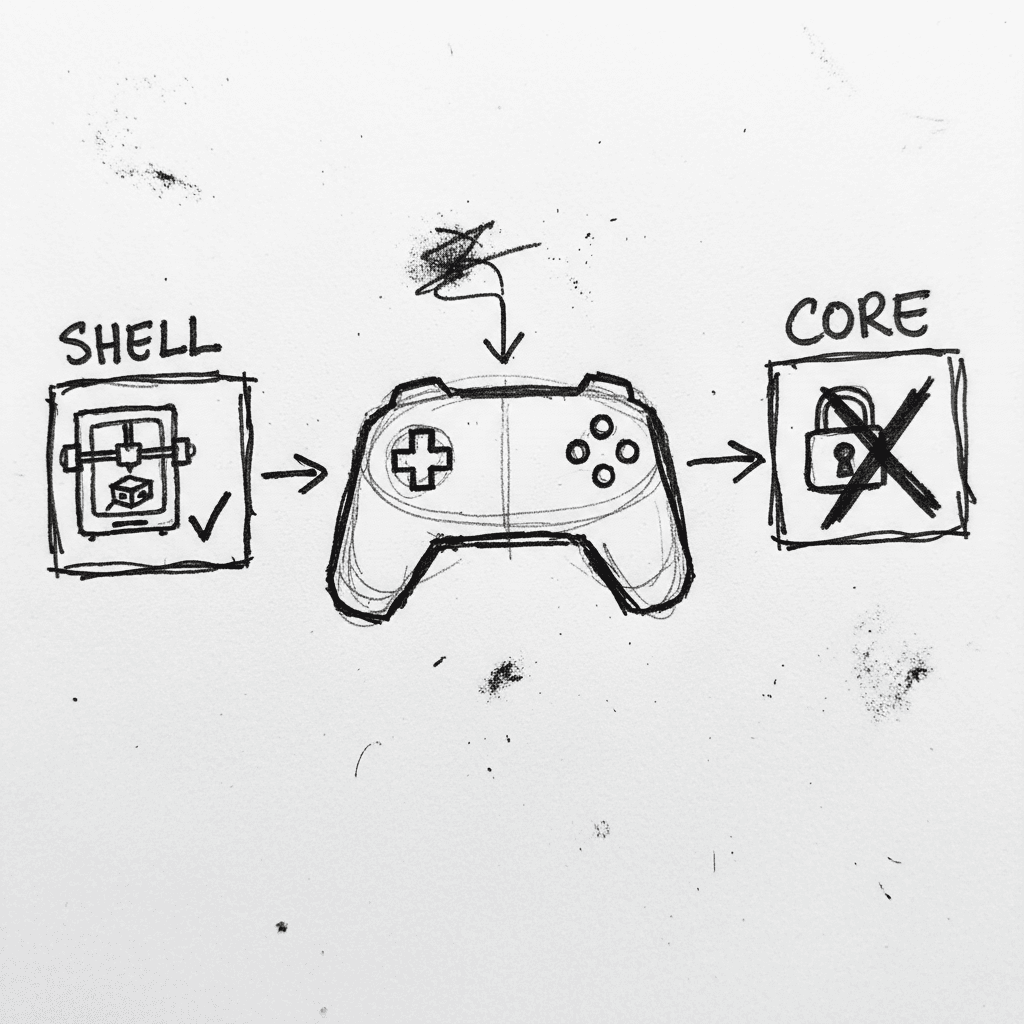

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.