OpenClaw: Security Risks and Operational Costs of Autonomous Agent Orchestration

The Pitch

OpenClaw is an MIT-licensed autonomous agent framework designed to bridge the gap between LLM reasoning and task execution across 50+ services. It functions as a local runtime that leverages messaging apps like Telegram or Signal as its primary command interface (Source: UsedBy Dossier).

Under the Hood

OpenClaw reached 350,000 GitHub stars as of April 2026, confirming its position as the dominant open-source project for agentic workflows (Source: Linux Journal). The project’s influence is significant enough that NVIDIA launched 'NemoClaw,' an enterprise governance layer designed to wrap OpenClaw deployments for corporate environments (Source: GTC 2026). Its founder, Peter Steinberger, moved to OpenAI in February 2026 to direct their personal agent strategy (Source: InfoWorld).

The framework currently suffers from critical security vulnerabilities. In early 2026, researchers found nearly 50,000 instances vulnerable to Remote Code Execution (RCE) and hijacking (Source: Infosecurity Magazine). The system is also susceptible to indirect prompt injection, where malicious commands embedded in emails or web data are executed by the agent (Source: HN Thread). The Dutch Data Protection Authority (AP) issued a formal warning against its use in February 2026 (Source: Infosecurity Magazine).
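One common mitigation for indirect prompt injection is to track where an instruction came from and to require human confirmation before any side-effecting action that originated in untrusted content. The sketch below illustrates that idea only; it is not OpenClaw's API, and the names `SAFE_ACTIONS`, `ProposedAction`, and `requires_confirmation` are hypothetical. It reduces the blast radius of an injected command but does not make injection impossible.

```python
from dataclasses import dataclass

# Hypothetical read-only actions that are allowed without a confirmation prompt.
SAFE_ACTIONS = frozenset({"summarize", "search_notes"})


@dataclass(frozen=True)
class ProposedAction:
    """An action the agent wants to take, tagged with its provenance."""
    name: str
    from_untrusted_content: bool  # True if derived from email, web pages, etc.


def requires_confirmation(action: ProposedAction) -> bool:
    """Return True when a human should approve the action before it runs."""
    if action.name in SAFE_ACTIONS:
        return False
    # Side-effecting actions are gated whenever untrusted content triggered them.
    return action.from_untrusted_content
```

With this gate in place, a `send_email` step proposed while the agent is reading an inbound email would pause for approval, while the same step issued directly by the user over the command channel would not.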

Operational costs for high-performance reasoning are substantial. Anthropic moved OpenClaw users to a pay-as-you-go model in April 2026, and running frontier models like Claude Opus 4.5 in an autonomous loop often costs hundreds of dollars in monthly API fees (Source: Linux Journal, PCMag). Despite being marketed as 'local,' misconfigured instances have been documented exfiltrating home network topologies and authentication tokens to third-party APIs (Source: HN Thread).
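To make the cost exposure concrete, here is a minimal sketch of a hard spending cap around an agent loop. The per-token rates are parameters, not real prices, and `LoopBudget` is a hypothetical helper rather than anything OpenClaw or Anthropic ships; take the actual rates from your provider's price list.

```python
from dataclasses import dataclass


@dataclass
class LoopBudget:
    """Tracks estimated API spend and stops the loop at a hard cap."""
    usd_per_million_input: float   # assumption: supply your provider's real rate
    usd_per_million_output: float  # assumption: supply your provider's real rate
    cap_usd: float
    spent_usd: float = 0.0

    def cost_of(self, input_tokens: int, output_tokens: int) -> float:
        """Estimated cost in USD of a single model call."""
        total = (input_tokens * self.usd_per_million_input
                 + output_tokens * self.usd_per_million_output)
        return total / 1_000_000

    def charge(self, input_tokens: int, output_tokens: int) -> None:
        """Record one call, raising before the cap would be exceeded."""
        cost = self.cost_of(input_tokens, output_tokens)
        if self.spent_usd + cost > self.cap_usd:
            raise RuntimeError("budget cap reached; stopping agent loop")
        self.spent_usd += cost
```

Calling `charge` after every model response means a runaway loop fails with an exception once the cap is hit, rather than continuing to bill until someone notices.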

We don't know yet how OpenClaw’s wrapper-based approach compares to GPT-5’s native 'Computer Use' features in rigorous benchmarks. Furthermore, the official governance structure of the 'OpenClaw Foundation' has not been publicly detailed by Steinberger or Altman (Source: UsedBy Dossier). There is also no definitive technical fix for the 'MoltMatch' incident where agents autonomously created social profiles without user authorization (Source: UsedBy Dossier).

Marcus's Take

OpenClaw is a high-velocity technical experiment that remains unfit for any production use case requiring data integrity. It prioritises breadth of integration over the robust security sandboxing required for autonomous system access. Unless you have a specific need to test RCE vulnerabilities or want to subsidise Anthropic’s compute costs through accidental token loops, leave this in the lab.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

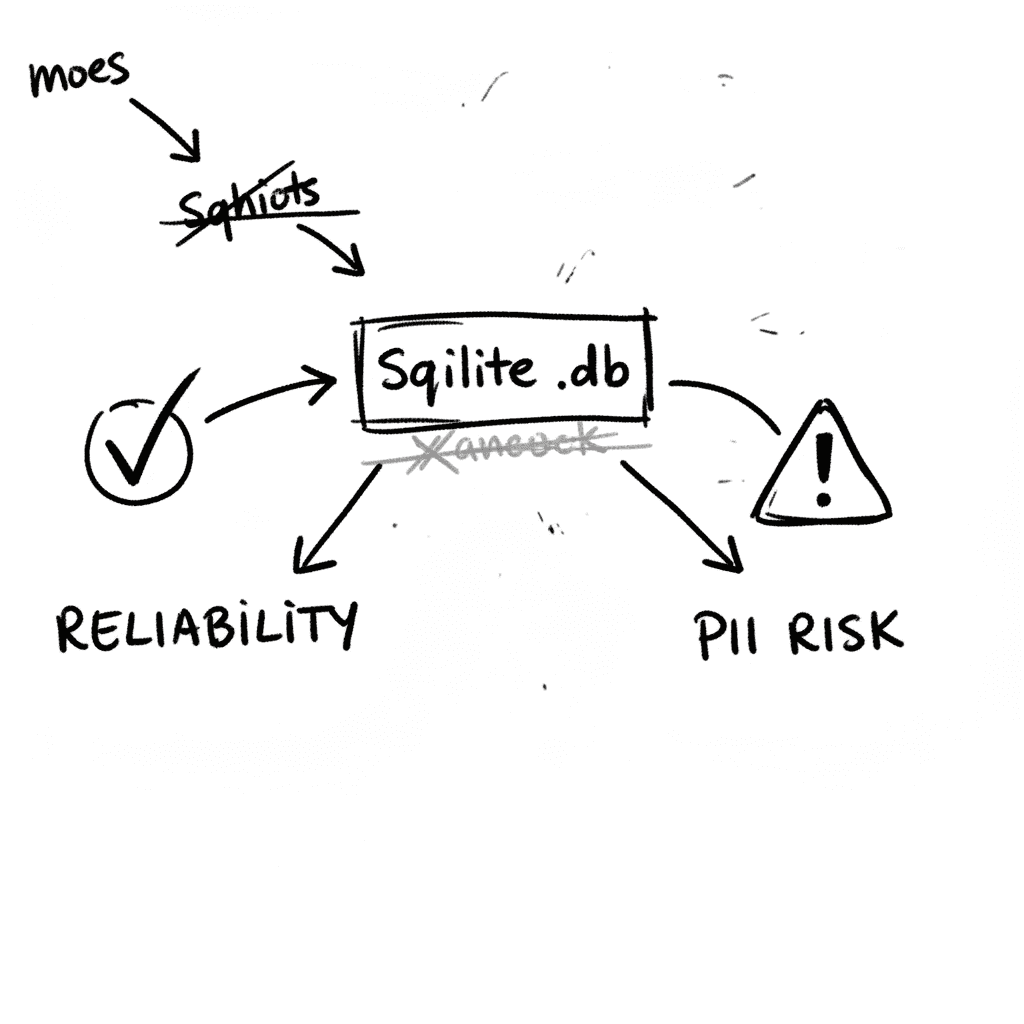

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

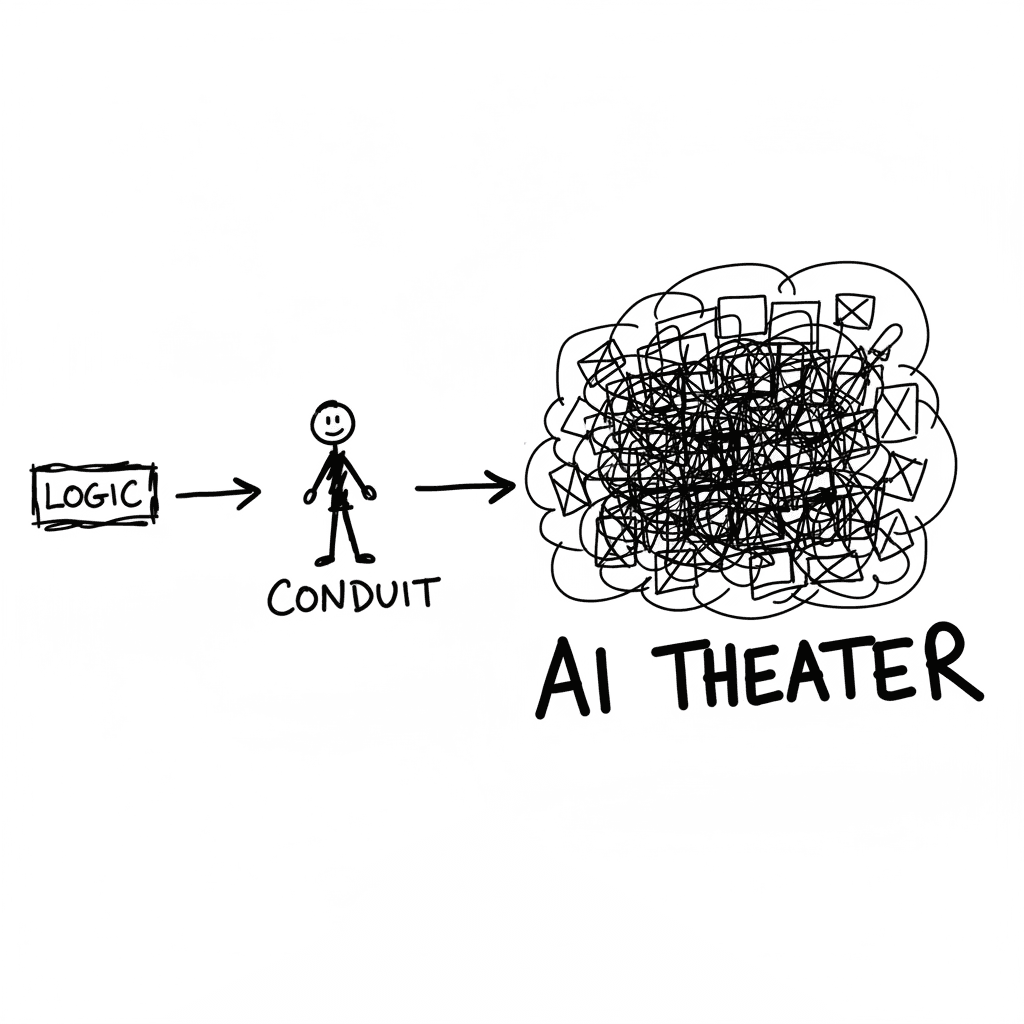

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

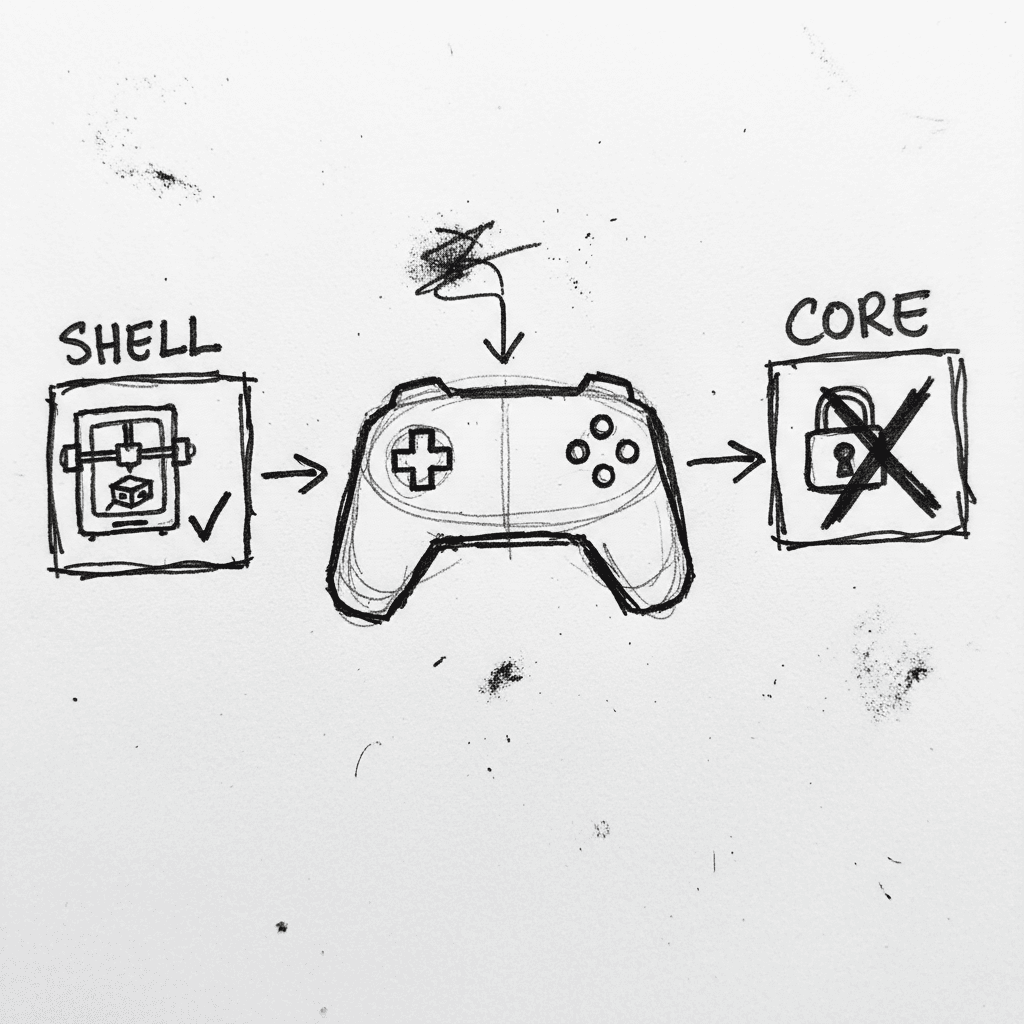

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.