Technical Analysis of OpenAI gpt-image-2 Rendering Engine

The Pitch

OpenAI launched ChatGPT Images 2.0 (gpt-image-2) on April 21, replacing the gpt-image-1.5 model with a focus on reasoning-driven spatial layouts. The system shifts away from simple pixel prediction toward a "Thinking" mode that handles complex infographics, UI mockups, and multilingual text with high precision. (Source: The New Stack, April 2026)

Under the Hood

The core of this update is the "Thinking" mode, which lets the model reason through a layout and verify web data before the first pixel is rendered. (Source: VentureBeat) The reported result is 99% text accuracy, which addresses long-standing rendering problems with non-Latin scripts such as Hindi, Bengali, Japanese, and Korean. (Source: Gadgets 360)

Support for the C2PA provenance standard is now native, so every output carries the content credentials that current industry labeling requirements call for. (Source: VentureBeat) However, this precision comes at a literal cost. API pricing is now token-based at $8 per 1M input tokens and $30 per 1M output tokens. (Source: The Decoder)

A standard 1024x1024 generation costs approximately $0.21, while advanced "Thinking" generations at 2K resolution can spike to $0.40 per image. (Source: Simon Willison’s Weblog) For high-volume production environments, these unit costs are significant enough to make a CFO reach for the beta blockers.
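To put those numbers in budgeting terms, here is a minimal sketch of the arithmetic in Python. The two rates are the ones quoted above; the function names, the 10,000-images-per-day volume, and the 30-day month are hypothetical, chosen only for illustration. The article does not state token counts per image, so the token figures are back-calculated on the assumption that the whole per-image price comes from output tokens.

```python
# Rates quoted in the article, in USD per 1M tokens.
INPUT_USD_PER_MTOK = 8.00
OUTPUT_USD_PER_MTOK = 30.00


def image_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request under token-based pricing."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


def implied_output_tokens(cost_usd: float) -> int:
    """Output tokens implied by a per-image price.

    Assumption: input-token cost is ignored, so this is an upper bound
    on the output tokens actually billed.
    """
    return round(cost_usd * 1_000_000 / OUTPUT_USD_PER_MTOK)


def monthly_cost_usd(images_per_day: int, cost_per_image: float, days: int = 30) -> float:
    """Projected spend for a steady daily volume (hypothetical workload)."""
    return images_per_day * cost_per_image * days


if __name__ == "__main__":
    for label, price in [("standard 1024x1024", 0.21), ("Thinking at 2K", 0.40)]:
        tokens = implied_output_tokens(price)
        monthly = monthly_cost_usd(10_000, price)
        print(f"{label}: ~{tokens} output tokens, ${monthly:,.0f}/month at 10k images/day")
```

Under these assumptions the quoted $0.21 image works out to roughly 7,000 output tokens, and a pipeline producing 10,000 "Thinking" images a day would spend about $120,000 a month. Treat both as rough planning figures, not billing predictions.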

While the model leads in text and layout adherence, it still struggles with the "uncanny valley" aesthetic in human subjects. Competitors like Google’s Gemini NB2 remain the preferred choice for anatomical precision and photorealism. (Source: Startup Fortune) There is also a notable lack of transparency regarding the hardware requirements for enterprise local deployment.

Furthermore, the recent shutdown of the Sora team leaves the future of integrated video-image workflows at OpenAI an open question. Community sentiment remains lukewarm on the ethics front, as there is still no clear framework for compensating creators whose work trained the model. (Source: Hacker News)

Marcus's Take

If your stack requires automated UI prototyping or technical infographics, gpt-image-2 is the first model reliable enough for production pipelines. The text rendering is finally dependable for global deployments in non-Latin markets. However, for B2C applications involving human portraits or high-volume generation, a price of up to 40 cents per image and the "uncanny" aesthetic make it a poor choice. Use it for complex internal documentation and UI mockups; stick to Gemini 2.5 or NB2 for high-fidelity photorealism.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

The Atomic Semi Technical Assessment: Portable Silicon Fabrication in 2026

Atomic Semi is a venture-backed startup co-founded by Sam Zeloof and industry veteran Jim Keller that aims to decentralise semiconductor manufacturing. By building small, portable "mini-fabs," the com

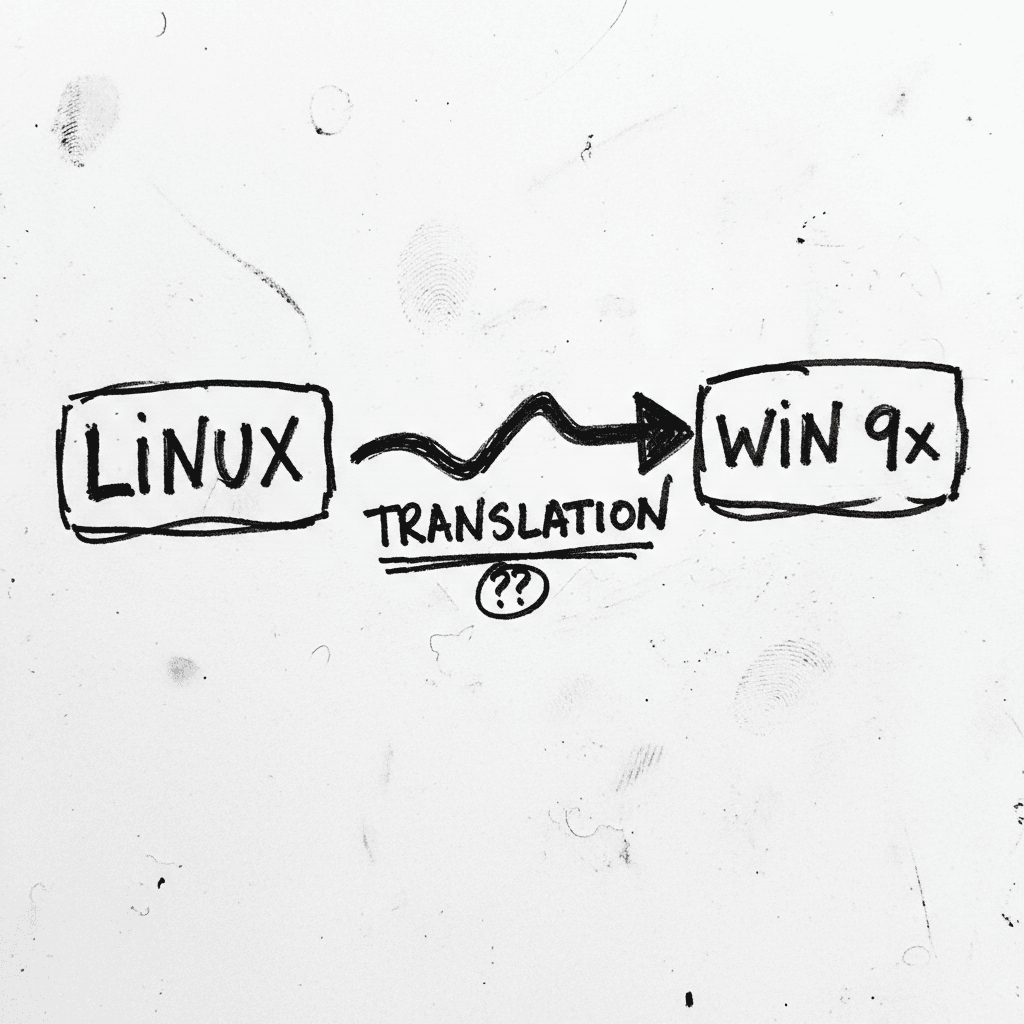

W9SL: Syscall Translation for Legacy Windows 9x Kernels

Windows 9x Subsystem for Linux (W9SL) is a syscall translation layer that allows unmodified Linux ELF binaries to run on Windows 95, 98, and Me without virtualization. It mimics the architecture of th

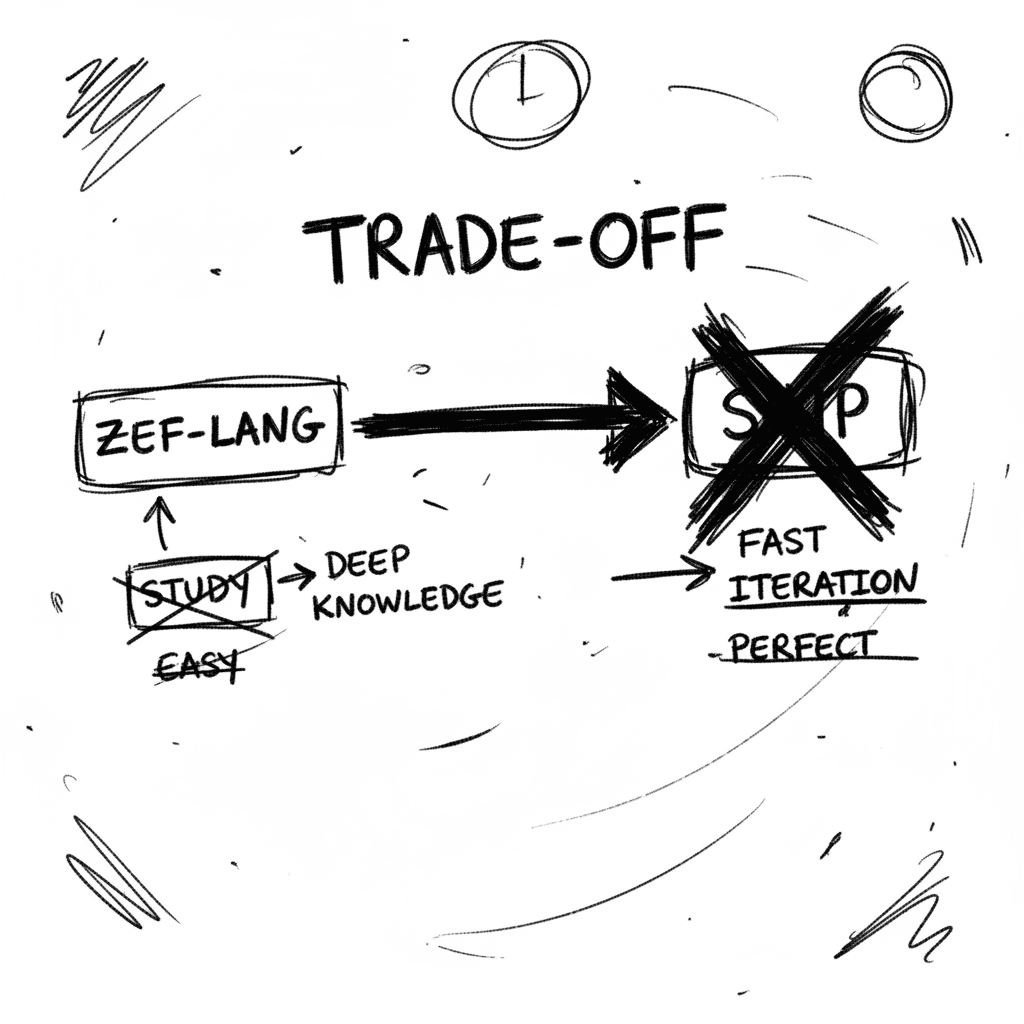

Zef-lang: Technical Architecture and Optimization Path

Zef-lang is an architectural blueprint for dynamic language interpreters designed by Filip Pizlo. It serves as a pedagogical implementation of high-performance VM techniques including hidden classes a

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.