The Thermal Reality of Orbital Data Centers

Axiom Space successfully launched its first two Orbital Data Center (ODC) nodes on January 11, 2026, marking the first serious attempt to move high-compute clusters into low-Earth orbit. The project aims to bypass terrestrial power and water constraints by using solar energy to train GPT-5-class models (Source: Axiom Space Official Release, Jan 2026).

The Pitch

The marketing narrative focuses on "free cooling" from the vacuum of space and the elimination of terrestrial environmental impact. Following Starcloud’s successful in-orbit training of a small-scale model in late 2025, proponents argue that orbital clusters are the only way to scale compute without straining Earth’s power grids (Source: EnkiAI Report 2026).

Under the Hood

The hardware foundation for this shift relies on AMD’s Versal VE2302 "Space-Grade" SoC, which achieved qualification for on-board AI inferencing in January 2026 (Source: AMD Aerospace & Defense, Jan 2026). While this allows for localized data processing, its performance significantly lags behind terrestrial Blackwell or X100 architectures.

The "free cooling" claim fails to account for basic thermodynamics. A vacuum behaves like a near-perfect thermos: with no surrounding medium, heat cannot be conducted or convected away. It can only leave by radiation, at a rate set by the Stefan-Boltzmann law (Source: HN Comment Analysis / EnkiAI 2026).
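For reference, the radiative limit mentioned above can be written out as follows. This is the standard gray-body form of the Stefan-Boltzmann law rather than anything quoted from the cited sources; the symbols (radiator area $A$, emissivity $\varepsilon$, radiator temperature $T$, effective sink temperature $T_{\text{sink}}$) are introduced here for illustration only.

```latex
P_{\text{rad}} = \varepsilon \, \sigma \, A \left( T^{4} - T_{\text{sink}}^{4} \right),
\qquad
\sigma \approx 5.67 \times 10^{-8}\ \mathrm{W\,m^{-2}\,K^{-4}}
```

Because the rejected power scales with $T^{4}$, a radiator held at chip-friendly temperatures sheds comparatively little heat per square meter, and the only other lever is more area.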

The area adds up quickly. Rejecting a single megawatt at chip-friendly radiator temperatures (around 300 K) takes on the order of a few thousand square meters of radiator surface, and a cluster drawing hundreds of megawatts to train Claude 4.5 Opus or GPT-5 class models pushes that toward a square kilometer. The European Commission’s ASCEND study confirmed that while carbon reduction is possible, megawatt scaling requires complex robotic assembly in orbit, a technology still in its infancy (Source: Thales Alenia Space / Horizon CORDIS 2025).
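A minimal sketch of the radiator sizing arithmetic, assuming an idealized flat radiator. The function name, the emissivity of 0.9, the 300 K radiator temperature, and the default 0 K sink (which ignores sunlight and Earth's infrared, so real radiators would need more area) are all illustrative assumptions, not figures from the cited sources.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4)
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sink_temp_k=0.0, sides=1):
    """Estimate the radiator area needed to reject heat_w watts by radiation alone.

    Assumptions (hypothetical, for illustration): uniform radiator temperature,
    gray-body emissivity, and a single effective sink temperature.
    """
    if temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    if sides not in (1, 2):
        raise ValueError("sides must be 1 or 2")
    # Net radiated power per square meter of one radiating face
    flux_w_per_m2 = emissivity * SIGMA * (temp_k**4 - sink_temp_k**4)
    return heat_w / (flux_w_per_m2 * sides)


if __name__ == "__main__":
    for megawatts in (1, 10, 100):
        area = radiator_area_m2(megawatts * 1e6, temp_k=300.0)
        print(f"{megawatts:>4} MW -> {area:,.0f} m^2 ({area / 1e6:.3f} km^2)")
```

Under these assumptions, 1 MW works out to roughly 2,400 m² of single-sided radiator and 100 MW to roughly 0.24 km². Running the radiator hotter shrinks the area steeply because of the fourth-power term, but it also raises the temperature the chips have to tolerate.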

We also face a significant "stranded asset" risk. Hardware launched today is fixed in orbit, while terrestrial clusters are upgraded in six-month cycles. By the time a megawatt ODC completes its commissioning phase, its silicon will likely be two generations behind its ground-based counterparts.
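The generation gap is simple arithmetic, sketched below. The six-month refresh cycle is the figure used in this article; the function name and the 12-month commissioning window are hypothetical values chosen only to show how the "two generations behind" estimate can arise.

```python
def generations_behind(months_in_service, refresh_cycle_months=6.0):
    """Count full terrestrial refresh cycles that elapse while orbital hardware stays fixed."""
    if refresh_cycle_months <= 0:
        raise ValueError("refresh_cycle_months must be positive")
    if months_in_service < 0:
        raise ValueError("months_in_service must be non-negative")
    # Only completed cycles count as a new hardware generation
    return int(months_in_service // refresh_cycle_months)


if __name__ == "__main__":
    # Hypothetical: commissioning takes 12 months from hardware freeze
    print(generations_behind(12))
```

A longer commissioning phase or a faster ground refresh cycle widens the gap, and the orbital hardware cannot be swapped out to close it.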

Furthermore, it is not yet known how high-energy cosmic rays will affect non-rad-hardened HBM3E memory during sustained training runs. The launch manifest for the rumored Starship-based megawatt projects also remains unconfirmed (Source: UsedBy Dossier).

Marcus's Take

ODCs are currently a triumph of PR over physics for anyone looking to train foundation models. While the AMD VE2302 is fine for low-power edge inference, the thermal bottleneck makes training a GPT-5 competitor in orbit a mathematical impossibility without a radiator the size of a small town. You cannot "free cool" a data center when you are trapped inside a thermos. Skip the orbital hype and keep your clusters on the ground where the cooling loops actually work.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

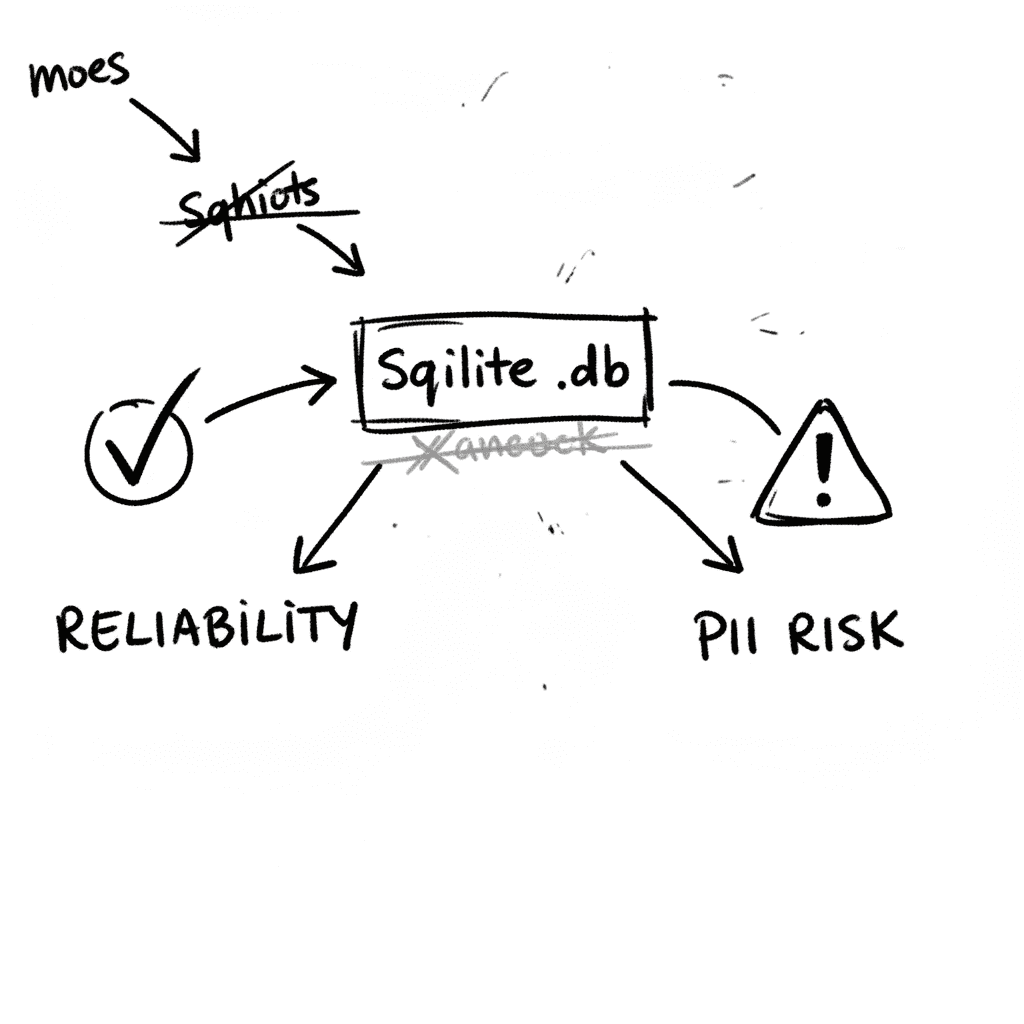

SQLite 3.53.1: Technical Reliability vs. Compliance Governance

SQLite is the industry’s default embedded database, now officially designated as a Recommended Storage Format (RSF) by the U.S. Library of Congress (Source: loc.gov RFS 2026). It remains the most depl

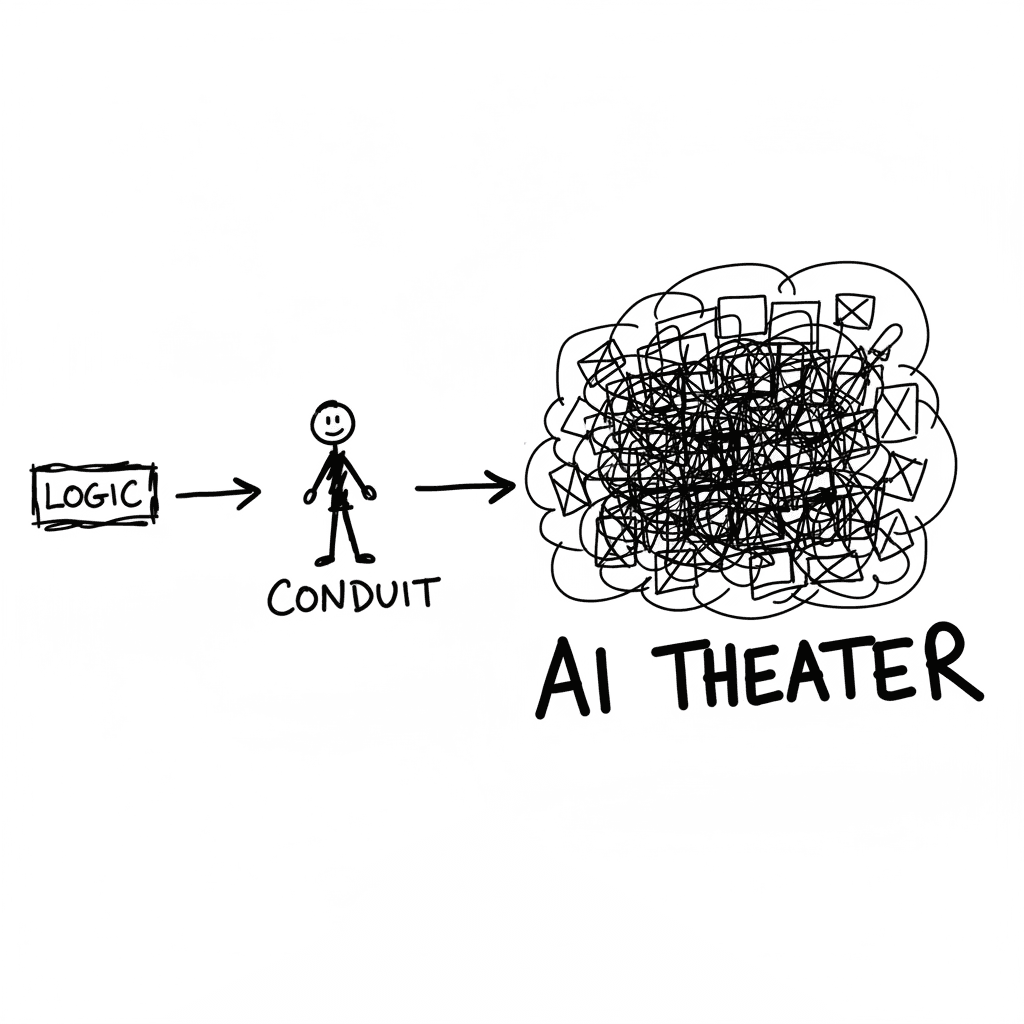

The Conduit Problem: Generative AI and the Hollowing of Technical Expertise

The primary metric for developer productivity in mid-2026 has shifted from logic density to artifact volume, fueled by LLM-driven "elongation" of workplace outputs. This phenomenon, labeled AI Product

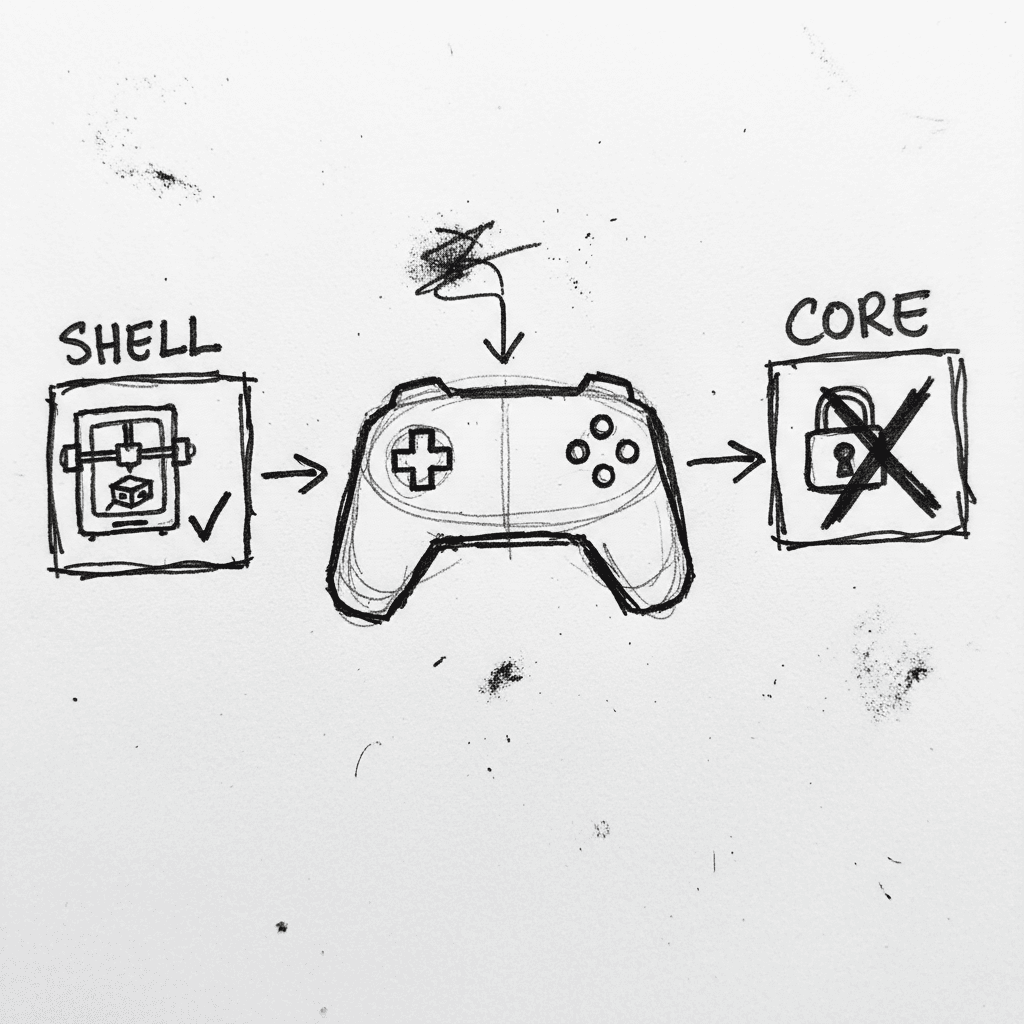

Valve Releases CAD Files for Steam Controller 2026 and Magnetic Puck

Valve has published the full engineering specifications and CAD files for the 2026 Steam Controller shell and its magnetic charging "Puck" on GitLab. (GitLab) This release, licensed under CC BY-NC-SA

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.