Chrome Prompt API and the Vendor Lock-in of On-Device Inference

The Pitch

Google is pushing its Prompt API into Origin Trial for Chrome users on Gemini 2.5 and 3.1 architectures (Blink Intent to Ship, April 2026). The API allows developers to execute LLM tasks like summarization and classification directly on the user's hardware, promising reduced latency and zero server overhead (GitHub). While the premise of "free" inference is tempting, the implementation forces a rigid dependency on Google’s specific model weights and content policies.
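To make the pitch concrete, here is a minimal TypeScript sketch of how a page might gate on-device summarization behind a server fallback. The `OnDeviceModel` interface is a hypothetical stand-in, injected so the snippet runs without a browser. It is loosely modeled on an availability-check, create-session, prompt flow; the method names, status strings, and prompt wording are all assumptions, not the shipped API surface.

```typescript
// Hypothetical status values; the real API's strings may differ.
type Availability = "unavailable" | "downloadable" | "downloading" | "available";

// Hypothetical minimal shape of an on-device model provider (assumption).
interface OnDeviceSession {
  prompt(input: string): Promise<string>;
}

interface OnDeviceModel {
  availability(): Promise<Availability>;
  create(): Promise<OnDeviceSession>;
}

type Backend = "on-device" | "server";

// Only use local inference when the model is ready right now.
// Anything else (missing, still downloading) goes to the server.
function chooseBackend(status: Availability): Backend {
  return status === "available" ? "on-device" : "server";
}

async function summarize(
  text: string,
  model: OnDeviceModel | undefined,
  serverFallback: (text: string) => Promise<string>
): Promise<string> {
  // Browser does not expose an on-device model at all.
  if (model === undefined) {
    return serverFallback(text);
  }
  const status = await model.availability();
  if (chooseBackend(status) === "server") {
    return serverFallback(text);
  }
  const session = await model.create();
  // Illustrative prompt wording only.
  return session.prompt("Summarize in one sentence:\n" + text);
}
```

Note that the fallback path means you still pay for a server. The "zero server overhead" claim only holds for users whose browser and hardware qualify.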

Under the Hood

The technical friction lies in what Mozilla calls "model calcification," a state where web applications are tuned specifically for the idiosyncrasies of Gemini Nano (Mozilla standards-positions #1213). Because system prompts are highly model-specific, a prompt optimized for Gemini won't yield predictable results on a potential Firefox implementation running Llama or Claude 4 Sonnet (GitHub #1213). This effectively revives the "Best viewed in Chrome" era under the guise of AI innovation.
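One way to limit calcification in your own code is to keep prompts out of the call sites and resolve them per model family, with a conservative default. The sketch below is a hedged illustration, not anything the Prompt API provides: `PromptRegistry`, the family keys (`"gemini-nano"`, `"llama"`), and the template text are all hypothetical, and it assumes you have some way of learning which model family the browser is running, which the API may not expose.

```typescript
// A prompt template tuned for one model family (hypothetical shape).
interface PromptTemplate {
  system: string;
  render(input: string): string;
}

class PromptRegistry {
  private templates: Map<string, PromptTemplate>;
  private fallback: PromptTemplate;

  constructor(fallback: PromptTemplate) {
    this.templates = new Map<string, PromptTemplate>();
    this.fallback = fallback;
  }

  // Register a family-specific template; returns this for chaining.
  register(family: string, template: PromptTemplate): PromptRegistry {
    this.templates.set(family, template);
    return this;
  }

  // Unknown or undisclosed families get the generic template.
  resolve(family: string | undefined): PromptTemplate {
    if (family === undefined) {
      return this.fallback;
    }
    const specific = this.templates.get(family);
    return specific === undefined ? this.fallback : specific;
  }
}

// Illustrative templates only; real tuning would be empirical.
const generic: PromptTemplate = {
  system: "You are a concise summarizer.",
  render: (input: string) => "Summarize:\n" + input,
};

const nanoTuned: PromptTemplate = {
  system: "Summarize in exactly one sentence. No preamble.",
  render: (input: string) => "TEXT:\n" + input + "\nSUMMARY:",
};

const registry = new PromptRegistry(generic).register("gemini-nano", nanoTuned);
```

This does not remove the tuning cost. It just makes the Gemini-specific part visible and replaceable instead of smeared across the frontend.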

Access to the API requires developers to acknowledge Google’s "Generative AI Prohibited Uses Policy," which restricts content generation even for local, on-device processing (Google Dev Policy 2026). This sets a precedent where a browser vendor acts as a global arbiter of local machine output, bypassing user autonomy. Furthermore, the W3C has flagged the WebML API for adding high-entropy vectors to user fingerprinting by exposing specialized NPU hardware parameters (W3C Privacy Review 2026).
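For a sense of what "high-entropy" means in the fingerprinting concern, identifying information is usually measured in bits of surprisal: an attribute shared by a fraction p of users contributes -log2(p) bits. The sketch below works through that arithmetic in TypeScript. The population shares are made-up numbers for illustration, not measurements from the W3C review, and summing bits assumes the attributes are statistically independent, which real hardware parameters often are not.

```typescript
// Bits of identifying information from one observed attribute value,
// given the share of users (0 < share <= 1) who present that value.
function surprisalBits(shareOfUsers: number): number {
  if (!(shareOfUsers > 0 && shareOfUsers <= 1)) {
    throw new RangeError("shareOfUsers must be in (0, 1]");
  }
  return -Math.log2(shareOfUsers);
}

// Total bits across several attributes, ASSUMING independence.
// Correlated attributes would contribute less than this sum.
function combinedBits(shares: number[]): number {
  return shares.reduce((sum, share) => sum + surprisalBits(share), 0);
}

// Hypothetical example: an NPU flag seen on half of users (1 bit)
// plus a memory tier seen on a quarter of users (2 bits).
const exampleBits = combinedBits([0.5, 0.25]); // 1 + 2 = 3 bits
```

Three bits narrows a user down to one in eight. Every extra hardware parameter exposed to the page adds to that total.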

We don't know yet whether the safety filters are enforced via a secondary guardrail model or a simpler regex-based implementation. Additionally, Apple’s official stance remains elusive, with the relevant WebKit issue showing no substantive progress beyond community tracking (WebKit #495). The minimum hardware requirements for the upcoming "Nano Banana 2" model also remain unspecified, leaving the baseline for 2026 "AI-ready" browsers unclear.

Marcus's Take

The Prompt API is less a standard than a strategic moat designed to make Gemini the default runtime for the web. By the time a developer finishes tuning prompts to navigate Google's specific "censorship layer," they have effectively locked their frontend into a single vendor's ecosystem. Using this in production today is a gamble on Google's benevolence that historical evidence suggests you will lose. Skip it for anything beyond throwaway prototypes.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

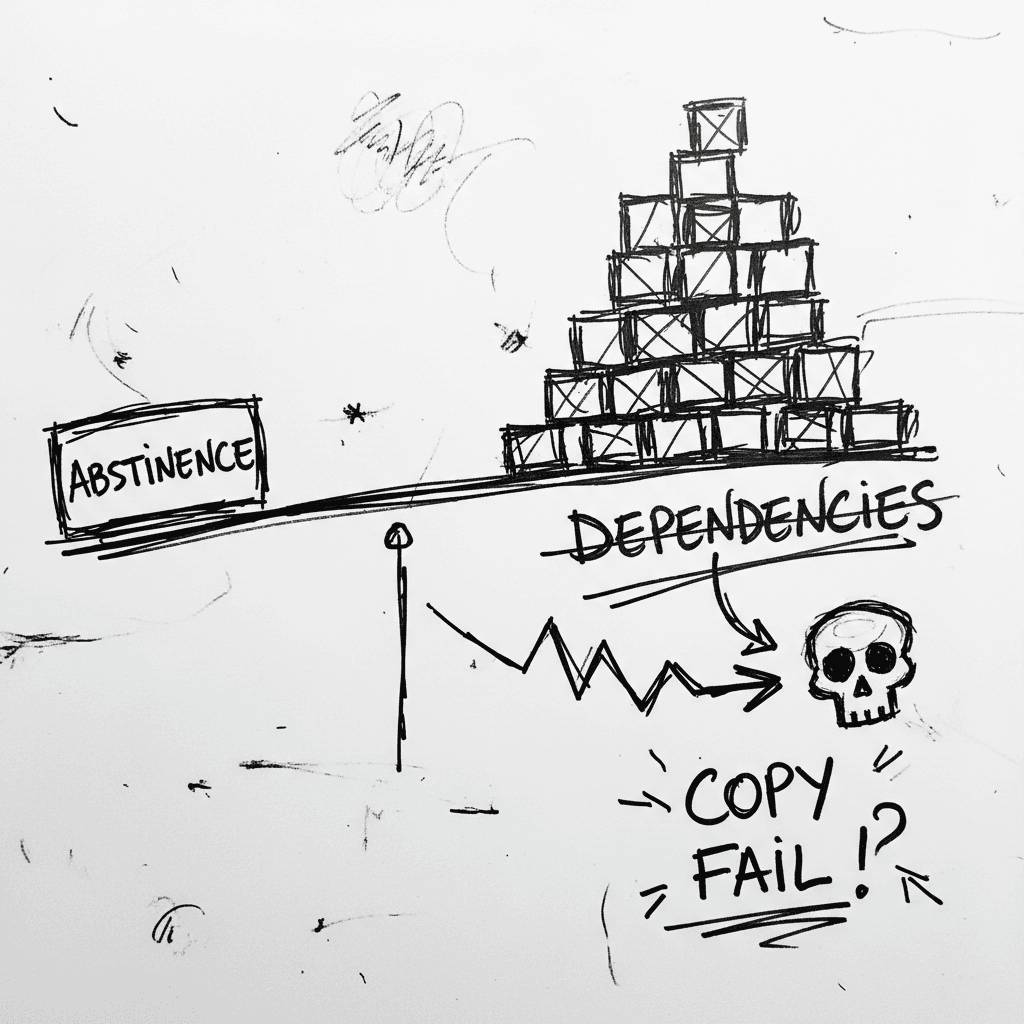

The Linux Kernel ‘Copy Fail’ and the Argument for Software Abstinence

CVE-2026-31431 is a deterministic Linux kernel Local Privilege Escalation (LPE) affecting nearly every major distribution released since 2017 (Source: Palo Alto Networks). Infrastructure authority Xe

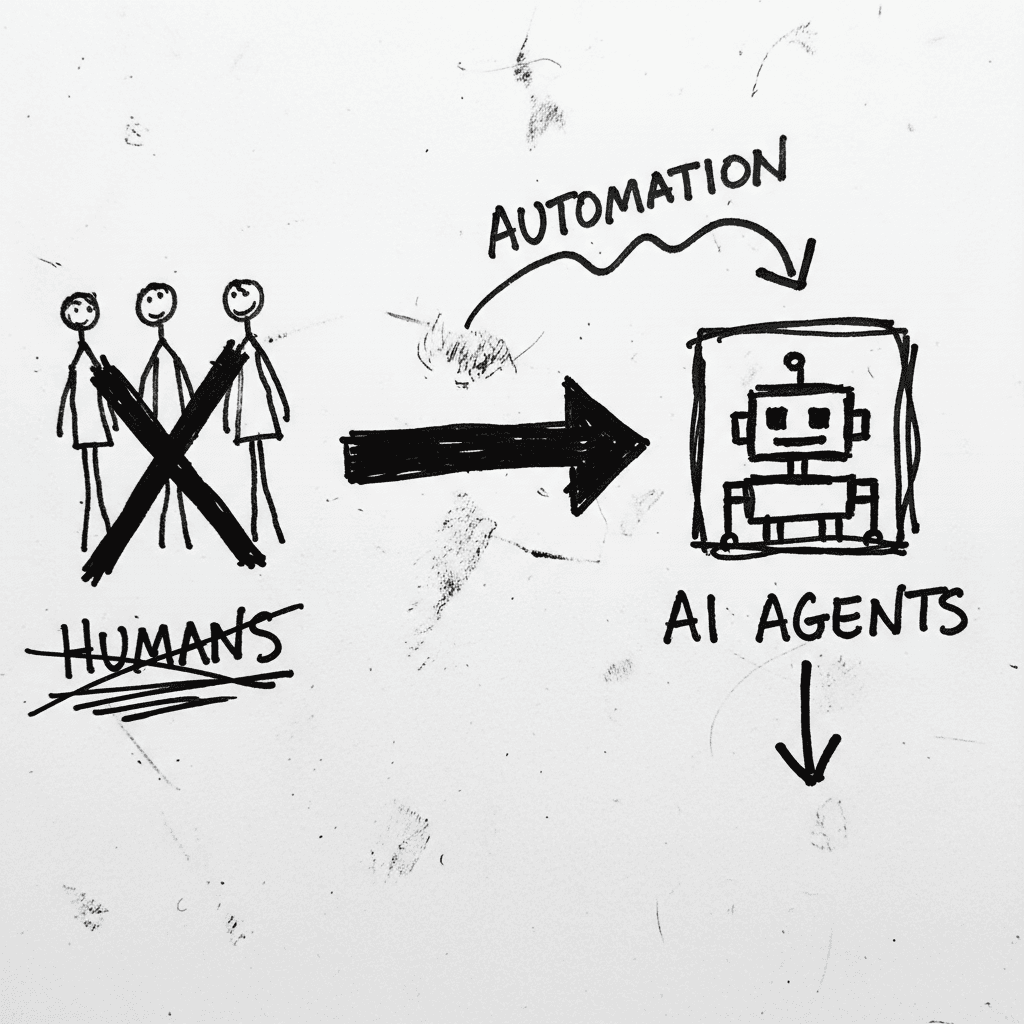

Cloudflare’s Agentic Restructuring and the 20% Workforce Cut

Cloudflare has announced a 20% reduction in its global workforce, citing a pivot to "agentic AI" as the primary driver for operational efficiency. While management claims internal AI agent usage incre

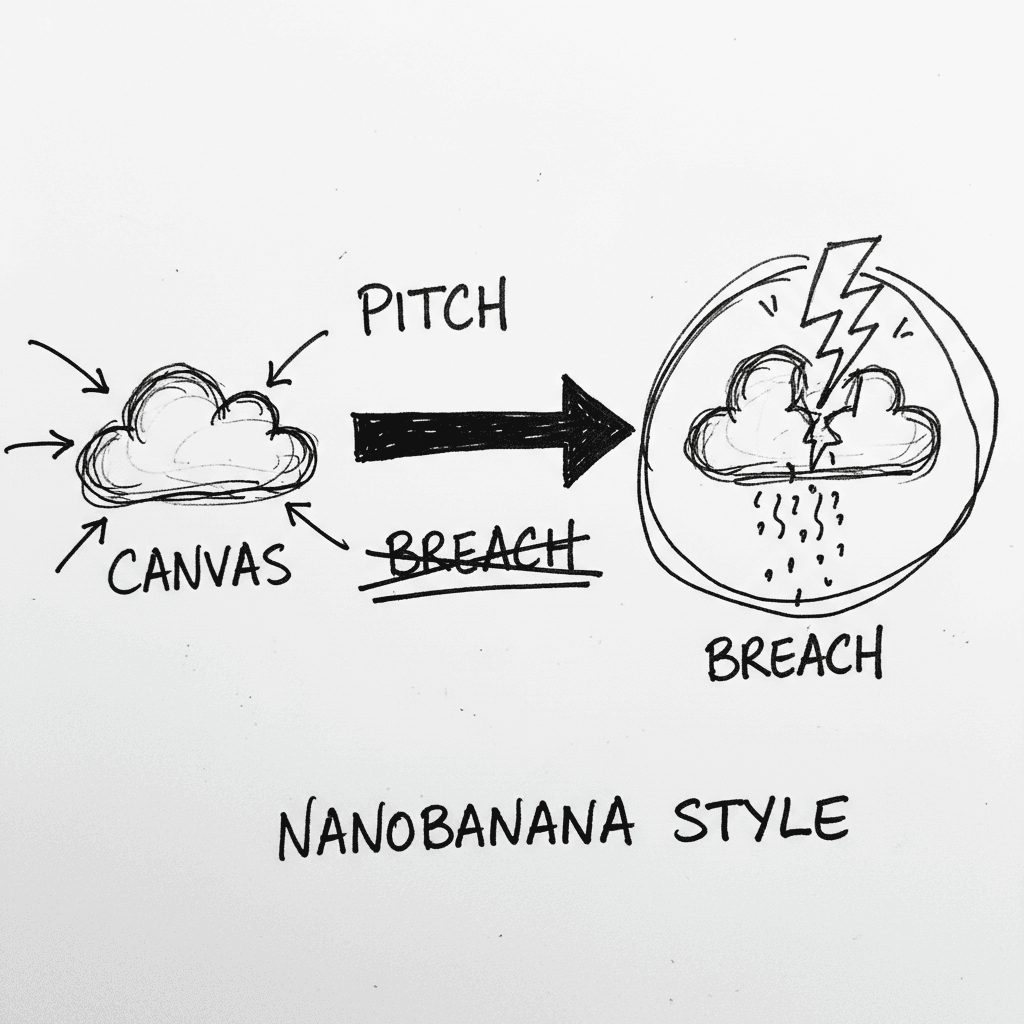

Instructure’s Canvas LMS crippled by nationwide outage and data breach during finals week

Canvas is the dominant Learning Management System (LMS) used by major institutions to centralize curriculum and satisfy ADA accessibility requirements. It is currently the focus of intense scrutiny as

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.