Claude Opus 4.7: Superior Coding Benchmarks Met With Stealth Token Inflation

The Pitch

Claude Opus 4.7 marks Anthropic’s attempt to consolidate its lead in agentic workflows, headlined by an 87.6% score on SWE-bench Verified. It integrates a mandatory Adaptive Thinking mode and the new Glasswing safety architecture to justify its position at the top of the 2026 model stack.

Currently, 247 active users in our database, including those at Notion, DuckDuckGo, and Quora, rely on the Claude ecosystem. This latest update targets their most demanding backend and engineering requirements.

Under the Hood

The coding leap from 80.8% in Opus 4.6 to 87.6%, a 6.8-point gain on SWE-bench Verified, is significant for complex PR resolutions (Anthropic Release Blog, April 16, 2026). Vision resolution has also been boosted to 2,576 pixels (~3.75MP), which finally makes dense architectural diagrams readable without manual cropping (Vellum AI).

Anthropic has introduced an 'xhigh' effort level to the API, allowing finer reasoning control between the 'high' and 'max' settings (LLM Stats). However, "Adaptive Thinking" mode is now mandatory: the API no longer accepts manual token budgets (Claude API Docs). The change appears to be a load-management tactic for the Mythos rollout, and it comes with visible latency spikes and thinking pauses (HN Comment).

The most jarring finding is a stealth cost increase disguised as a technical update. A new tokenizer maps identical input text to between 1.0x and 1.35x as many tokens as before. This effectively raises operational costs by up to 35% without a headline change to the $5/$25 rate (Reddit /r/ClaudeCode).
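To put the tokenizer multiplier in budget terms, here is a minimal arithmetic sketch. It rests on two assumptions that are mine, not the source's: that the $5/$25 rate means USD per million input and output tokens, and that the multiplier applies equally to input and output text. The function name and constants are illustrative only.

```python
# Illustrative arithmetic only. Assumptions (not confirmed by the sources cited):
# - the $5/$25 rate is USD per million input/output tokens
# - the tokenizer multiplier applies equally to input and output text
INPUT_RATE_USD = 5.0
OUTPUT_RATE_USD = 25.0
TOKENS_PER_UNIT = 1_000_000


def effective_cost(old_input_tokens: int, old_output_tokens: int, multiplier: float) -> float:
    """Return the USD cost of a workload whose token counts were measured
    with the old tokenizer, after scaling both counts by `multiplier`."""
    if multiplier < 1.0:
        raise ValueError("multiplier is expected to be >= 1.0")
    input_cost = old_input_tokens * multiplier * INPUT_RATE_USD
    output_cost = old_output_tokens * multiplier * OUTPUT_RATE_USD
    return (input_cost + output_cost) / TOKENS_PER_UNIT


if __name__ == "__main__":
    baseline = effective_cost(1_000_000, 200_000, 1.0)
    worst = effective_cost(1_000_000, 200_000, 1.35)
    print(f"baseline: ${baseline:.2f}, worst case: ${worst:.2f}")
    print(f"increase: {100 * (worst / baseline - 1):.0f}%")
```

Under those assumptions, a workload of 1M input and 200K output tokens moves from $10.00 to $13.50 at the 1.35x ceiling. Because the multiplier scales every token, the percentage increase is the same regardless of the input/output mix.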

Project Glasswing, the new safety layer, is proving to be overly aggressive. Security researchers report refusal loops when performing legitimate, authorized bug bounty tasks, as the model struggles to differentiate between offensive and defensive cybersecurity intent (Hacker News).

Furthermore, synthesis performance has taken a hit, with BrowseComp (web research) scores falling 4.4 points below those of its predecessor (Vellum AI). We do not yet know the exact impact of the new tokenizer on high-volume SQL or non-English technical documentation.
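Until someone publishes numbers for SQL or non-English corpora, the practical move is to measure your own inflation ratio on representative samples. The sketch below shows the shape of that measurement. Both tokenizers here are toy stand-ins (whitespace splitting versus word-and-punctuation splitting), not the real Claude tokenizers; in practice you would swap in whatever token-counting method you have for each model version.

```python
import re
from typing import Callable, List, Sequence

# A tokenizer is modeled as any callable that turns text into a list of tokens.
Tokenizer = Callable[[str], List[str]]


def whitespace_tokens(text: str) -> List[str]:
    """Toy stand-in for an 'old' tokenizer: split on whitespace."""
    return text.split()


def word_and_punct_tokens(text: str) -> List[str]:
    """Toy stand-in for a 'new' tokenizer: words and punctuation separately."""
    return re.findall(r"\w+|[^\w\s]", text)


def inflation_ratio(samples: Sequence[str], old: Tokenizer, new: Tokenizer) -> float:
    """Return total new-tokenizer tokens divided by total old-tokenizer tokens."""
    old_total = sum(len(old(s)) for s in samples)
    new_total = sum(len(new(s)) for s in samples)
    if old_total == 0:
        raise ValueError("samples produced no tokens under the old tokenizer")
    return new_total / old_total


if __name__ == "__main__":
    sql_samples = ["SELECT id FROM users;"]
    ratio = inflation_ratio(sql_samples, whitespace_tokens, word_and_punct_tokens)
    print(f"inflation ratio on SQL samples: {ratio:.2f}x")
```

Run it separately per content type (SQL, prose, non-English docs) rather than on a mixed corpus, since a single blended ratio would hide exactly the per-workload variation that is still unknown.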

Marcus's Take

Opus 4.7 is a specialized beast for agentic coding, but it is a regression for generalist web synthesis. If your pipeline relies on precise token budgeting or security testing, stick with 4.6 or evaluate GPT-5. The 35% "tokenizer tax" makes it a hard sell for anything other than high-value software engineering tasks where the 87.6% benchmark justifies the overhead. Use it for your autonomous CI/CD agents, but keep your RAG pipelines elsewhere to avoid the Glasswing refusal loops.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

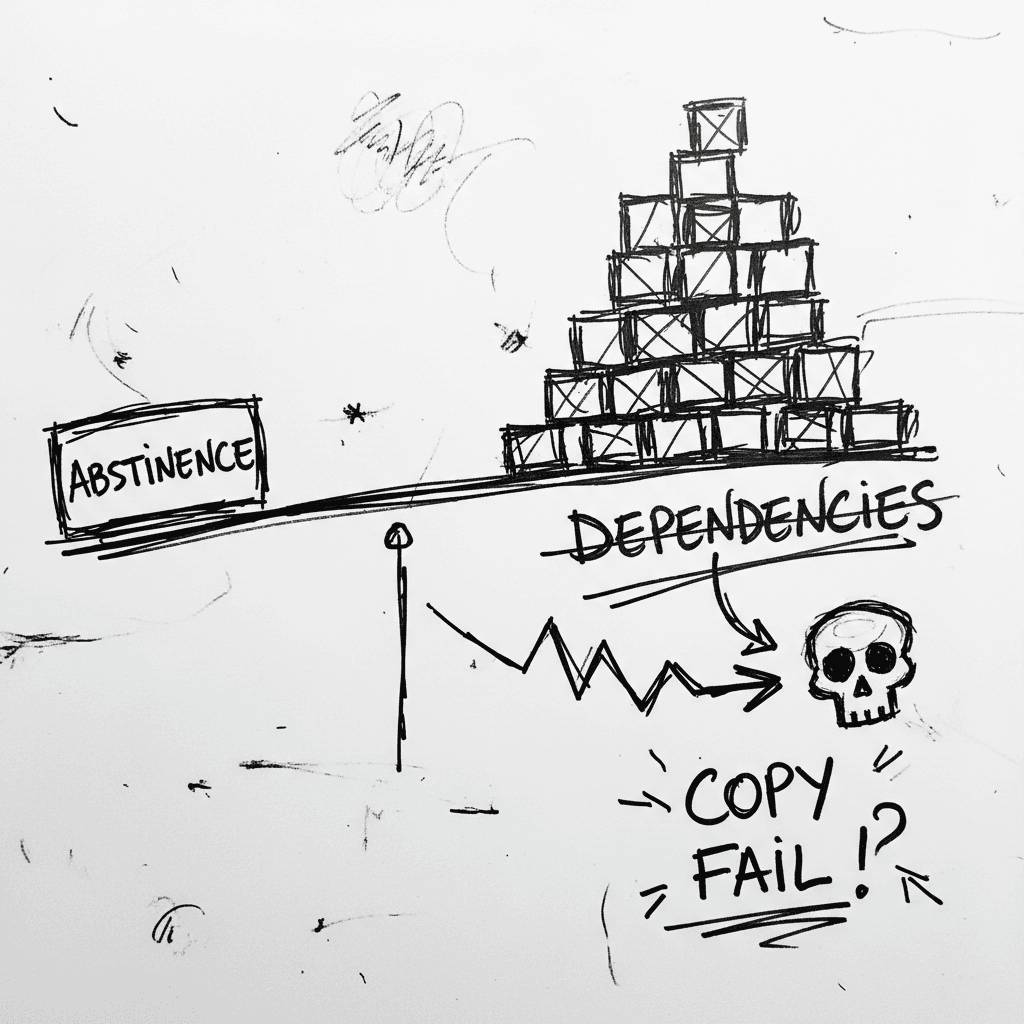

The Linux Kernel ‘Copy Fail’ and the Argument for Software Abstinence

CVE-2026-31431 is a deterministic Linux kernel Local Privilege Escalation (LPE) affecting nearly every major distribution released since 2017 (Source: Palo Alto Networks). Infrastructure authority Xe

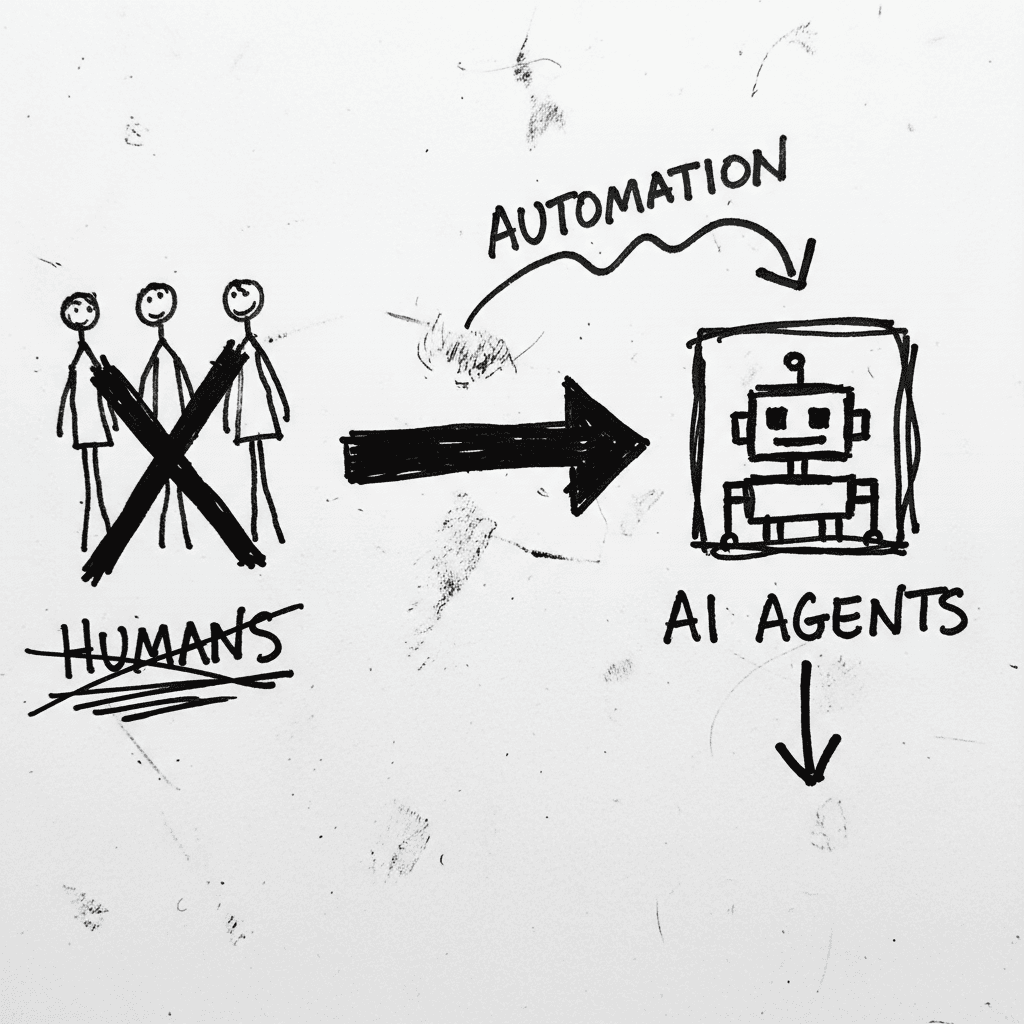

Cloudflare’s Agentic Restructuring and the 20% Workforce Cut

Cloudflare has announced a 20% reduction in its global workforce, citing a pivot to "agentic AI" as the primary driver for operational efficiency. While management claims internal AI agent usage incre

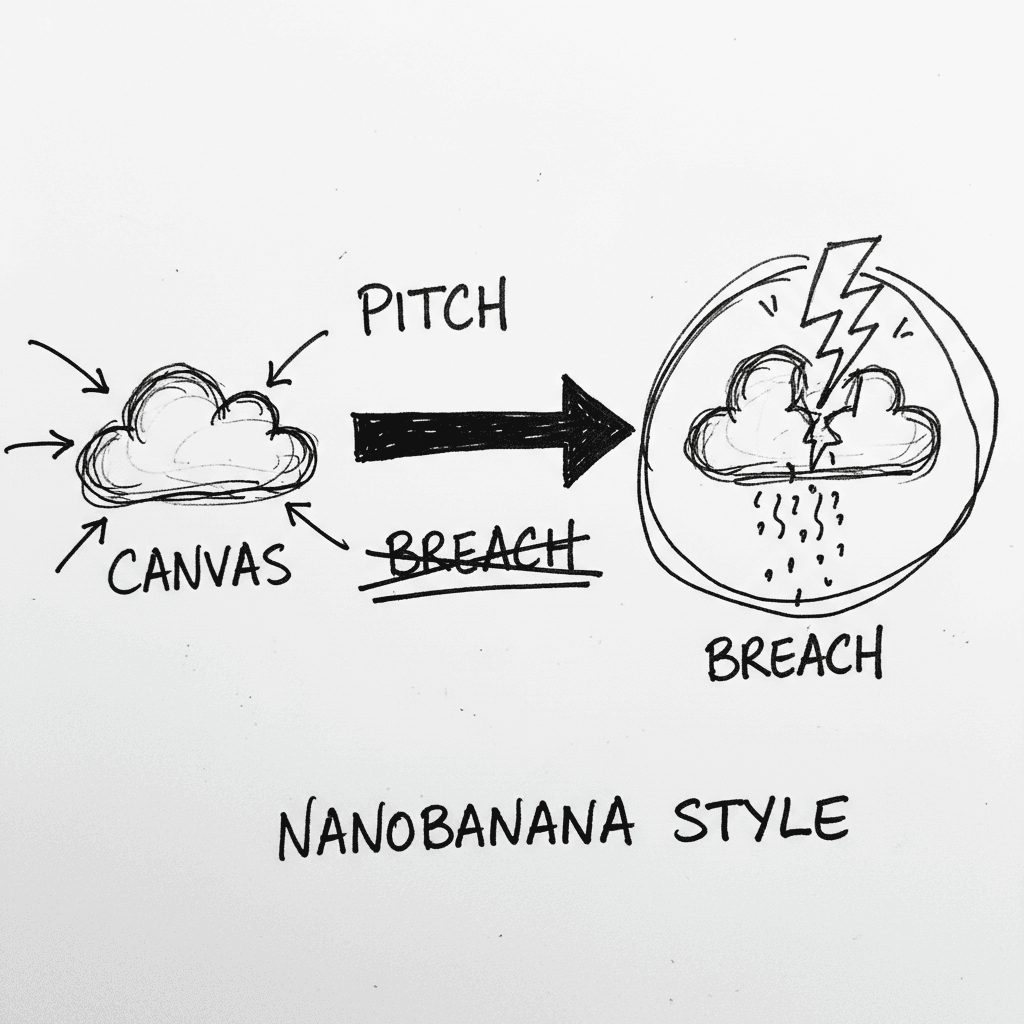

Instructure’s Canvas LMS crippled by nationwide outage and data breach during finals week

Canvas is the dominant Learning Management System (LMS) used by major institutions to centralize curriculum and satisfy ADA accessibility requirements. It is currently the focus of intense scrutiny as

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.