Grok 4.3: High-Throughput Inference and Native Document Synthesis

The Pitch

Grok 4.3 currently leads the frontier model market in raw inference speed, clocked at 202.7 tokens per second (Artificial Analysis, 2026). It combines a 2-million-token context window with the ability to natively generate office-ready files like XLSX and PPTX.

Under the Hood

The most significant technical metric for Grok 4.3 is its throughput-to-cost ratio. At $1.25 per 1M input tokens and $2.50 per 1M output tokens, it provides a faster and more affordable alternative to GPT-5 for high-volume pipelines (Artificial Analysis, April 2026).
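To make the throughput-to-cost figure concrete, here is a rough back-of-envelope estimator built only from the numbers quoted above. It assumes the 202.7 tok/s figure applies to output generation and ignores time-to-first-token, rate limits, and batching, so treat the results as a ballpark rather than a billing forecast. The function name and structure are illustrative.

```python
# Figures quoted in this article (USD per 1M tokens, tokens per second).
INPUT_PRICE_PER_M = 1.25
OUTPUT_PRICE_PER_M = 2.50
OUTPUT_TOKENS_PER_SECOND = 202.7  # assumption: applies to output generation


def estimate_job(input_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Return (cost in USD, generation time in seconds) for one job."""
    cost = (
        input_tokens / 1_000_000 * INPUT_PRICE_PER_M
        + output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M
    )
    # Only output tokens are counted against throughput in this sketch.
    seconds = output_tokens / OUTPUT_TOKENS_PER_SECOND
    return cost, seconds


if __name__ == "__main__":
    cost, seconds = estimate_job(input_tokens=1_000_000, output_tokens=1_000_000)
    print(f"cost: ${cost:.2f}, generation time: {seconds / 60:.1f} min")
```

Swapping in a competitor's published prices and measured throughput gives a like-for-like comparison for your own pipeline volumes.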

The 2-million-token context window is the largest currently available among Western closed models as of May 2026 (LLM Reference). This allows for massive RAG injections, though the model’s lack of persistent session memory remains a significant hurdle for agentic workflows (Awesome Agents).
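A large window still needs a budget. The sketch below greedily packs pre-ranked documents into the 2-million-token window while reserving room for the response. The four-characters-per-token estimate is a crude assumption, not Grok's tokenizer, and `pack_documents` and `reserve_for_output` are hypothetical names chosen for illustration.

```python
CONTEXT_WINDOW = 2_000_000  # token limit quoted in this article


def rough_token_count(text: str) -> int:
    """Crude estimate (assumption): roughly 4 characters per token."""
    return max(1, len(text) // 4)


def pack_documents(ranked_docs, reserve_for_output=8_000, window=CONTEXT_WINDOW):
    """Greedily select documents, in ranked order, that fit the token budget.

    Returns (selected documents, estimated tokens used).
    """
    budget = window - reserve_for_output
    selected, used = [], 0
    for doc in ranked_docs:
        cost = rough_token_count(doc)
        if used + cost > budget:
            continue  # skip this one; a smaller, lower-ranked doc may still fit
        selected.append(doc)
        used += cost
    return selected, used
```

In a real pipeline you would replace `rough_token_count` with a count from the provider's own tokenizer, since the estimate can drift badly on code or non-English text.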

Unlike Claude 4.5 or GPT-5, Grok resets session state between conversations, forcing developers to manage long-term state in external vector stores. Furthermore, the "Heavy" mode, which uses 16 parallel agents, is restricted to a $300/month tier, a steep price for what amounts to better orchestration (AIToolsRecap).
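In practice, managing state yourself means persisting each turn and replaying the relevant history on the next request. The sketch below shows the idea with SQLite from the Python standard library rather than a vector store, purely to stay self-contained; a vector store would add similarity search on top of the same pattern. `SessionStore` and its methods are hypothetical names, and no model API call is shown.

```python
import sqlite3


class SessionStore:
    """Persists conversation turns so they can be replayed into a new session."""

    def __init__(self, path: str = ":memory:"):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS turns ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "session_id TEXT NOT NULL, "
            "role TEXT NOT NULL, "
            "content TEXT NOT NULL)"
        )

    def append(self, session_id: str, role: str, content: str) -> None:
        # The connection context manager commits on success, rolls back on error.
        with self.conn:
            self.conn.execute(
                "INSERT INTO turns (session_id, role, content) VALUES (?, ?, ?)",
                (session_id, role, content),
            )

    def history(self, session_id: str, limit: int = 50) -> list[dict]:
        """Return the most recent `limit` turns in chronological order."""
        rows = self.conn.execute(
            "SELECT role, content FROM ("
            "  SELECT id, role, content FROM turns"
            "  WHERE session_id = ? ORDER BY id DESC LIMIT ?"
            ") ORDER BY id ASC",
            (session_id, limit),
        ).fetchall()
        return [{"role": role, "content": content} for role, content in rows]
```

Before each request, the caller would load `history(session_id)` and prepend it to the new prompt, then `append` both the user message and the model's reply once the call returns.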

The model's native ability to generate downloadable PDFs, spreadsheets, and PowerPoint decks directly from a prompt is a legitimate time-saver for backend reporting (Awesome Agents). However, the underlying 0.5-trillion-parameter architecture still shows a bias toward X (Twitter) trends, occasionally prioritizing viral data over verified expert consensus (Progressive Robot).

No release date has been given for the 1-trillion-parameter "Step Change" update (Elon Musk via X, April 2026). Additionally, the official Grok 4.3 model card is missing from the x.ai newsroom; it currently exists only within developer documentation and beta selectors.

Marcus's Take

Grok 4.3 is an excellent choice for production pipelines where inference speed is the primary bottleneck. If your stack requires processing massive logs or generating automated spreadsheets at scale, the 200+ tok/s throughput makes it the logical choice over Claude 4.5 Opus for now. However, the lack of persistent memory and the $300/month price tag for the "Heavy" mode make it feel like a very fast engine in a slightly unfinished car. Use it for data processing and document synthesis, but keep your complex, multi-turn reasoning tasks on Claude 4.5 Opus.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

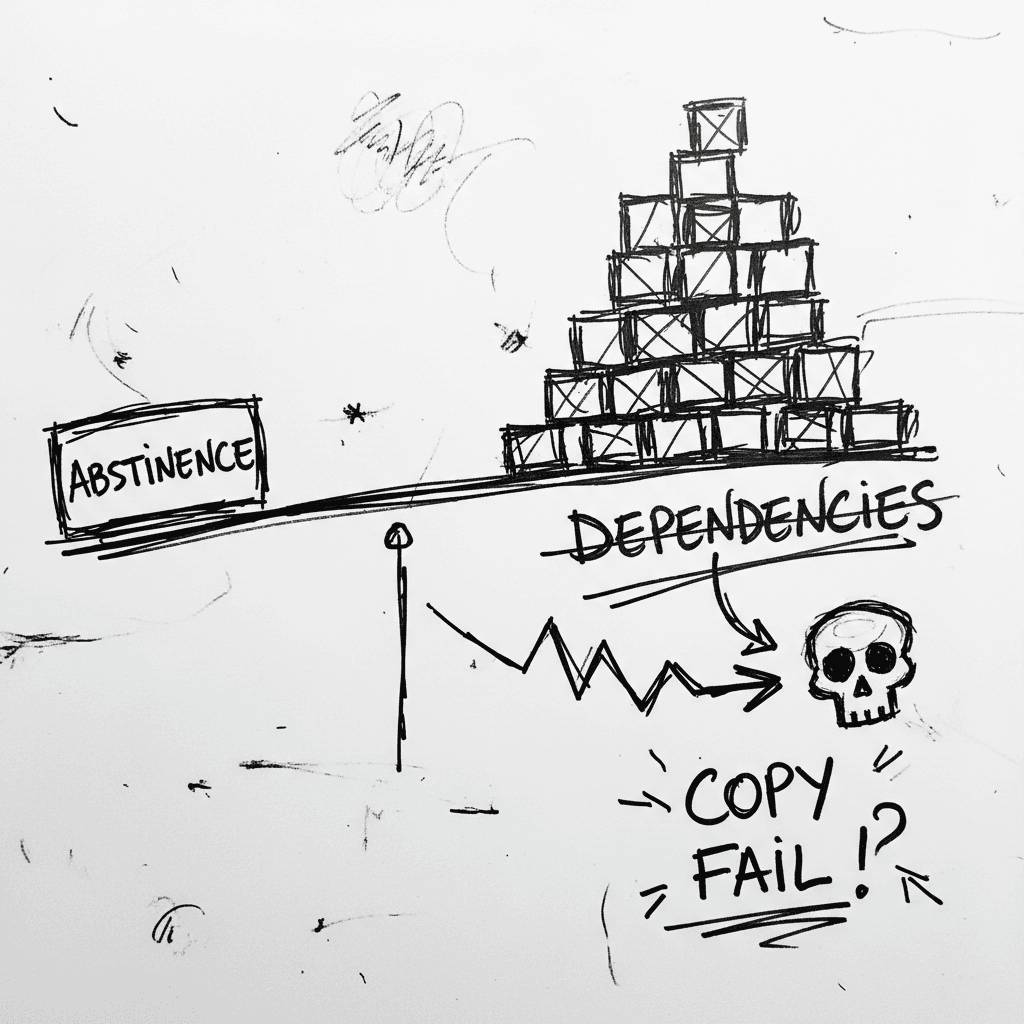

The Linux Kernel ‘Copy Fail’ and the Argument for Software Abstinence

CVE-2026-31431 is a deterministic Linux kernel Local Privilege Escalation (LPE) affecting nearly every major distribution released since 2017 (Source: Palo Alto Networks). Infrastructure authority Xe

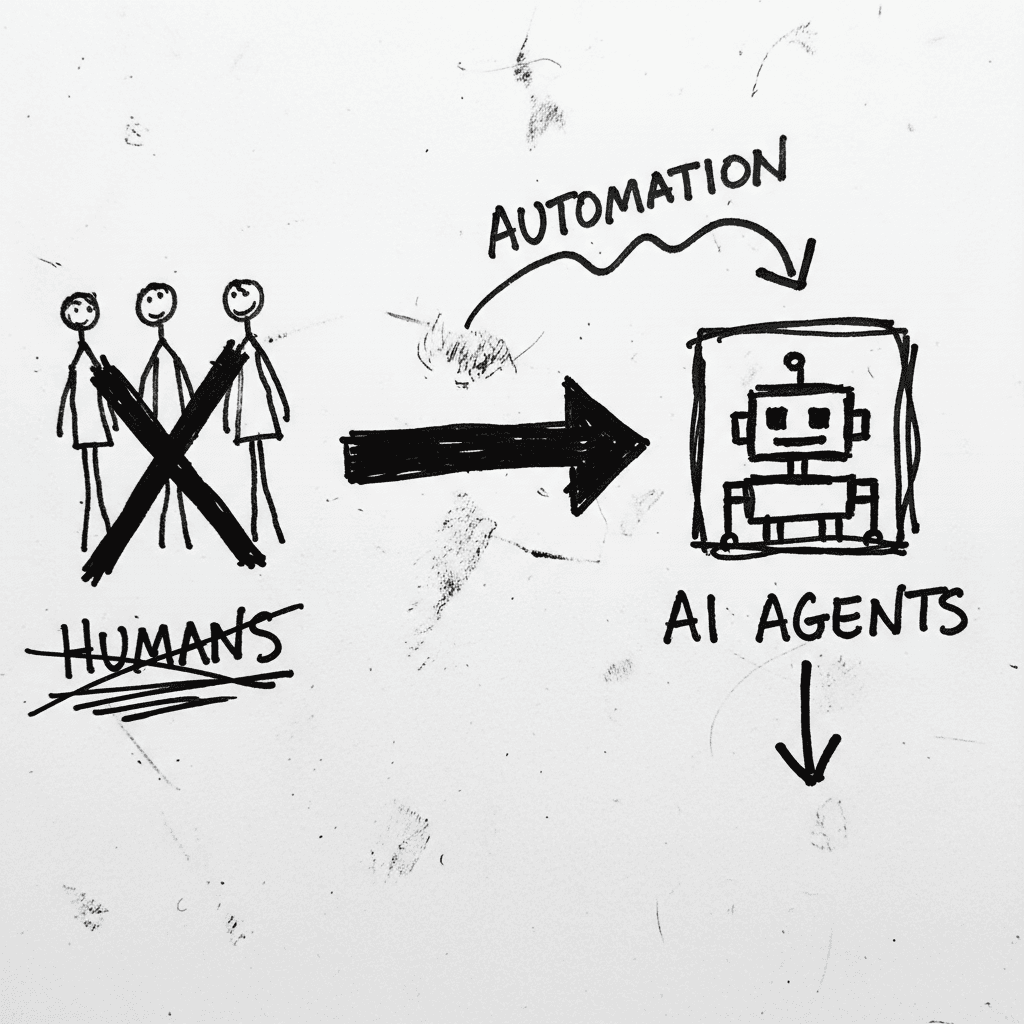

Cloudflare’s Agentic Restructuring and the 20% Workforce Cut

Cloudflare has announced a 20% reduction in its global workforce, citing a pivot to "agentic AI" as the primary driver for operational efficiency. While management claims internal AI agent usage incre

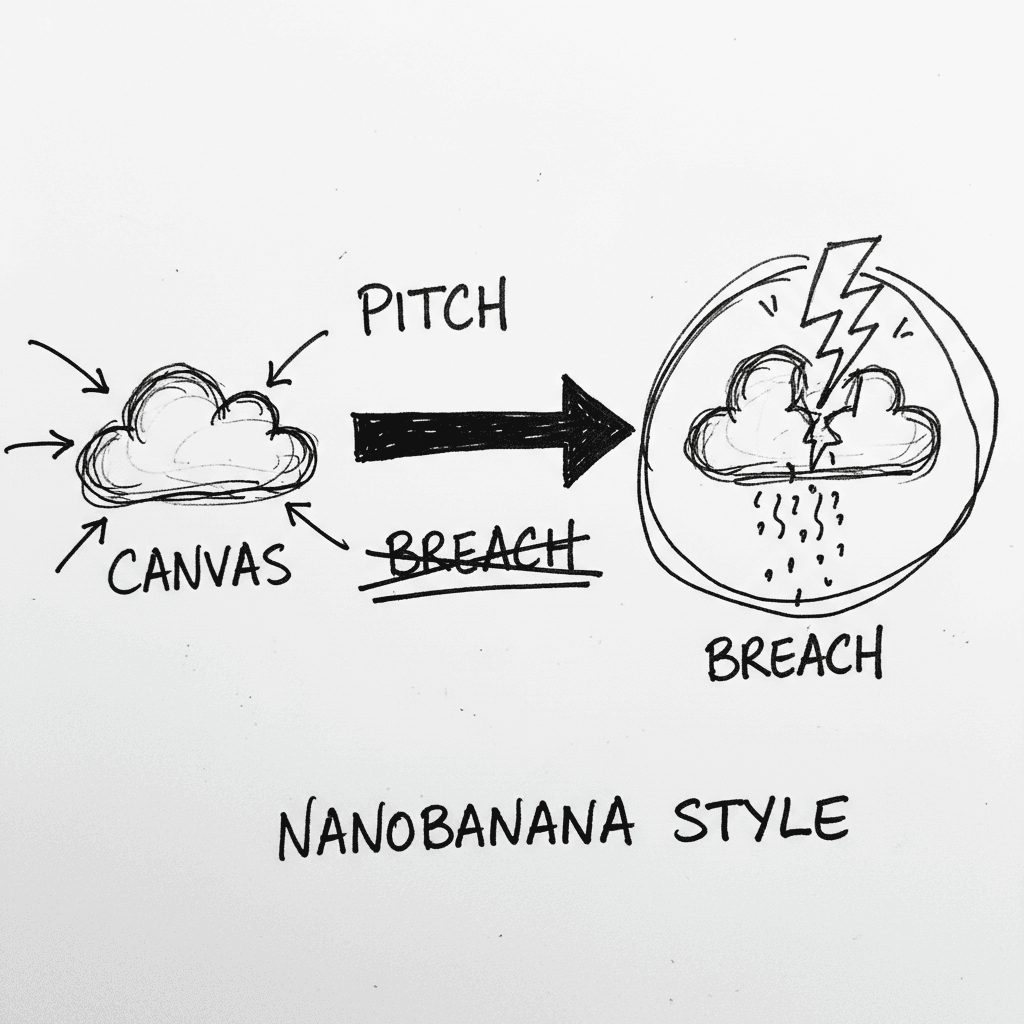

Instructure’s Canvas LMS crippled by nationwide outage and data breach during finals week

Canvas is the dominant Learning Management System (LMS) used by major institutions to centralize curriculum and satisfy ADA accessibility requirements. It is currently the focus of intense scrutiny as

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.