Spatial Logic Failures in SOTA Reasoning: The Car Wash Benchmark

The Pitch

The current crop of high-reasoning models (GPT-5, Claude 4.5, and Gemini 3) is marketed as possessing human-level spatial logic. Recent community testing via the "Car Wash Logic Test" suggests that physical common sense remains a significant bottleneck for agentic automation. While some models understand basic physical constraints, others prioritize numerical proximity over functional requirements.

Under the Hood

Claude 4.5 Opus and Claude 4 Sonnet correctly identify the physical necessity of driving a vehicle to a car wash (Mastodon/HN). Google’s Gemini 3 Pro and its "Fast" variants also pass this spatial logic check without issue (Mastodon/HN). These models maintain the link between the task (washing the car) and the required state (the car being present).

GPT-5.2, despite its high reasoning capabilities, frequently fails this test by suggesting the user walk the 50 m to the car wash, leaving the vehicle behind (Mastodon/HN). This is a textbook case of "over-reasoning hallucination," where the model prioritizes the physical ease of a short walk over the functional requirement of the task. The model appears to over-index on distance optimization at the expense of common sense.
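For anyone who wants to reproduce this kind of check, here is a minimal sketch of a pass/fail grader. The prompt wording, the keyword patterns, and the name `classify_answer` are all assumptions made for illustration; the exact prompt used in the community test is not quoted in the sources above.

```python
import re

# Hypothetical prompt wording; the community test's exact phrasing is not quoted here.
PROMPT = "The car wash is 50 m from my house. Should I walk or drive to get my car washed?"

# Assumed keyword heuristics. A serious evaluation would need human or model grading.
DRIVE_PATTERN = re.compile(r"\b(drive|driving)\b", re.IGNORECASE)
WALK_PATTERN = re.compile(r"\b(walk|walking)\b", re.IGNORECASE)


def classify_answer(answer: str) -> str:
    """Return 'pass', 'fail', or 'unclear' for a single model answer."""
    mentions_drive = bool(DRIVE_PATTERN.search(answer))
    mentions_walk = bool(WALK_PATTERN.search(answer))
    if mentions_drive and not mentions_walk:
        return "pass"  # the car goes to the car wash
    if mentions_walk and not mentions_drive:
        return "fail"  # the user arrives without the vehicle
    return "unclear"   # both or neither mentioned; needs manual review
```

Keyword matching is deliberately conservative: an answer such as "drive, don't walk" lands in `unclear` rather than being guessed at, so borderline responses get a human look.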

We are also seeing a persistent "hedging bias" across all SOTA models, where they qualify logical certainties with phrases like "Most car washes..." (HN). This likely stems from post-training alignment constraints intended to make models less abrasive, but it complicates production-grade agentic workflows. If an agent cannot definitively state that a car is needed for a car wash, it cannot be trusted with autonomous logistics.
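Hedging of this kind can be flagged with a simple phrase scan before an agent's answer is acted on. The sketch below is illustrative only: apart from "most car washes", which comes from the HN reports, the phrase list is an assumption, and `find_hedges` is a hypothetical helper name.

```python
# Assumed phrase list; only "most car washes" is taken from the reports cited above.
HEDGE_PHRASES = ("most car washes", "in most cases", "typically", "generally", "usually")


def find_hedges(answer: str) -> list[str]:
    """Return the hedge phrases found in an answer, in list order."""
    lowered = answer.lower()
    return [phrase for phrase in HEDGE_PHRASES if phrase in lowered]
```

A workflow could treat a non-empty result as a signal to re-prompt for a definite answer or to route the step to a human.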

We currently lack repeatability data for GPT-5.2 across varied system prompts, and official "Spatial Common Sense" benchmarks for 2026 versions remain unpublished by the major labs (UsedBy Dossier). Older reasoning models, such as o3-mini, only solve these problems when the prompt is framed as a riddle, indicating a lack of inherent logical grounding (Mastodon/HN).
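The missing repeatability data could be collected with a small harness along these lines. This is a sketch under stated assumptions: `ask` stands in for whatever client call you use to query a model (no vendor API is implied), `grade` is any function that returns True for a passing answer, and the default trial count is arbitrary.

```python
from typing import Callable, Dict, Iterable


def pass_rates(
    ask: Callable[[str, str], str],   # hypothetical: (system_prompt, user_prompt) -> answer
    grade: Callable[[str], bool],     # hypothetical: True if the answer passes
    system_prompts: Iterable[str],
    user_prompt: str,
    trials: int = 5,
) -> Dict[str, float]:
    """Run the same user prompt `trials` times per system prompt and return pass rates."""
    rates: Dict[str, float] = {}
    for system_prompt in system_prompts:
        passes = sum(
            1 for _ in range(trials) if grade(ask(system_prompt, user_prompt))
        )
        rates[system_prompt] = passes / trials
    return rates
```

Reporting a rate per system prompt, rather than a single pass/fail, makes it visible whether a failure is consistent or an artifact of one prompt framing.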

Marcus's Take

If you are shipping agentic workflows that involve physical logistics or spatial coordination, GPT-5.2 is a liability. It is technically brilliant but functionally dense, a bit like a junior dev who optimizes a sort algorithm while the server is literally on fire. Stick with Claude 4.5 Opus for any task where the physical "how" matters as much as the "what."

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

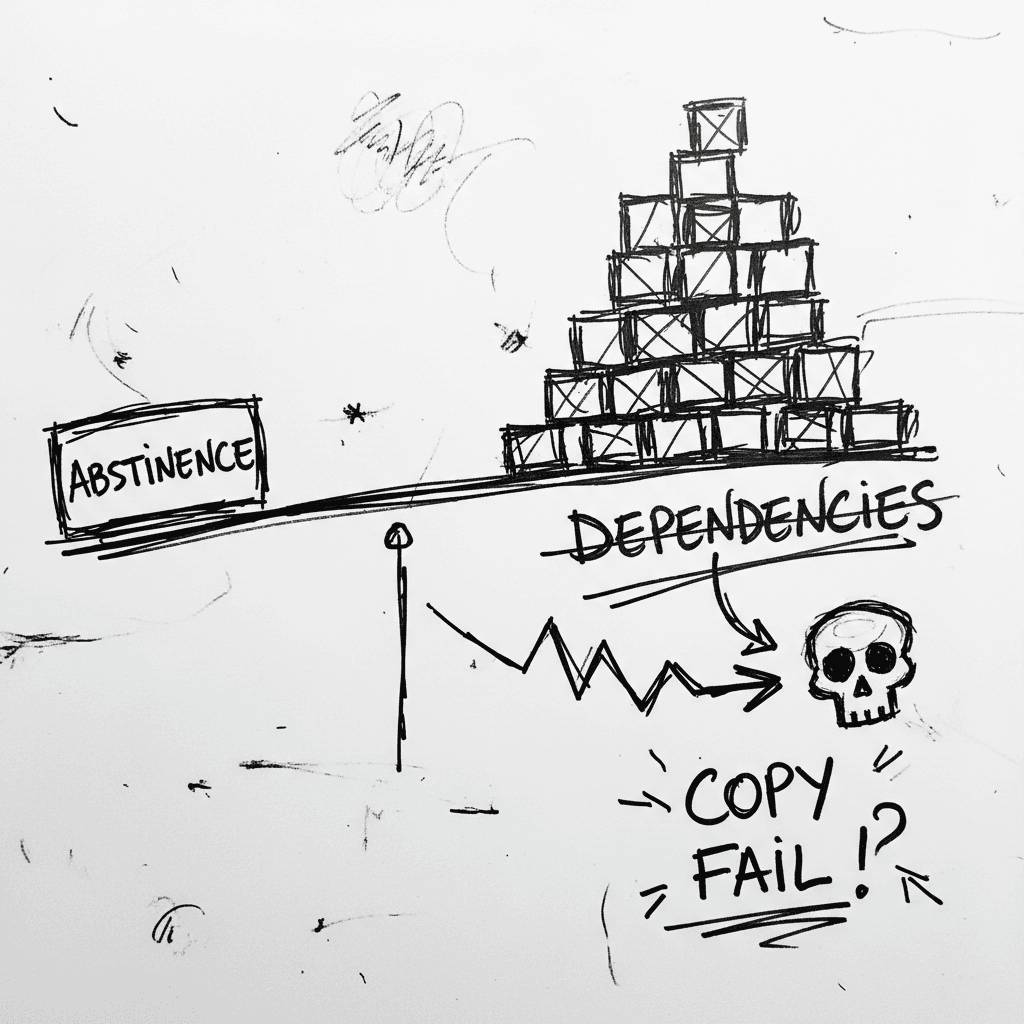

The Linux Kernel ‘Copy Fail’ and the Argument for Software Abstinence

CVE-2026-31431 is a deterministic Linux kernel Local Privilege Escalation (LPE) affecting nearly every major distribution released since 2017 (Source: Palo Alto Networks). Infrastructure authority Xe

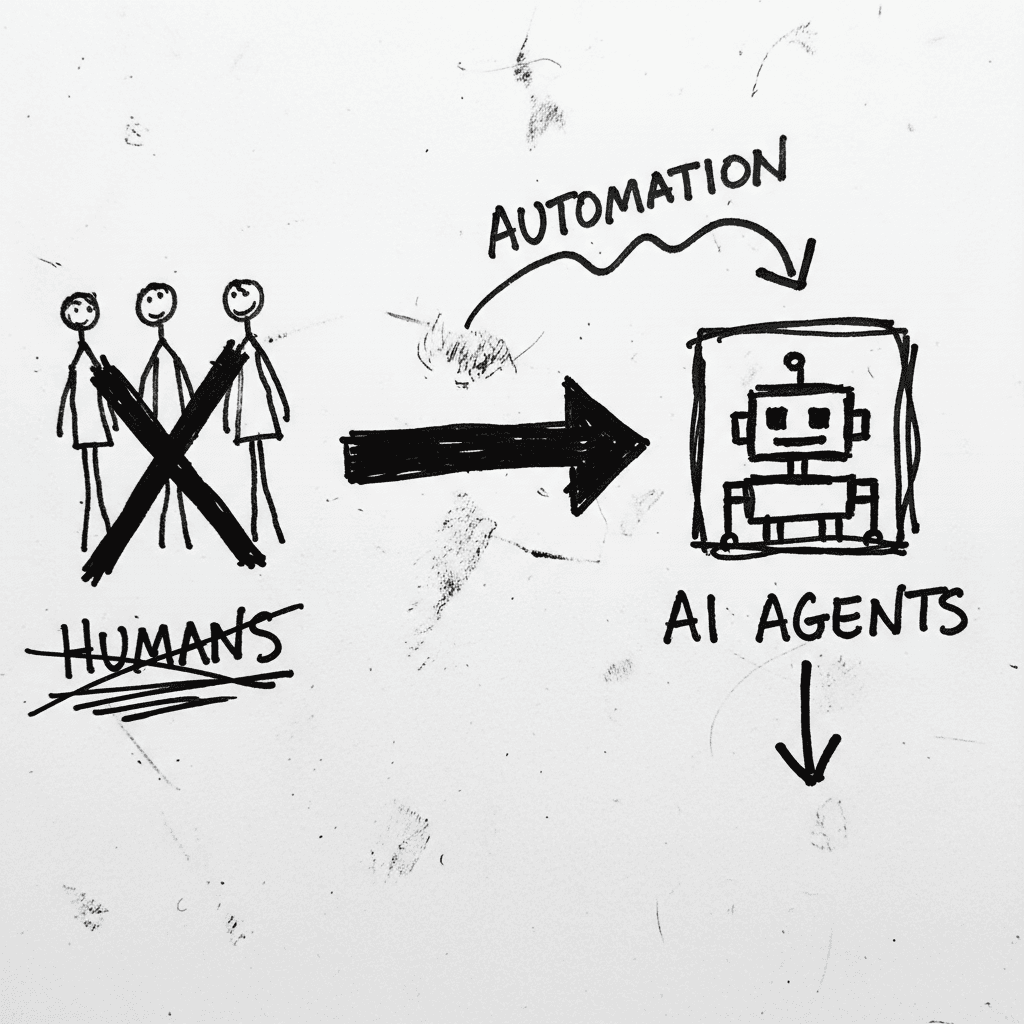

Cloudflare’s Agentic Restructuring and the 20% Workforce Cut

Cloudflare has announced a 20% reduction in its global workforce, citing a pivot to "agentic AI" as the primary driver for operational efficiency. While management claims internal AI agent usage incre

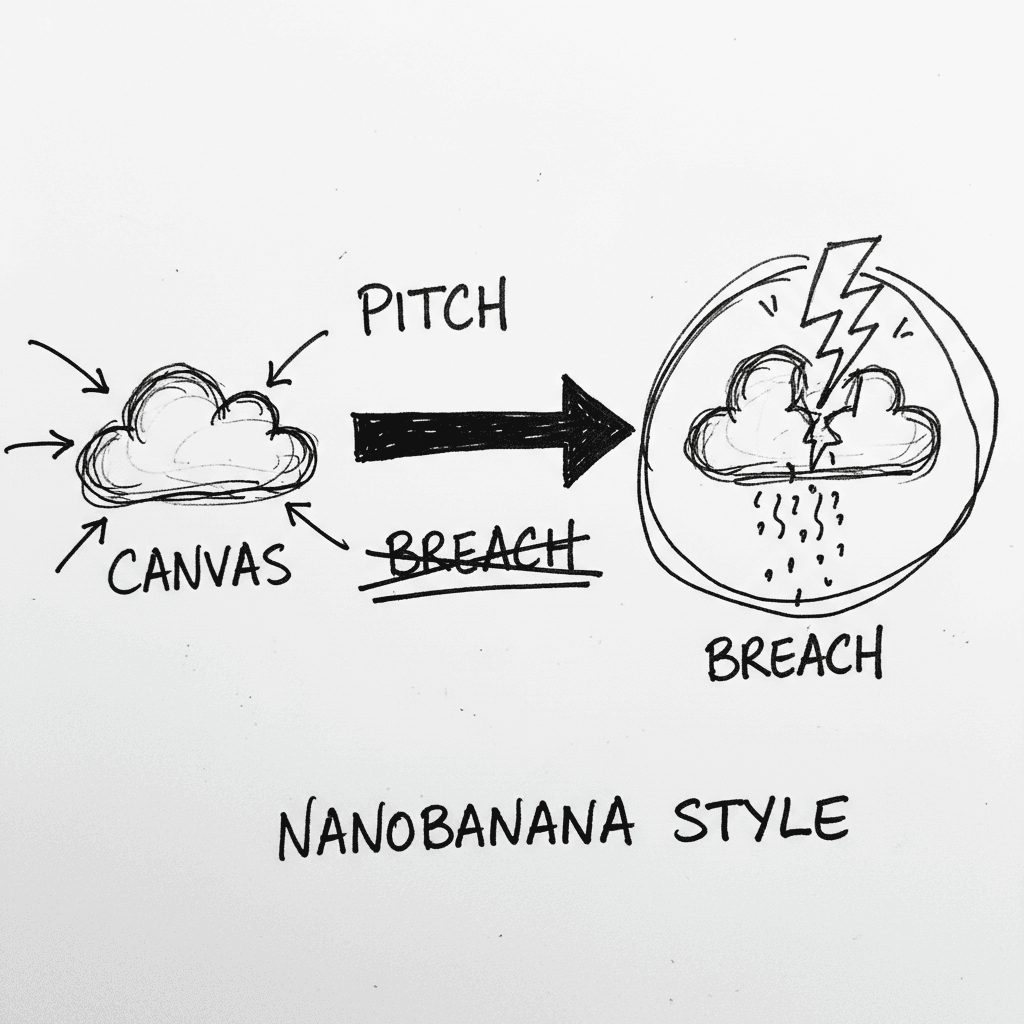

Instructure’s Canvas LMS crippled by nationwide outage and data breach during finals week

Canvas is the dominant Learning Management System (LMS) used by major institutions to centralize curriculum and satisfy ADA accessibility requirements. It is currently the focus of intense scrutiny as

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.