News Audit and the 49MB Page Load Benchmark


The Pitch

News Audit (webperf.xyz) is a leaderboard tracking the technical degradation of mainstream news sites through real-world performance metrics. It provides developers with raw data to justify optimization efforts to non-technical stakeholders (Source: webperf.xyz).

Under the Hood

A single article load on The New York Times was recently audited at 49MB and 422 network requests (Source: thatshubham.com). This is not an edge case; it is the product of "a thousand small incentive decisions" in which marketing teams bypass engineering entirely. Non-technical staff use Google Tag Manager (GTM) to inject scripts directly into production without code review (Source: HN).
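One way to reproduce a measurement like this on your own pages is to export a HAR file from the browser DevTools Network panel and total it. The sketch below is a minimal example, assuming the HAR 1.2 field layout (`log.entries[].request.url`, `response.headersSize`, `response.bodySize`, where -1 means unknown) plus Chrome's non-standard `_transferSize` extension when it is present. The function names and the first-party host rule are illustrative assumptions, not the method used in the audit cited above.

```python
import json
from urllib.parse import urlsplit


def load_har(path: str) -> dict:
    """Read a HAR file exported from the browser's Network panel."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def entry_bytes(entry: dict) -> int:
    """Bytes attributed to one HAR entry; unknown sizes count as 0."""
    response = entry.get("response", {})
    # Chrome-specific extension: bytes actually transferred over the network.
    transfer = response.get("_transferSize")
    if isinstance(transfer, (int, float)) and transfer >= 0:
        return int(transfer)
    # HAR 1.2 fallback: headers + body, where -1 means "not available".
    headers = max(response.get("headersSize", -1), 0)
    body = max(response.get("bodySize", -1), 0)
    return headers + body


def is_first_party(host: str, first_party_host: str) -> bool:
    """True for the first-party host itself or any of its subdomains."""
    return host == first_party_host or host.endswith("." + first_party_host)


def summarize_har(har: dict, first_party_host: str) -> dict:
    """Request count, total megabytes (decimal), and third-party request count."""
    entries = har.get("log", {}).get("entries", [])
    total_bytes = sum(entry_bytes(e) for e in entries)
    third_party = sum(
        1
        for e in entries
        if not is_first_party(urlsplit(e["request"]["url"]).hostname or "", first_party_host)
    )
    return {
        "requests": len(entries),
        "total_mb": total_bytes / 1_000_000,
        "third_party_requests": third_party,
    }
```

For example, `summarize_har(load_har("article.har"), "nytimes.com")`. Counting every host outside the first-party domain as third party is deliberately crude: it also flags a publisher's own separate asset domains, so treat that number as an upper bound.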

In-browser programmatic ad auctions now function like high-frequency financial markets, draining user battery and data before a single paragraph of content is rendered (Source: News Audit). The arrangement is also a significant security risk, because partner scripts are pushed to production without developer oversight (Source: HN).
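Because tag-manager changes go live without a deploy, one hedged mitigation is to audit what actually loads in production. The sketch below assumes a HAR 1.2 export of a page load and flags every host that served JavaScript but is missing from a reviewed allowlist. `REVIEWED_SCRIPT_HOSTS`, the `example-*` domains, and the helper names are all hypothetical placeholders.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: hosts whose scripts have passed engineering review.
REVIEWED_SCRIPT_HOSTS = {"www.example-news.com", "cdn.example-news.com"}


def is_script(entry: dict) -> bool:
    """Treat a HAR entry as a script if its MIME type mentions JavaScript."""
    mime = entry.get("response", {}).get("content", {}).get("mimeType", "")
    return "javascript" in mime.lower()


def unreviewed_script_hosts(har: dict, allowlist: set[str]) -> list[str]:
    """Hosts that served JavaScript but are absent from the reviewed allowlist."""
    hosts = set()
    for entry in har.get("log", {}).get("entries", []):
        if is_script(entry):
            host = urlsplit(entry["request"]["url"]).hostname or ""
            if host not in allowlist:
                hosts.add(host)
    return sorted(hosts)


def check(har: dict, allowlist: set[str] = REVIEWED_SCRIPT_HOSTS) -> int:
    """Gate-style check: print offenders and return 1 if any exist, else 0."""
    offenders = unreviewed_script_hosts(har, allowlist)
    for host in offenders:
        print(f"unreviewed script host: {host}")
    return 1 if offenders else 0
```

Since tag-manager edits bypass the deploy pipeline, a check like this is more useful on a schedule against production than only in CI. The MIME-type test is a heuristic and will miss scripts served with an unusual content type.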

The webperf.xyz platform itself required robust infrastructure to survive its viral launch in 2026. Cloudflare’s edge caching handled 19.24 GB of bandwidth with a 98.5% hit ratio (Source: HN). Even a tool dedicated to tracking bloat, in other words, depended on a CDN absorbing nearly all of its traffic to serve its data to a skeptical audience.
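The reported figures also indicate how little traffic reached the origin. Assuming the 98.5% figure is a bandwidth-based hit ratio (the source does not say whether it counts bytes or requests), the origin served about 1.5% of 19.24 GB, roughly 0.29 GB. A minimal sketch of that arithmetic, with a hypothetical helper name:

```python
def origin_bandwidth_gb(total_gb: float, cache_hit_ratio: float) -> float:
    """Bandwidth the origin still served, assuming the hit ratio is byte-based."""
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    # Cache misses are the fraction (1 - hit ratio) of all bytes delivered.
    return total_gb * (1.0 - cache_hit_ratio)


print(f"{origin_bandwidth_gb(19.24, 0.985):.3f} GB")  # prints "0.289 GB"
```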

Users are already reacting to this technical debt by abandoning bloated UIs for AI-driven "clean reading" tools. GPT-5 summary agents are increasingly used to strip away frontend noise, threatening the traditional ad-supported business model (Source: HN). It seems the industry has finally found a way to make 49MB of JavaScript irrelevant by simply not loading the page at all.
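The sources do not describe how GPT-5 summary agents process pages. As a much simpler, non-AI illustration of what "stripping away frontend noise" means mechanically, the sketch below uses Python's standard-library `html.parser` to keep only `<p>` text and discard `<script>`, `<style>`, and `<noscript>` content, a crude version of the idea behind browser reader modes. `ParagraphExtractor` and `extract_text` are hypothetical names, and real pages often need smarter heuristics (for example, `<div>`-only layouts).

```python
from html.parser import HTMLParser


class ParagraphExtractor(HTMLParser):
    """Collect text inside <p> elements, ignoring script/style/noscript content."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self.paragraphs: list[str] = []
        self._in_p = False
        self._skip = 0
        self._buffer: list[str] = []

    def _flush(self) -> None:
        # Collapse runs of whitespace and store non-empty paragraphs.
        text = " ".join("".join(self._buffer).split())
        if text:
            self.paragraphs.append(text)
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip += 1
        elif tag == "p":
            # A new <p> implicitly closes an open one, as in HTML.
            if self._in_p:
                self._flush()
            self._in_p = True

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip:
            self._skip -= 1
        elif tag == "p" and self._in_p:
            self._flush()
            self._in_p = False

    def handle_data(self, data):
        if self._in_p and not self._skip:
            self._buffer.append(data)

    def close(self) -> None:
        super().close()
        # Keep a trailing paragraph whose </p> never arrived.
        if self._in_p:
            self._flush()
            self._in_p = False


def extract_text(html: str) -> str:
    """Return paragraph text separated by blank lines."""
    parser = ParagraphExtractor()
    parser.feed(html)
    parser.close()
    return "\n\n".join(parser.paragraphs)
```

A tool built this way downloads only the HTML document and never executes its scripts or requests its subresources, which is why most of a page's weight simply never loads.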

We currently lack data quantifying how specific bloat milestones, such as crossing 30MB, correlate with user churn and its financial cost (UsedBy Dossier). The long-term sustainability and funding model of the webperf.xyz project also remain unclear (UsedBy Dossier).

Marcus's Take

The technical failure here is systemic, not accidental. You cannot "refactor" your way out of a 49MB page when marketing has a backdoor via GTM. Use webperf.xyz as ammunition in your next sprint planning, but do not expect a leaderboard to fix a broken organizational culture. If your newsroom's site is on this list, your users have likely already switched to GPT-5 text-only summaries.

Ship clean code,

Marcus.

Marcus Webb - Senior Backend Analyst at UsedBy.ai

Related Articles

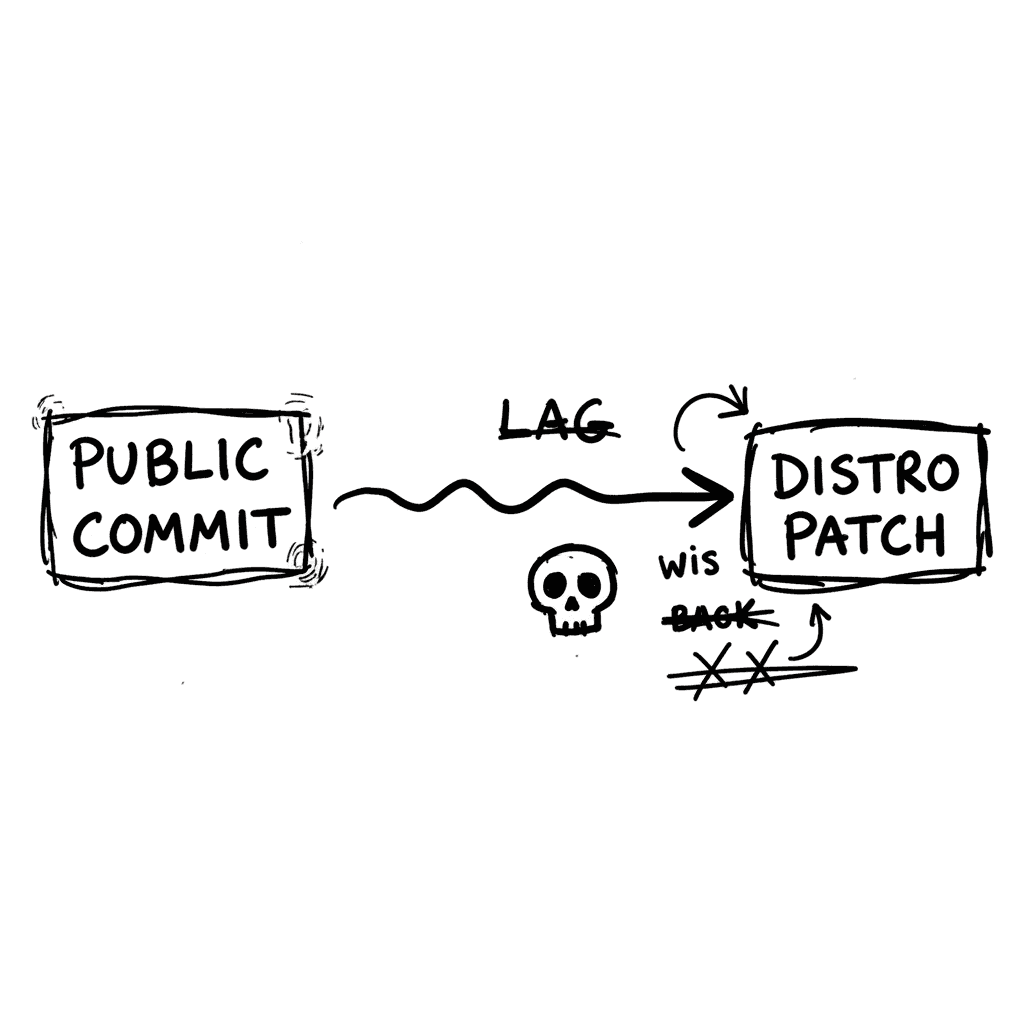

Linux Kernel Security Disclosure Protocol and CVE-2026-31431 Technical Analysis

The Linux kernel security team prioritizes immediate public commits over downstream coordination, creating a systemic "security lag" for major distributions. This policy ensures that fixes reach publi

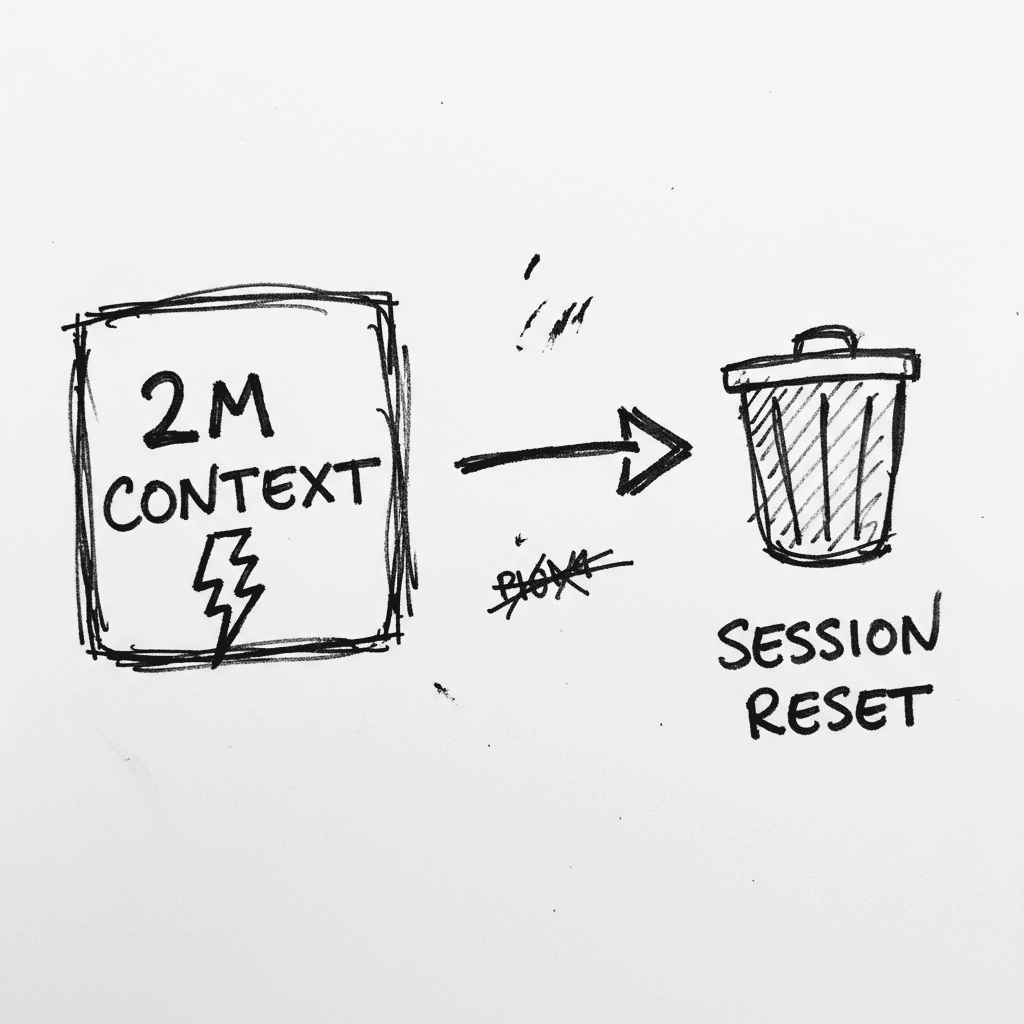

Grok 4.3: High-Throughput Inference and Native Document Synthesis

Grok 4.3 currently leads the frontier model market in raw inference speed, clocked at 202.7 tokens per second (Artificial Analysis, 2026). It combines a 2-million-token context window with the ability

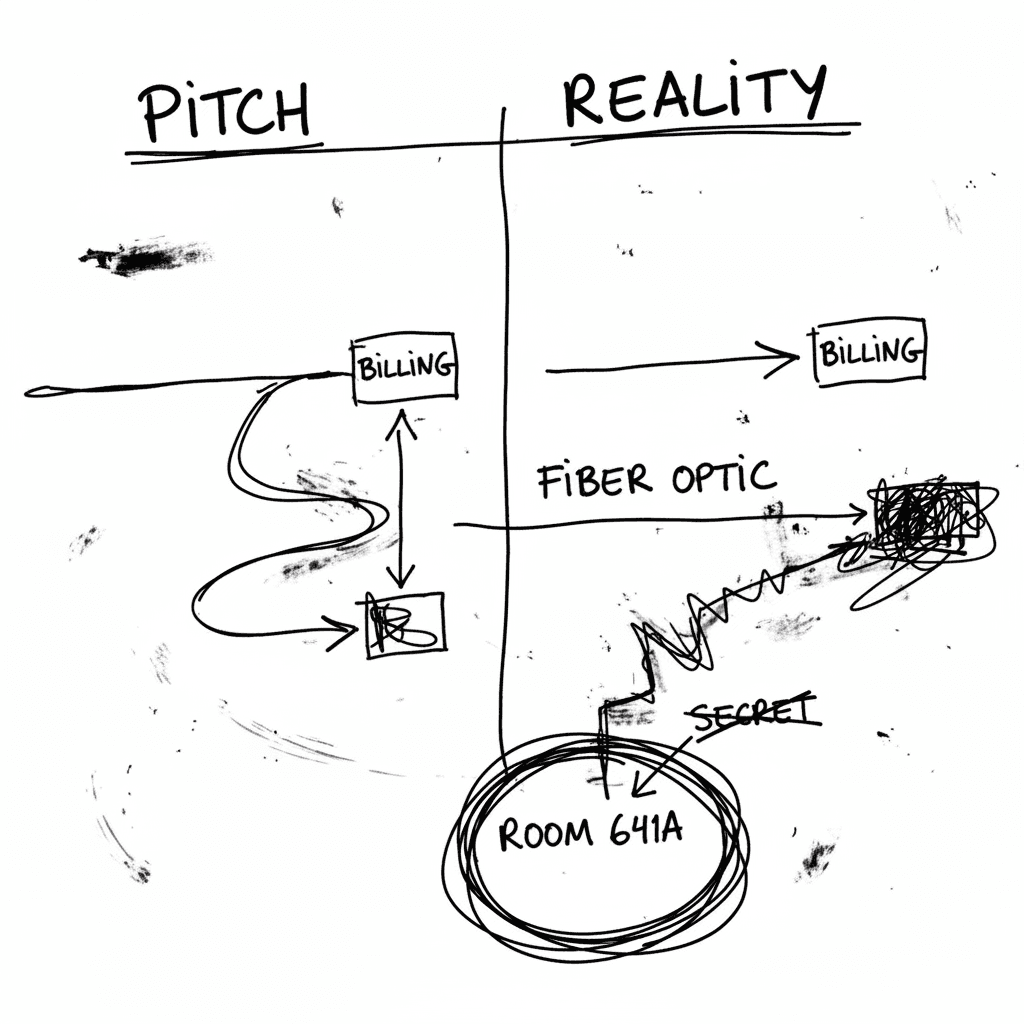

Hardware-Level Surveillance: The Narus STA 6400 and Room 641A

Narus marketed the STA 6400 as a "Semantic Traffic Analyzer" for carrier-grade billing and traffic classification. Mark Klein’s leaked documentation proved it was actually the engine for a "country ta

Stay Ahead of AI Adoption Trends

Get our latest reports and insights delivered to your inbox. No spam, just data.